Neighbor List Benchmarks

This page presents benchmark results for various neighbor list algorithms across different GPU hardware. Results are automatically generated from CSV files in the benchmark_results/ directory.

Warning

These results are indicative only. Actual performance depends on the atomic system topology and on your software and hardware configuration, so we encourage you to benchmark your own systems of interest.

How to Read These Charts

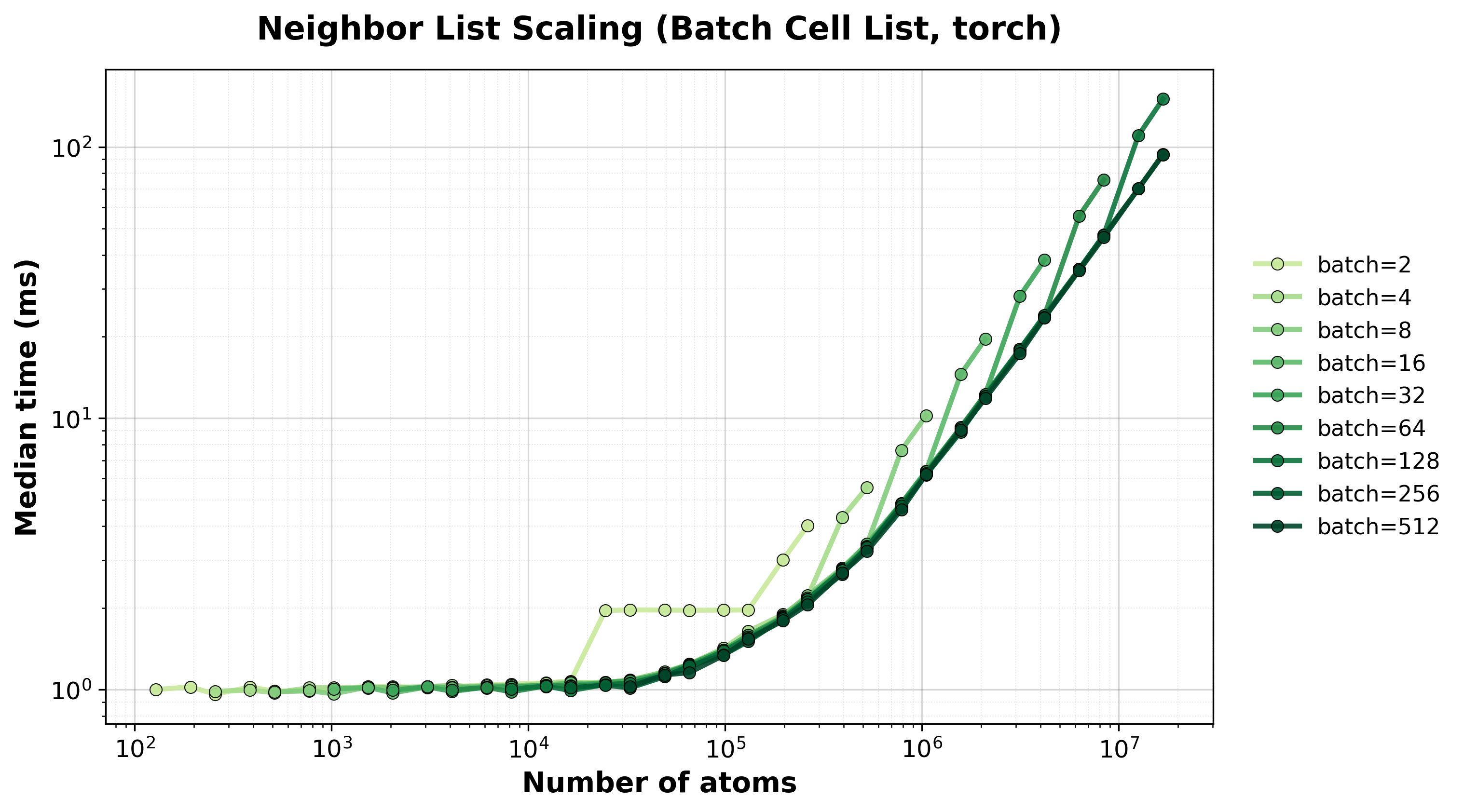

Time Scaling: Median execution time (ms) vs. system size. Lower is better. Cell list algorithms show \(O(N)\) scaling while naive algorithms show \(O(N^2)\).

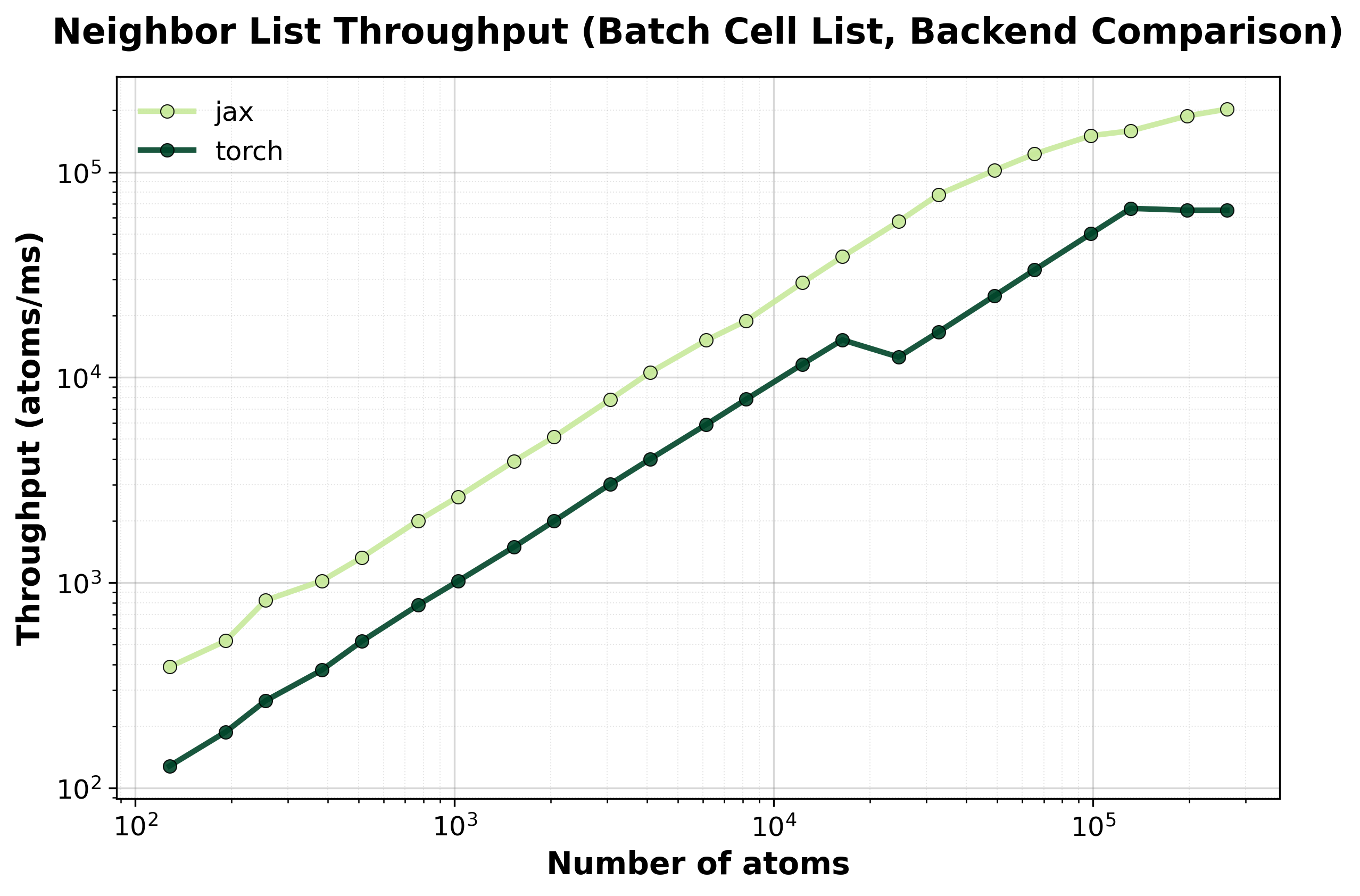

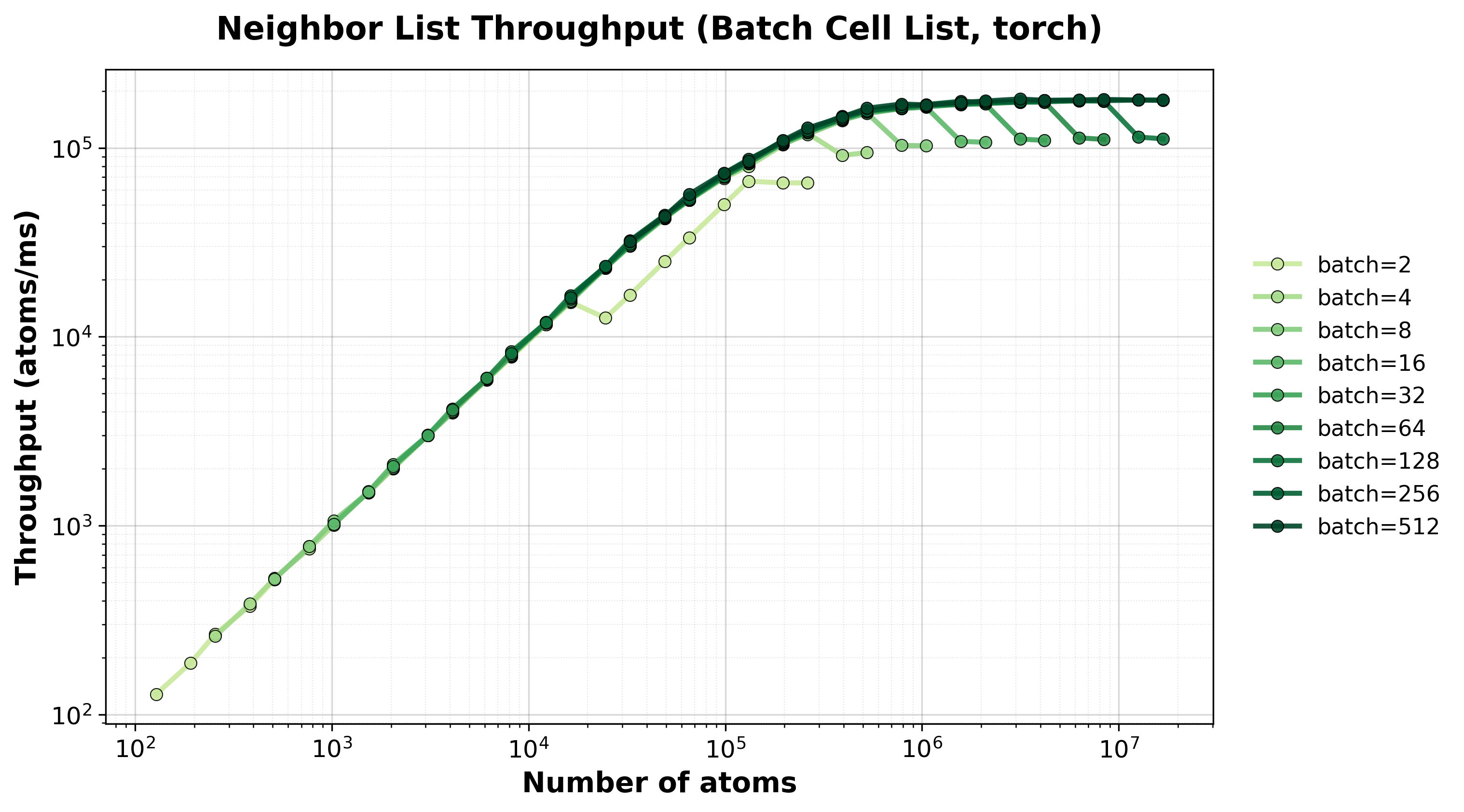

Throughput: Atoms processed per millisecond. Higher is better. Because it is normalized by atom count, this metric lets you compare efficiency across different system sizes.

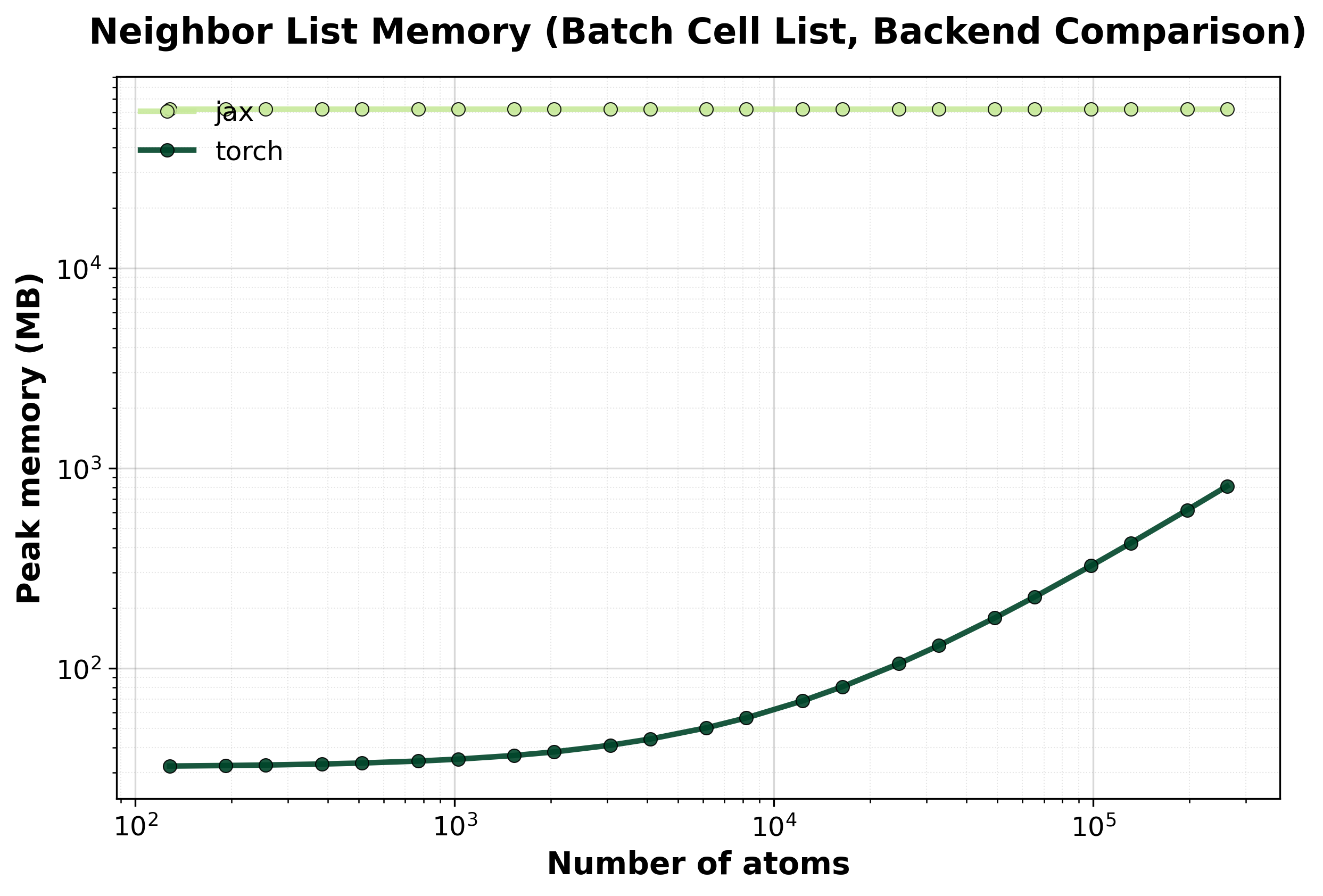

Memory: Peak GPU memory usage (MB) vs. system size. Useful for estimating memory requirements for your target system.
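To make the scaling claims concrete, the sketch below estimates the scaling exponent as the slope of a log-log fit of time against system size, and computes throughput as atoms divided by median time. The function names are illustrative and the timings are synthetic values generated from exact power laws, not measurements from this page.

```python
import math


def scaling_exponent(sizes, times_ms):
    """Least-squares slope of log(time) against log(size)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(t) for t in times_ms]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    num = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    den = sum((x - x_mean) ** 2 for x in xs)
    return num / den


def throughput_atoms_per_ms(total_atoms, median_time_ms):
    """Atoms processed per millisecond (higher is better)."""
    return total_atoms / median_time_ms


# Synthetic timings built from exact power laws (NOT measured data).
sizes = [1_000, 2_000, 4_000, 8_000]
linear_ms = [1e-3 * n for n in sizes]         # behaves like O(N)
quadratic_ms = [1e-6 * n * n for n in sizes]  # behaves like O(N^2)

print(round(scaling_exponent(sizes, linear_ms), 3))     # 1.0
print(round(scaling_exponent(sizes, quadratic_ms), 3))  # 2.0
```

A fitted slope near 1 on your own results would suggest linear scaling, and a slope near 2 would suggest quadratic scaling. Real measurements tend to deviate at small sizes, where fixed overheads dominate.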

Performance Results

Each method below comes with detailed benchmark data and scaling plots:

Naive

Brute-force \(O(N^2)\) algorithm. Best for very small systems where the overhead of cell list construction exceeds the computational savings.
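As a rough illustration of why the brute-force approach scales as \(O(N^2)\), here is a minimal pure-Python sketch that tests every pair of atoms against the cutoff. It is not the nvalchemiops implementation: the function name, the half-list output format, and the absence of periodic boundaries are all simplifying assumptions.

```python
import math


def naive_neighbor_pairs(positions, cutoff):
    """Return all (i, j) pairs with i < j whose distance is <= cutoff.

    Every pair is checked, so the work grows as N * (N - 1) / 2.
    Illustrative only: no periodic boundary conditions are applied.
    """
    pairs = []
    n = len(positions)
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(positions[i], positions[j]) <= cutoff:
                pairs.append((i, j))
    return pairs


positions = [
    (0.0, 0.0, 0.0),
    (3.0, 0.0, 0.0),
    (0.0, 3.0, 0.0),
    (10.0, 10.0, 10.0),  # isolated atom, outside every cutoff sphere
]
print(naive_neighbor_pairs(positions, cutoff=5.0))  # [(0, 1), (0, 2), (1, 2)]
```

There is no setup cost beyond the double loop, which is consistent with the naive method being competitive only for very small systems.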

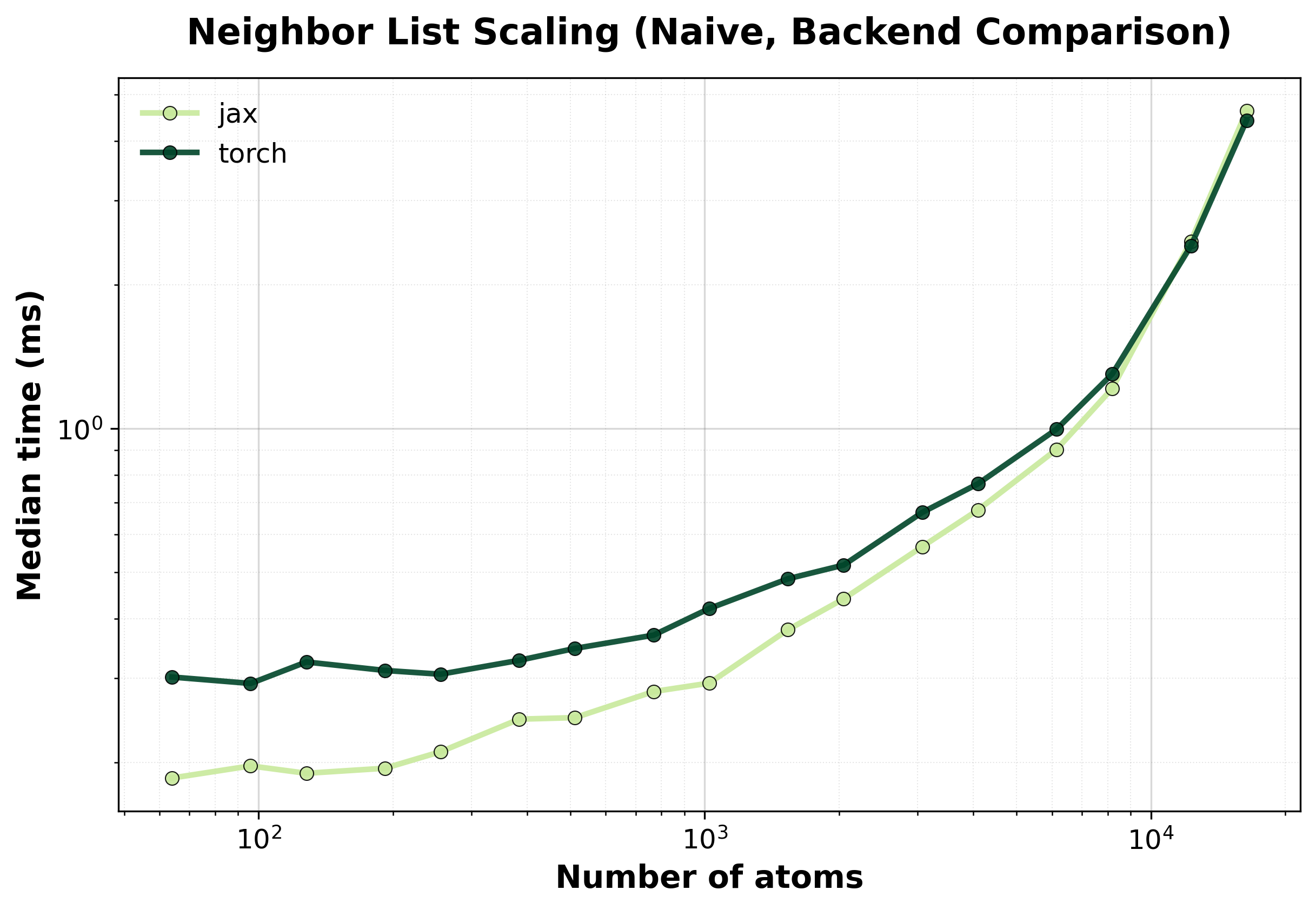

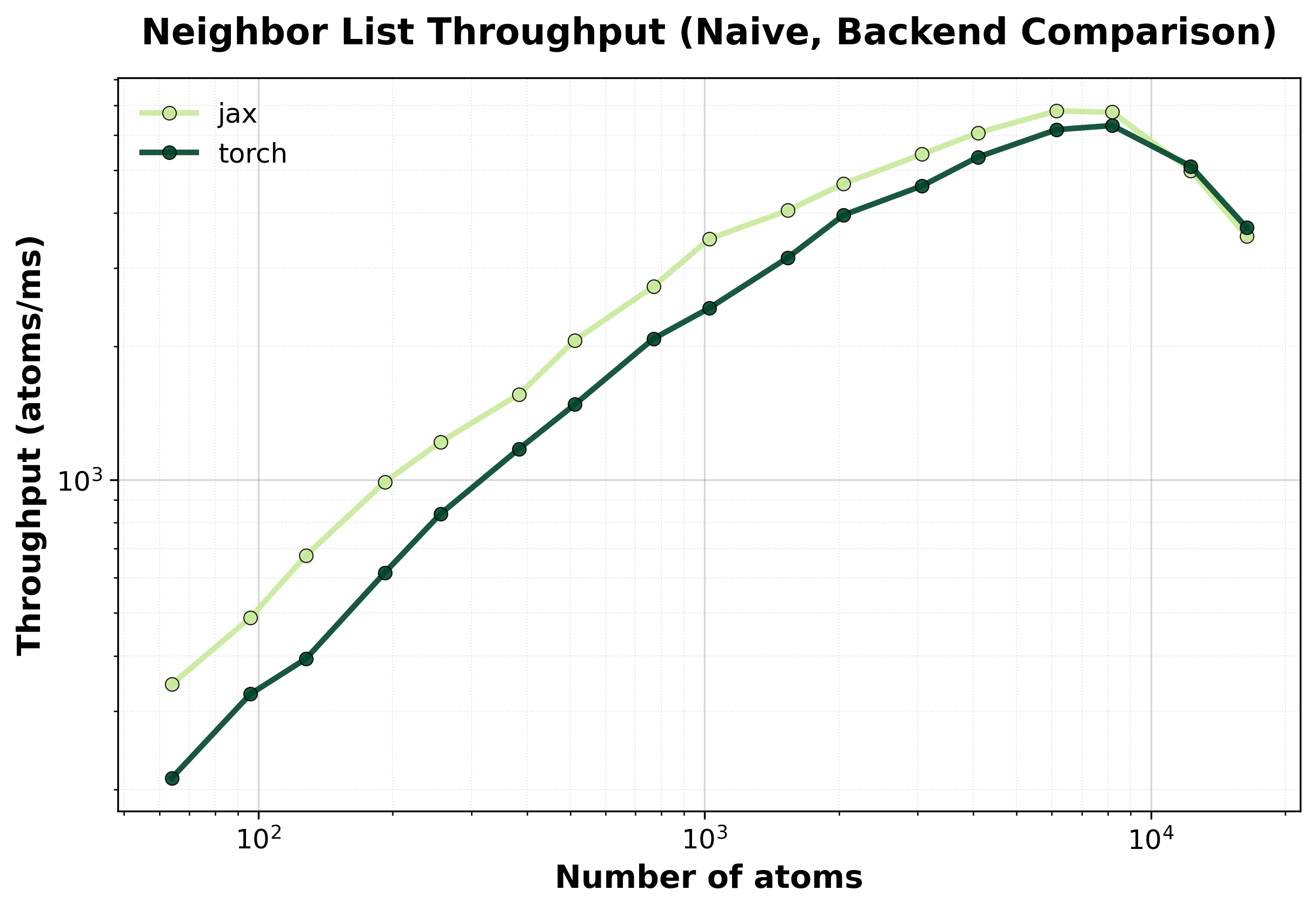

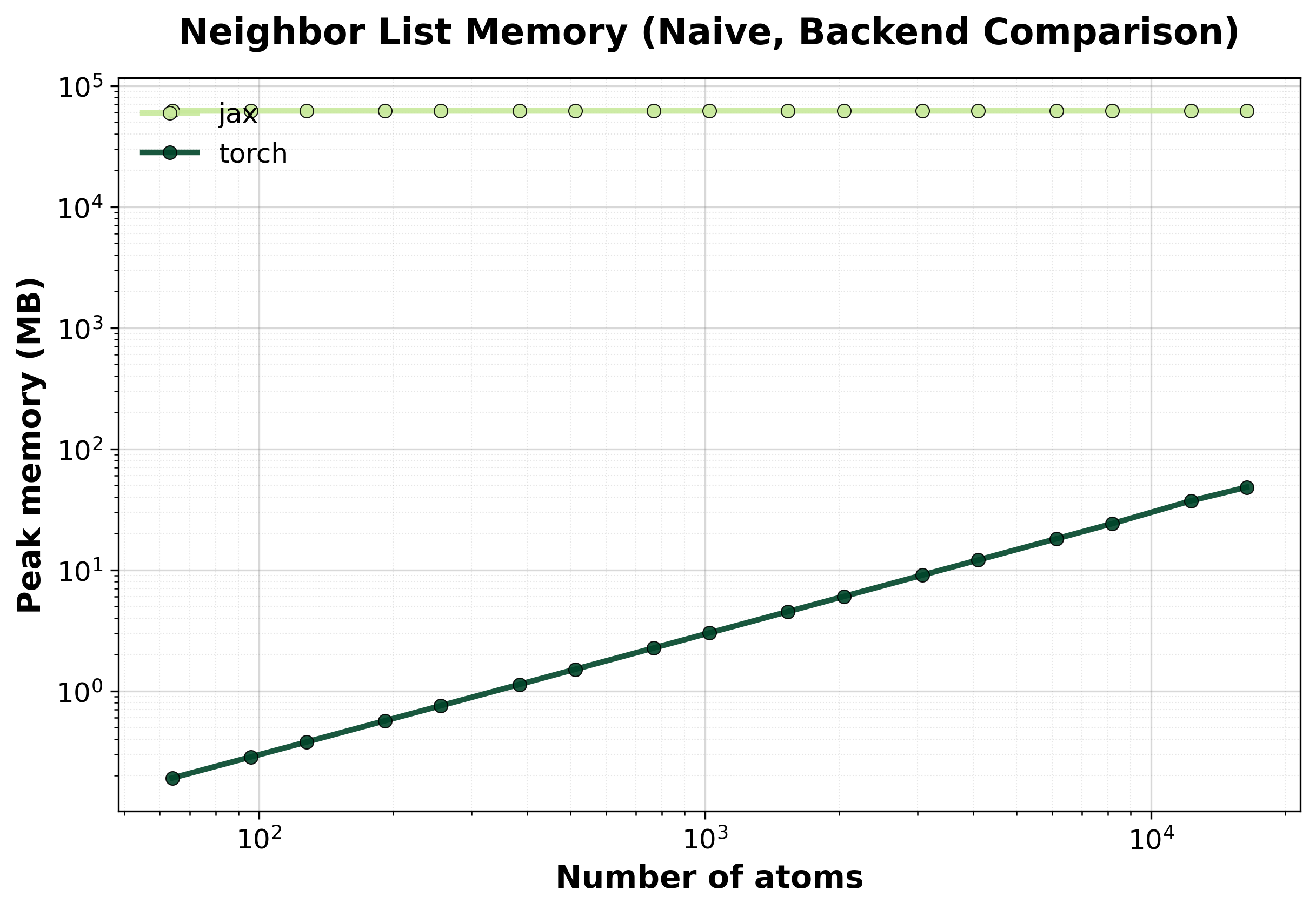

Simple comparison of single (non-batched) system computations between backends, where we scale up the size of the system.

Time Scaling

Median execution time comparison between backends. The \(O(N^2)\) scaling becomes apparent for larger systems.#

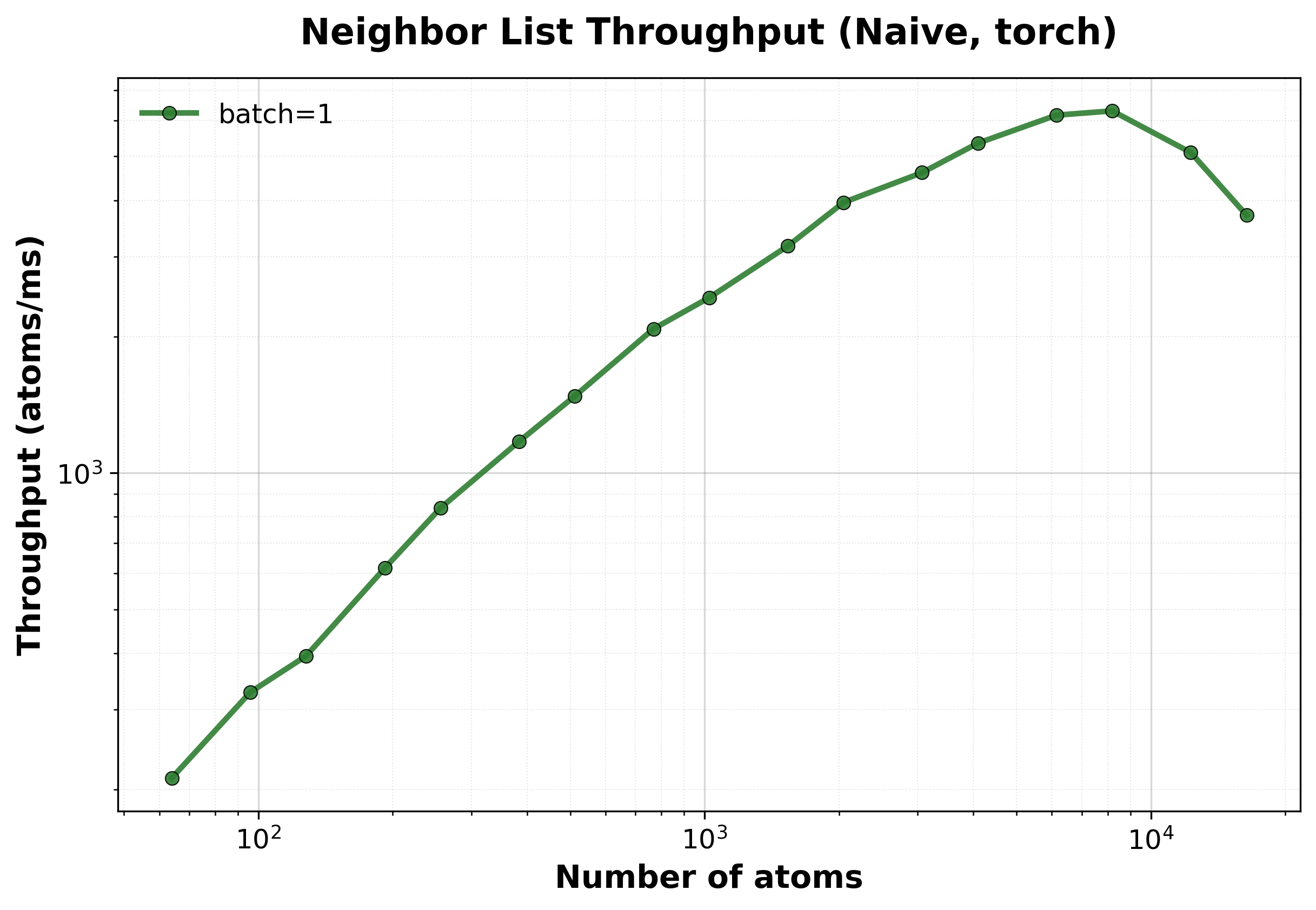

Throughput

Throughput (atoms/ms) comparison between backends.#

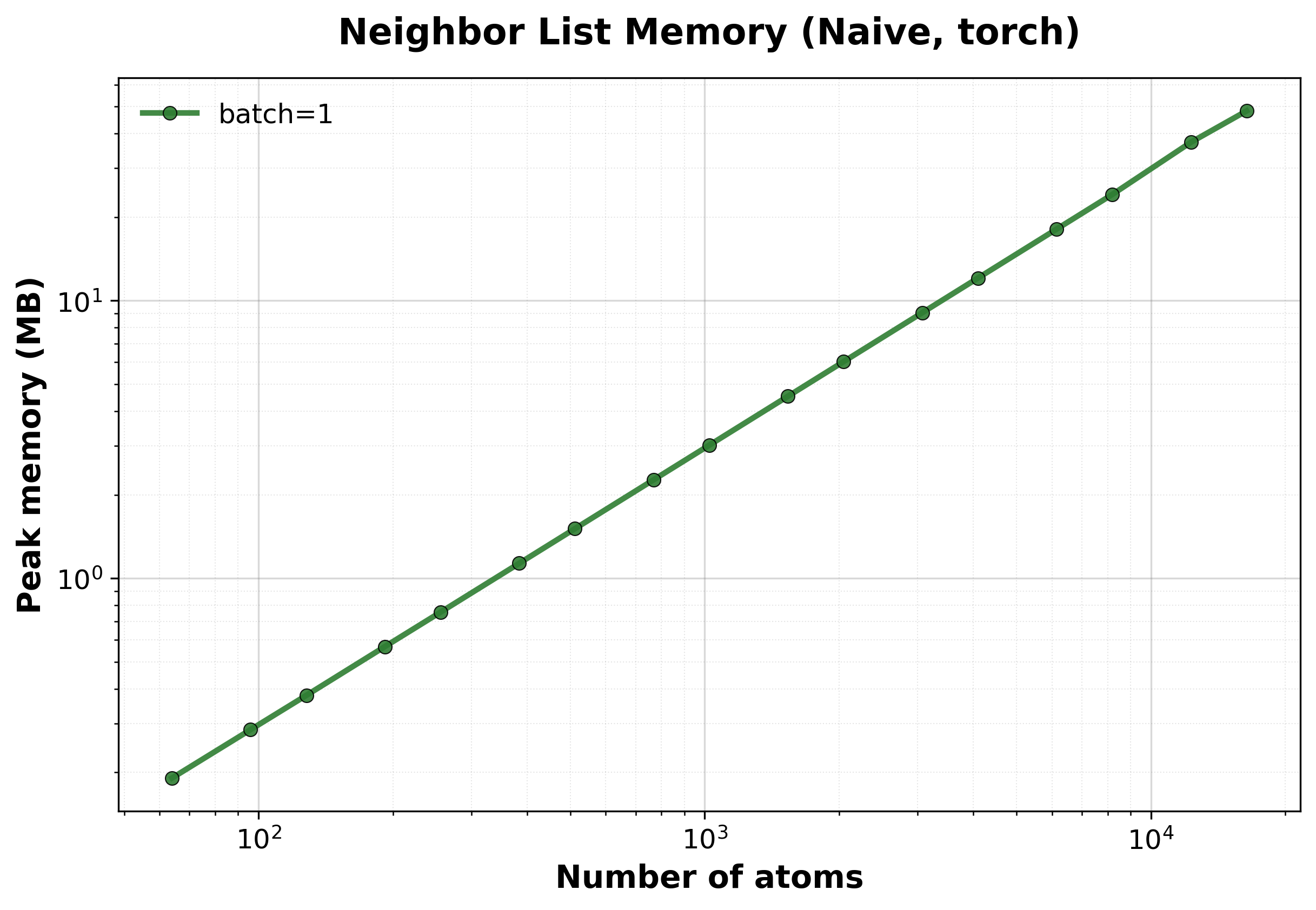

Memory Usage

Peak GPU memory consumption comparison between backends.#

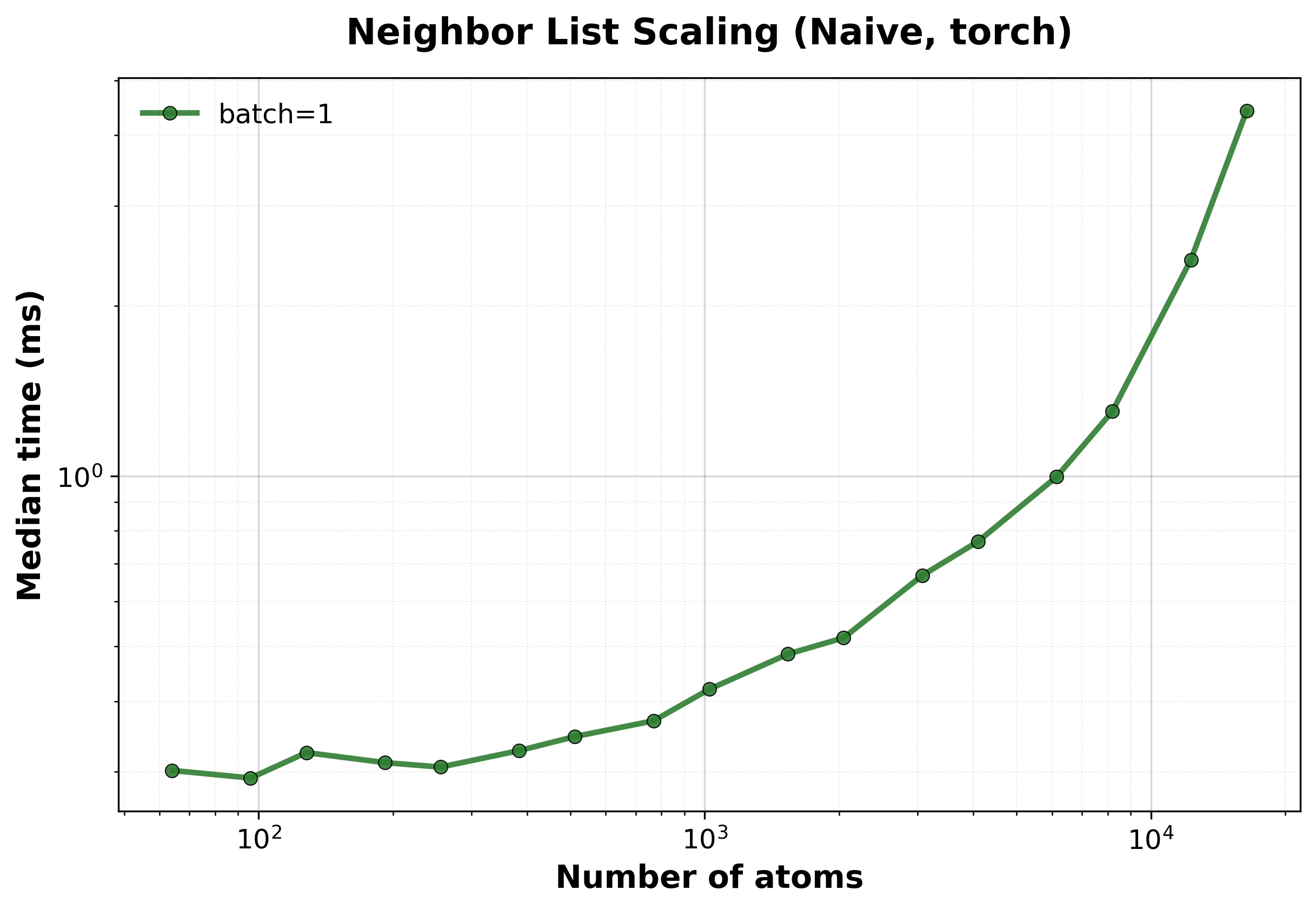

Scaling of the naive algorithm with the nvalchemiops Torch backend, showing how performance changes with batch size.

Time Scaling

Execution time scaling for different batch sizes.

Throughput

Throughput (atoms/ms) for different batch sizes.

Memory Usage

Peak GPU memory consumption for different batch sizes.

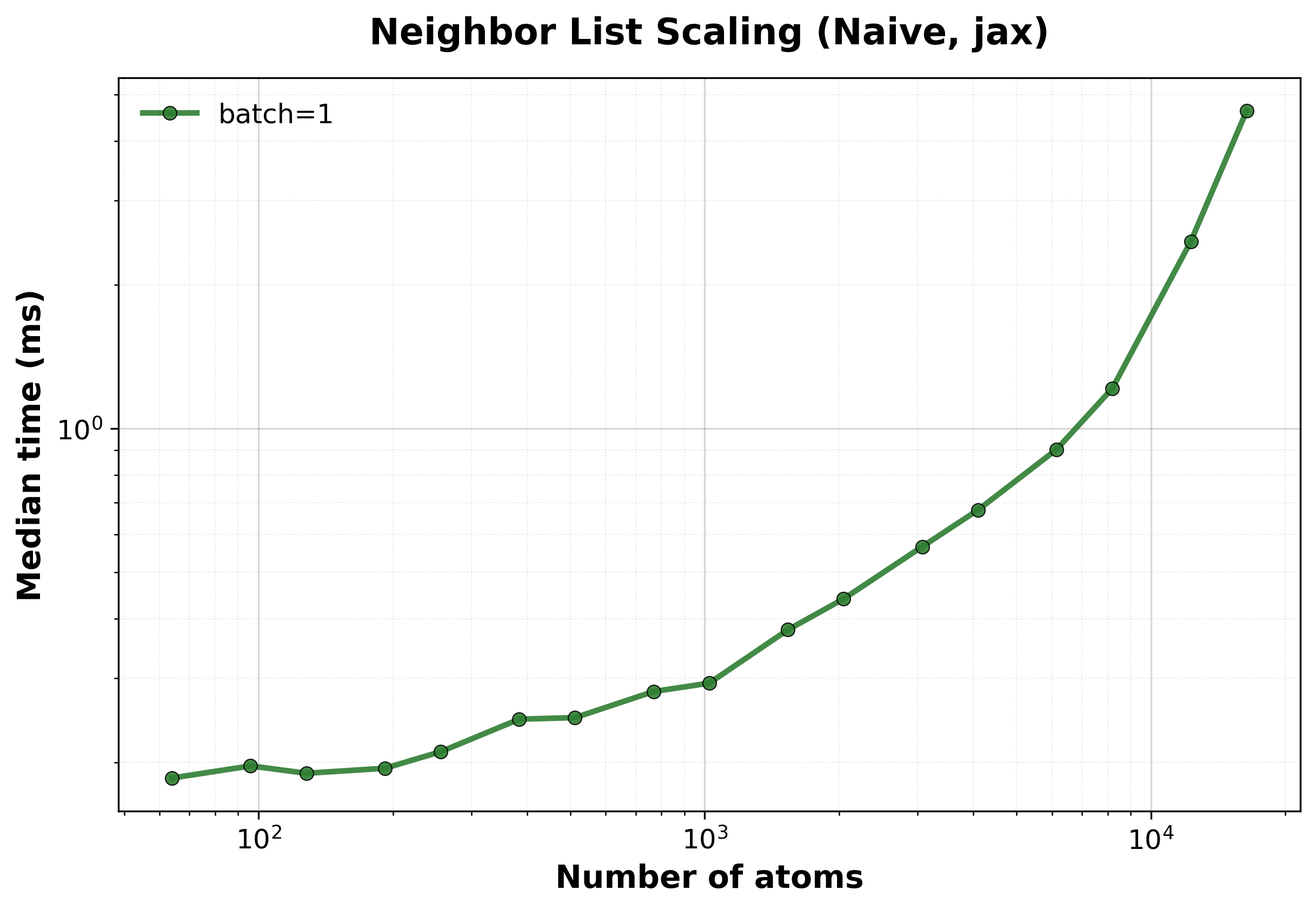

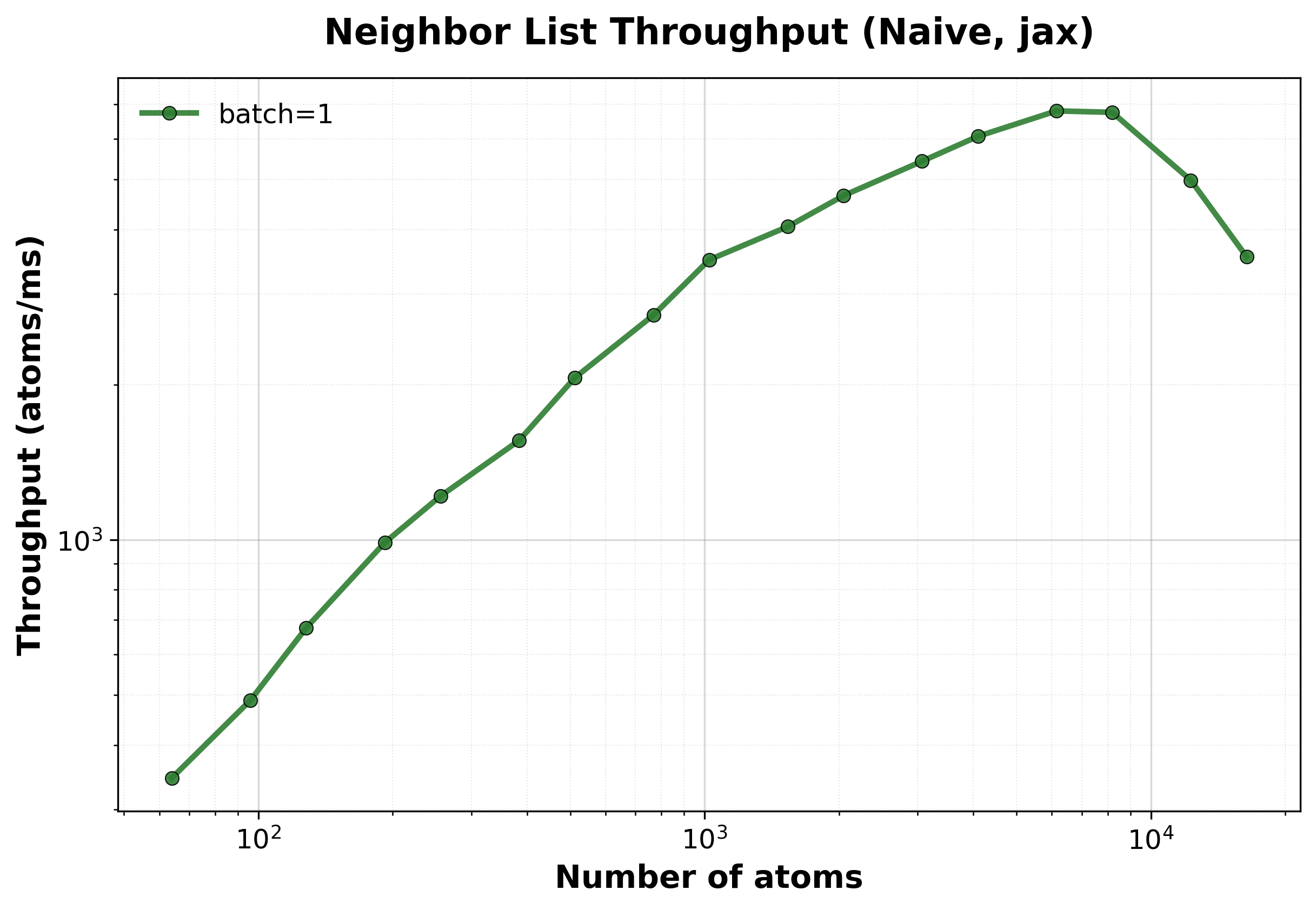

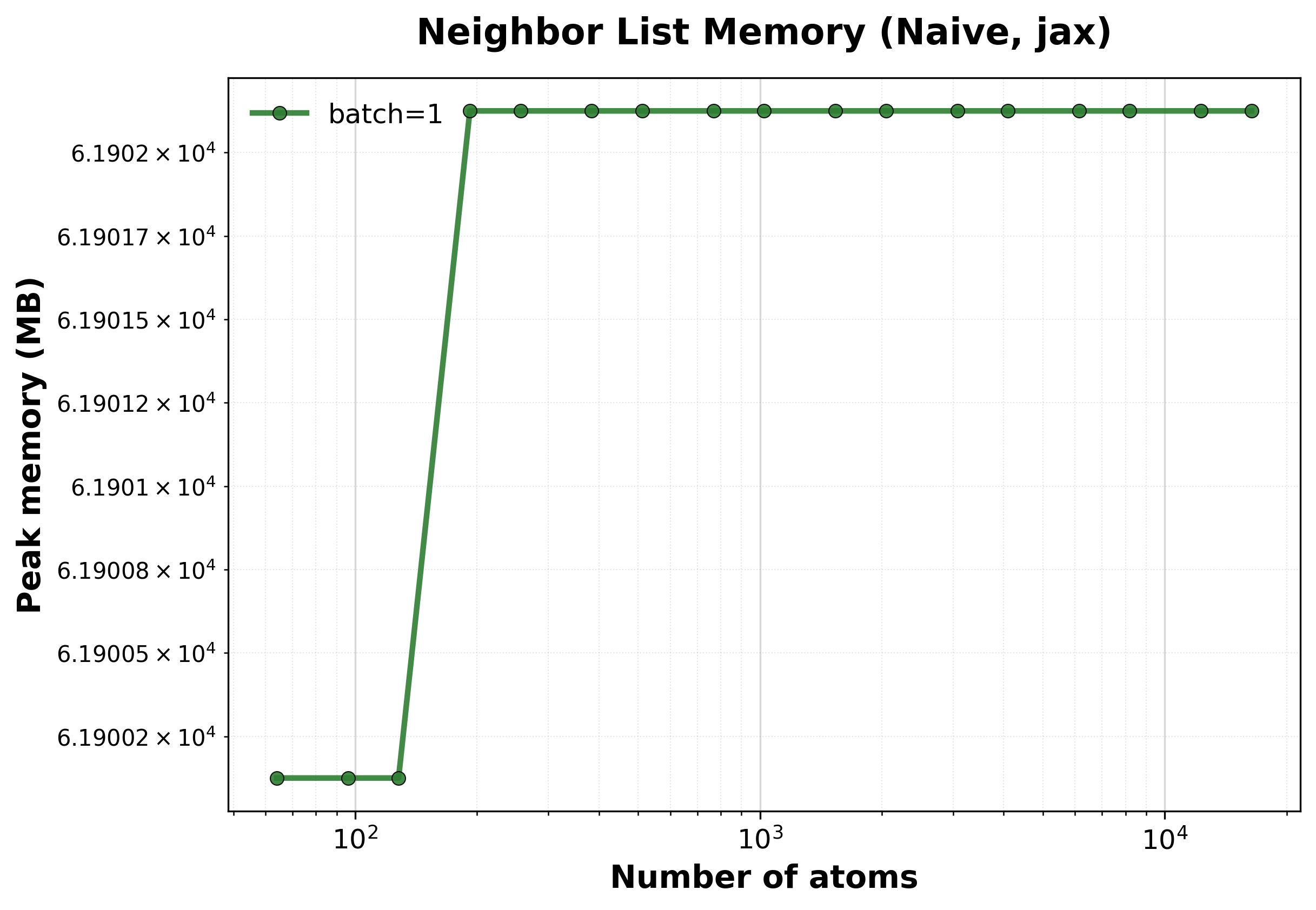

Scaling of the naive algorithm with the nvalchemiops JAX backend, showing how performance changes with batch size.

Time Scaling

Execution time scaling for different batch sizes.

Throughput

Throughput (atoms/ms) for different batch sizes.

Memory Usage

Peak GPU memory consumption for different batch sizes.

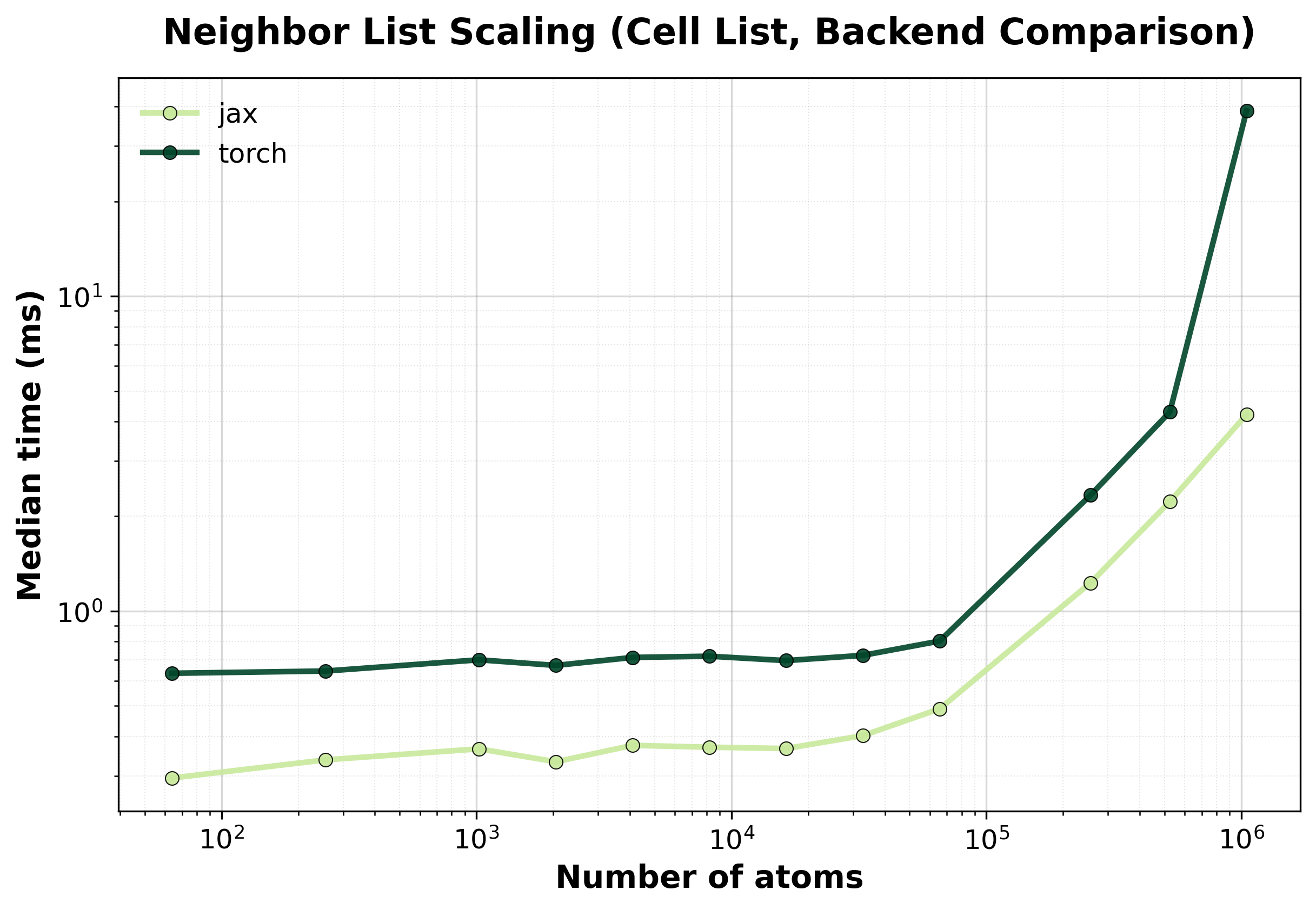

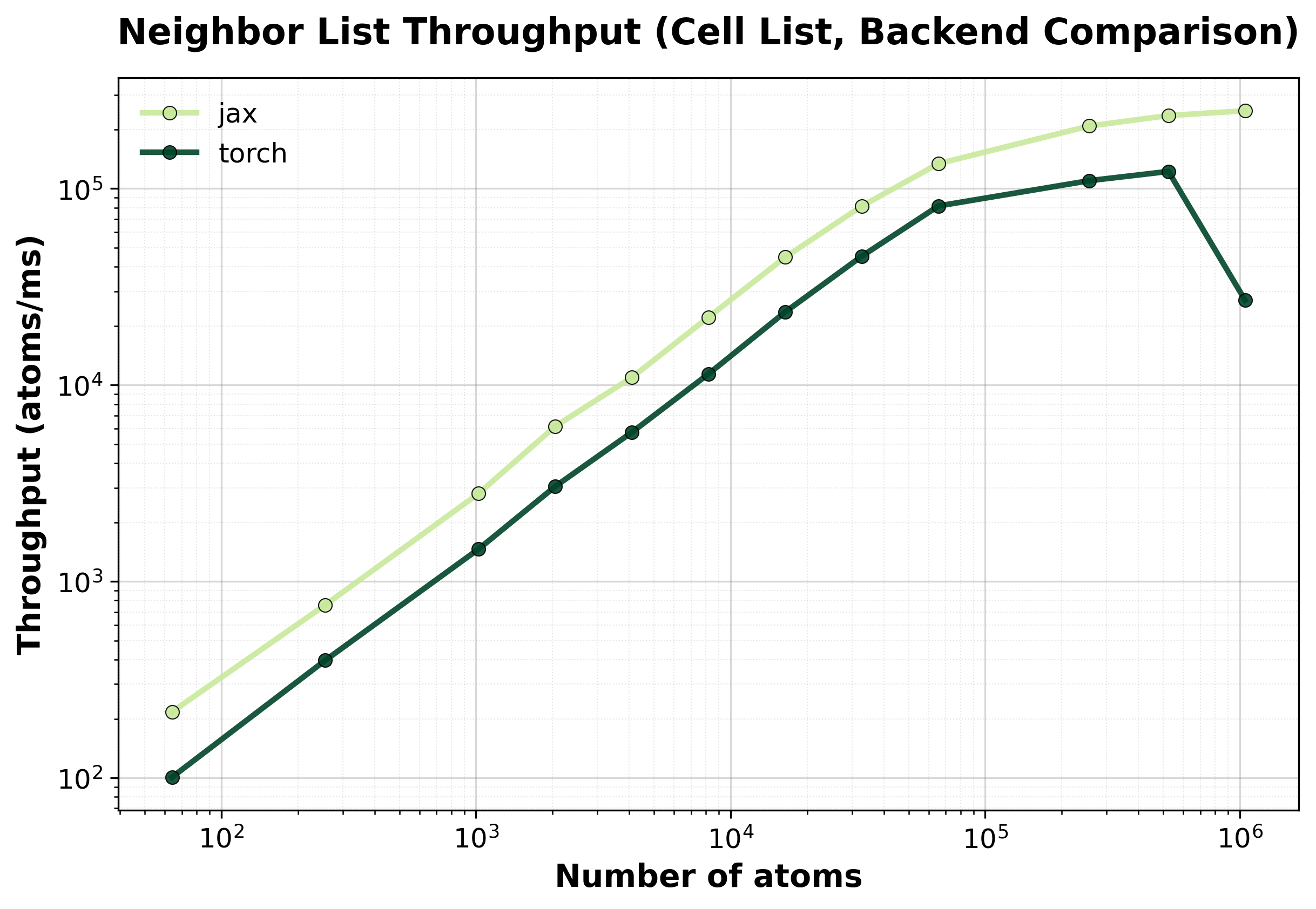

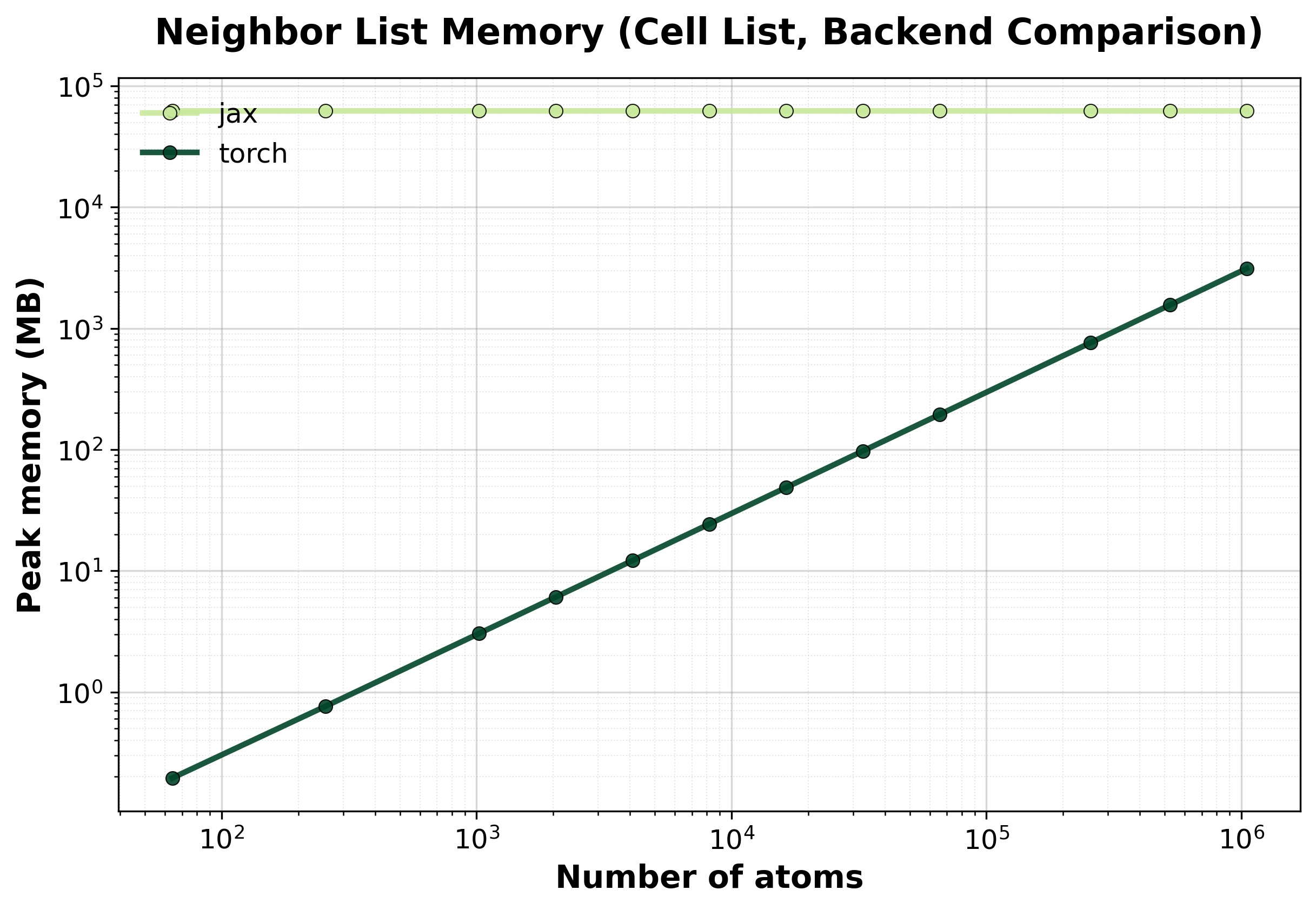

Cell List

Spatial hashing \(O(N)\) algorithm. Recommended for medium to large systems where computational efficiency is critical.
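The idea behind the \(O(N)\) scaling can be sketched as follows: atoms are binned into cubic cells whose edge equals the cutoff, so each atom only needs to be compared against atoms in its own cell and the 26 adjacent cells. This is a simplified pure-Python sketch under stated assumptions (non-periodic system, dictionary-based hashing, hypothetical function name), not the GPU implementation benchmarked here.

```python
import math
from collections import defaultdict
from itertools import product


def cell_list_neighbor_pairs(positions, cutoff):
    """Return sorted (i, j) pairs with i < j whose distance is <= cutoff.

    Atoms are hashed into cubic cells of edge length `cutoff`; any two atoms
    within the cutoff must then lie in the same or in adjacent cells.
    Illustrative only: no periodic boundary conditions are applied.
    """
    cells = defaultdict(list)
    for index, (x, y, z) in enumerate(positions):
        key = (
            math.floor(x / cutoff),
            math.floor(y / cutoff),
            math.floor(z / cutoff),
        )
        cells[key].append(index)

    pairs = []
    offsets = list(product((-1, 0, 1), repeat=3))  # 27 cells incl. the home cell
    for (cx, cy, cz), members in cells.items():
        for dx, dy, dz in offsets:
            # .get() avoids creating empty cells while iterating the dict.
            neighbors = cells.get((cx + dx, cy + dy, cz + dz))
            if not neighbors:
                continue
            for i in members:
                for j in neighbors:
                    # i < j keeps each pair exactly once.
                    if i < j and math.dist(positions[i], positions[j]) <= cutoff:
                        pairs.append((i, j))
    return sorted(pairs)


# Small regular grid of points, used purely as example input.
grid = [(2.0 * a, 2.0 * b, 2.0 * c) for a in range(4) for b in range(4) for c in range(4)]
print(len(cell_list_neighbor_pairs(grid, cutoff=2.5)))
```

At roughly uniform density the number of atoms per cell is bounded, so the total work grows linearly with the number of atoms. The binning step is the fixed overhead that makes this approach less attractive for very small systems.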

Comparison of single-system (non-batched) computations between backends as the system size increases.

Time Scaling

Median execution time comparison between backends. Shows near-linear \(O(N)\) scaling for large systems.

Throughput

Throughput (atoms/ms) comparison between backends.

Memory Usage

Peak GPU memory consumption comparison between backends.

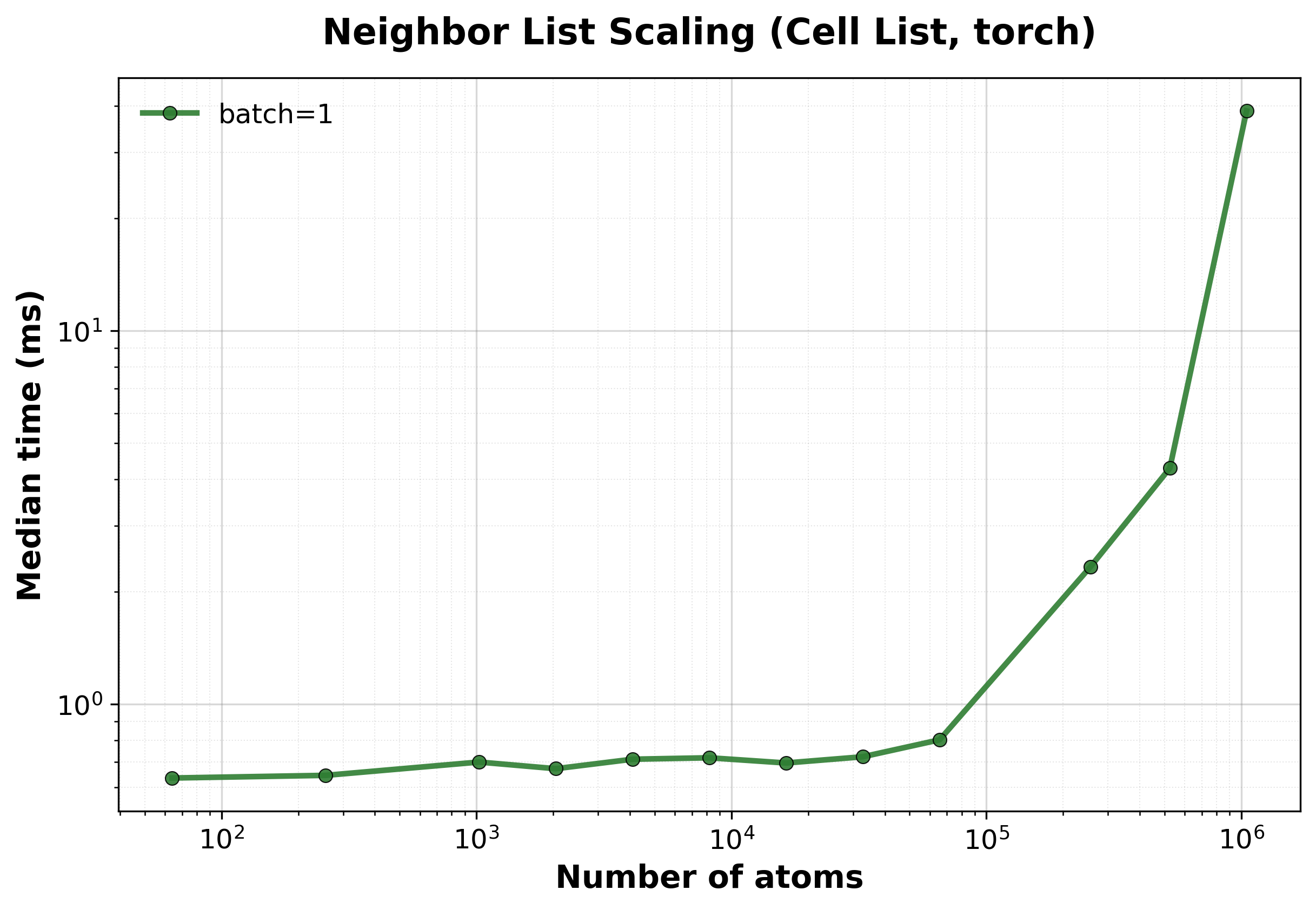

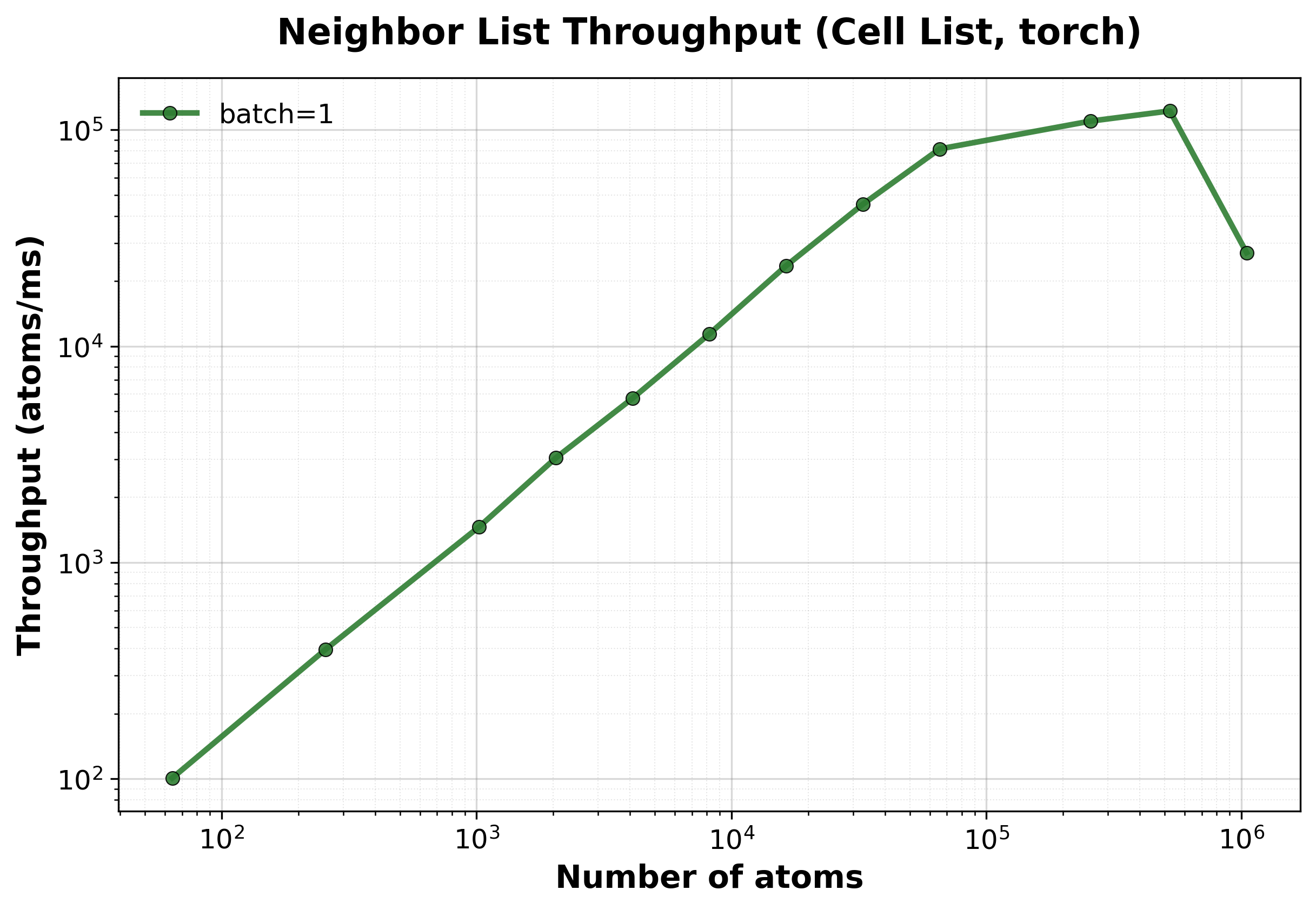

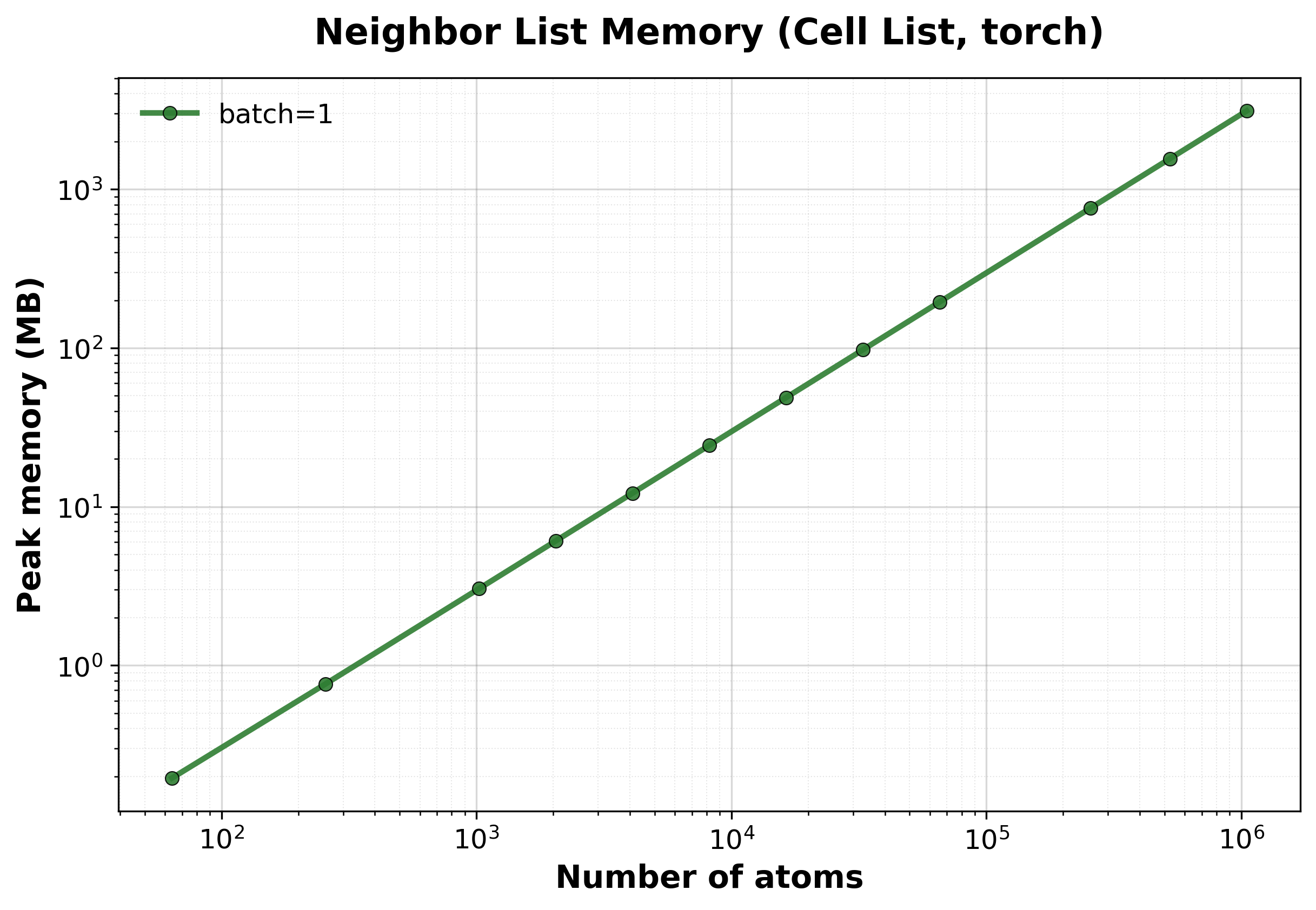

Scaling of the cell list algorithm with the nvalchemiops Torch backend, showing how performance changes with batch size.

Time Scaling

Execution time scaling for different batch sizes.

Throughput

Throughput (atoms/ms) for different batch sizes.

Memory Usage

Peak GPU memory consumption for different batch sizes.

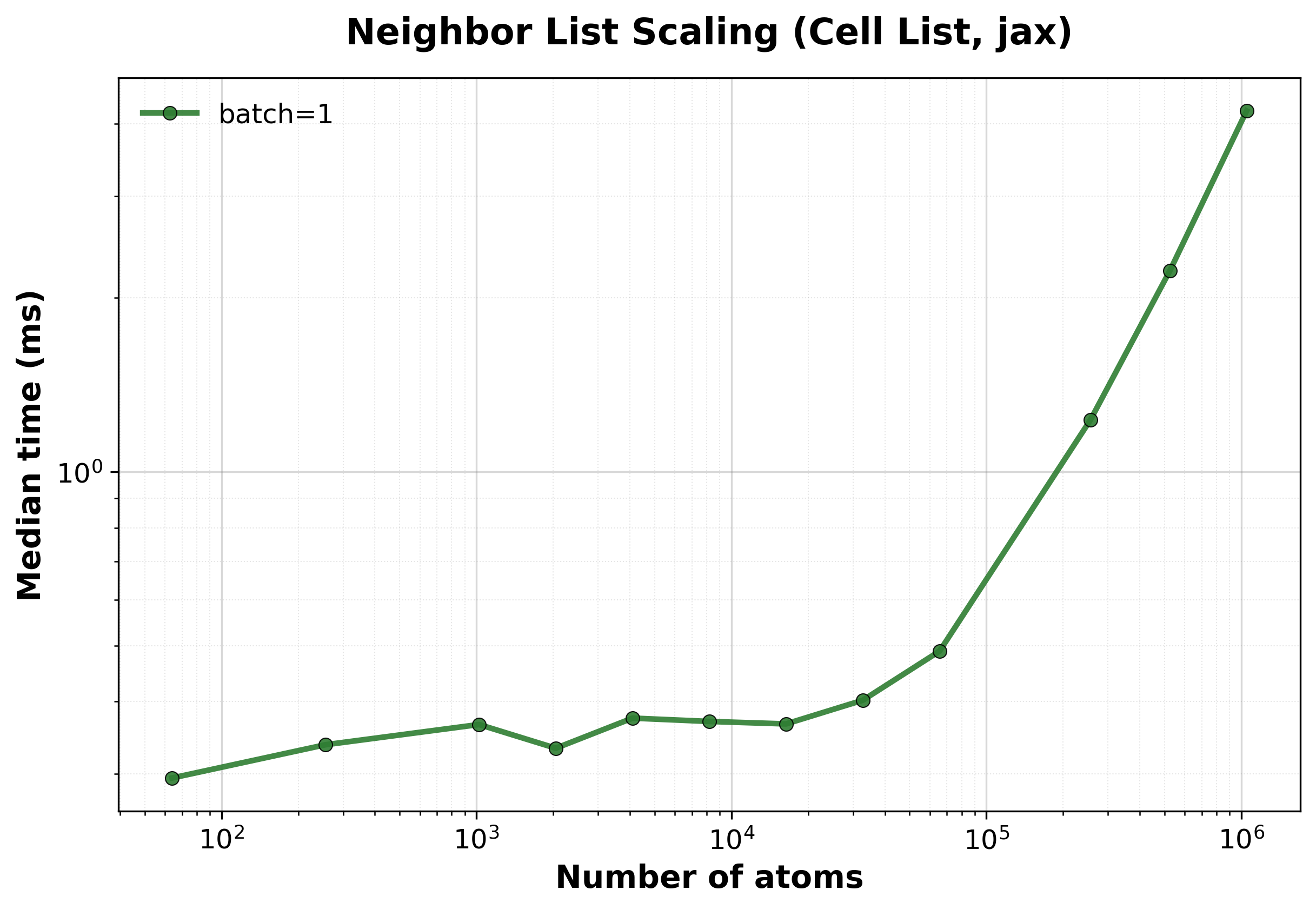

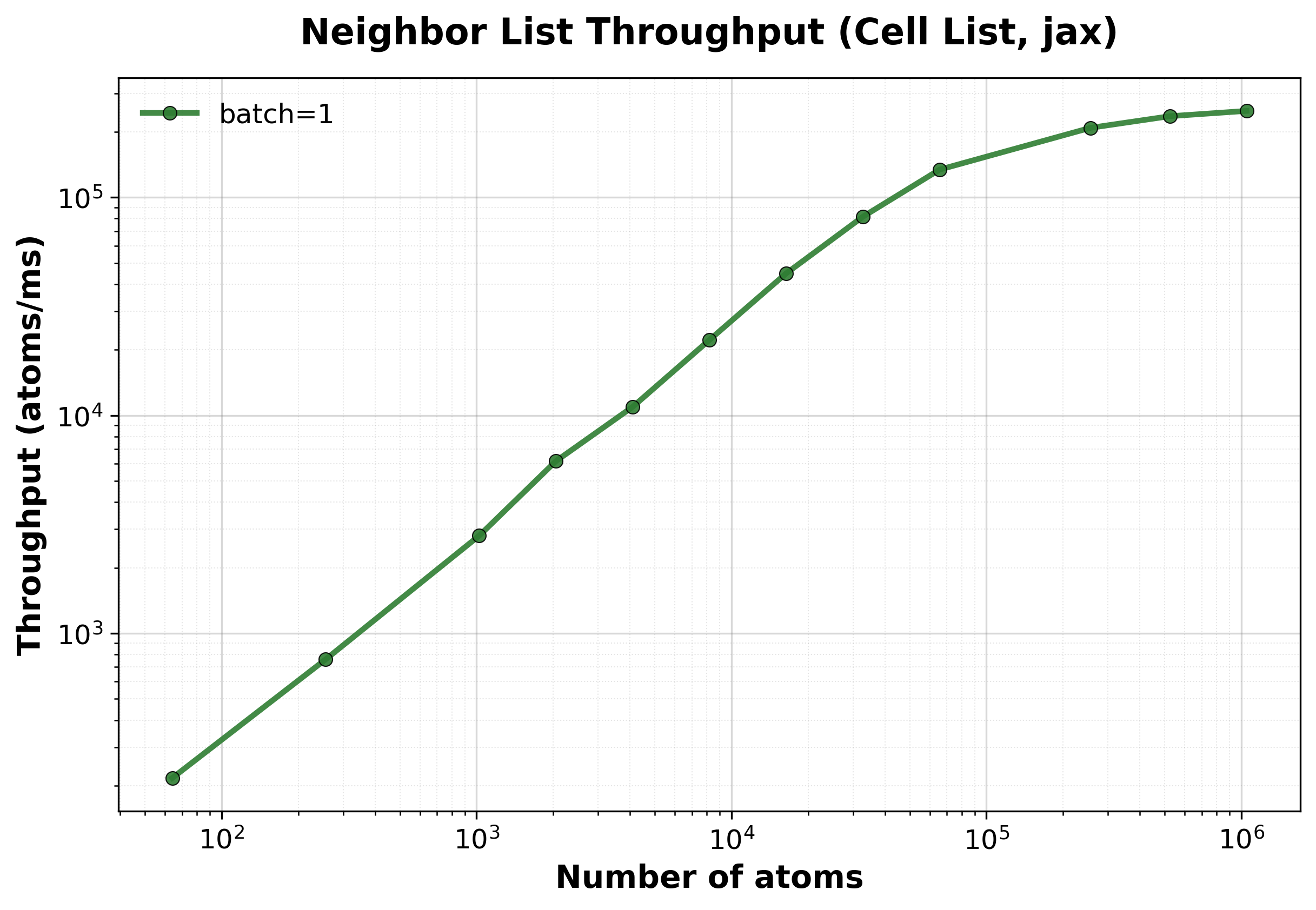

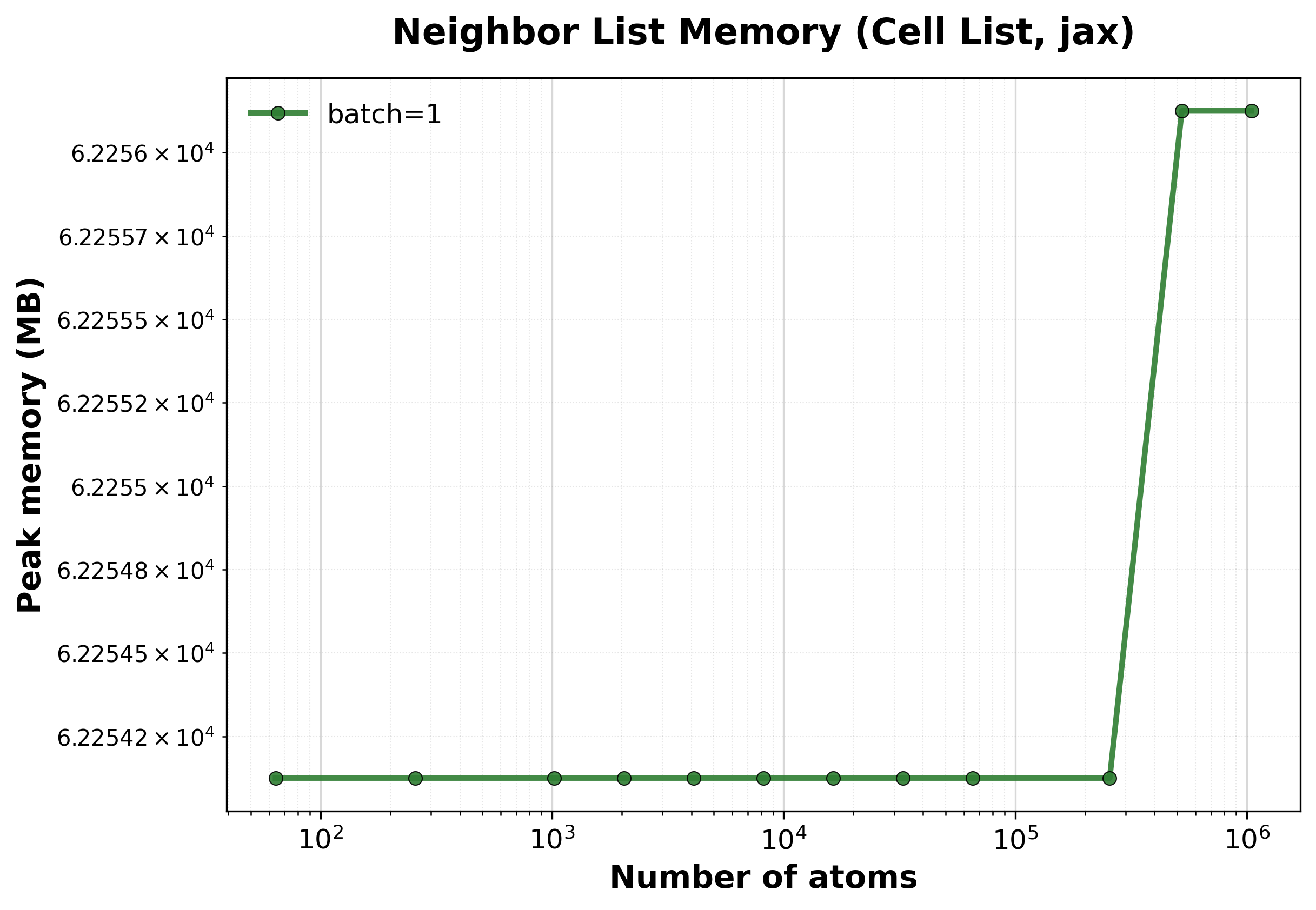

Scaling of the cell list algorithm with the nvalchemiops JAX backend, showing how performance changes with batch size.

Time Scaling

Execution time scaling for different batch sizes.

Throughput

Throughput (atoms/ms) for different batch sizes.

Memory Usage

Peak GPU memory consumption for different batch sizes.

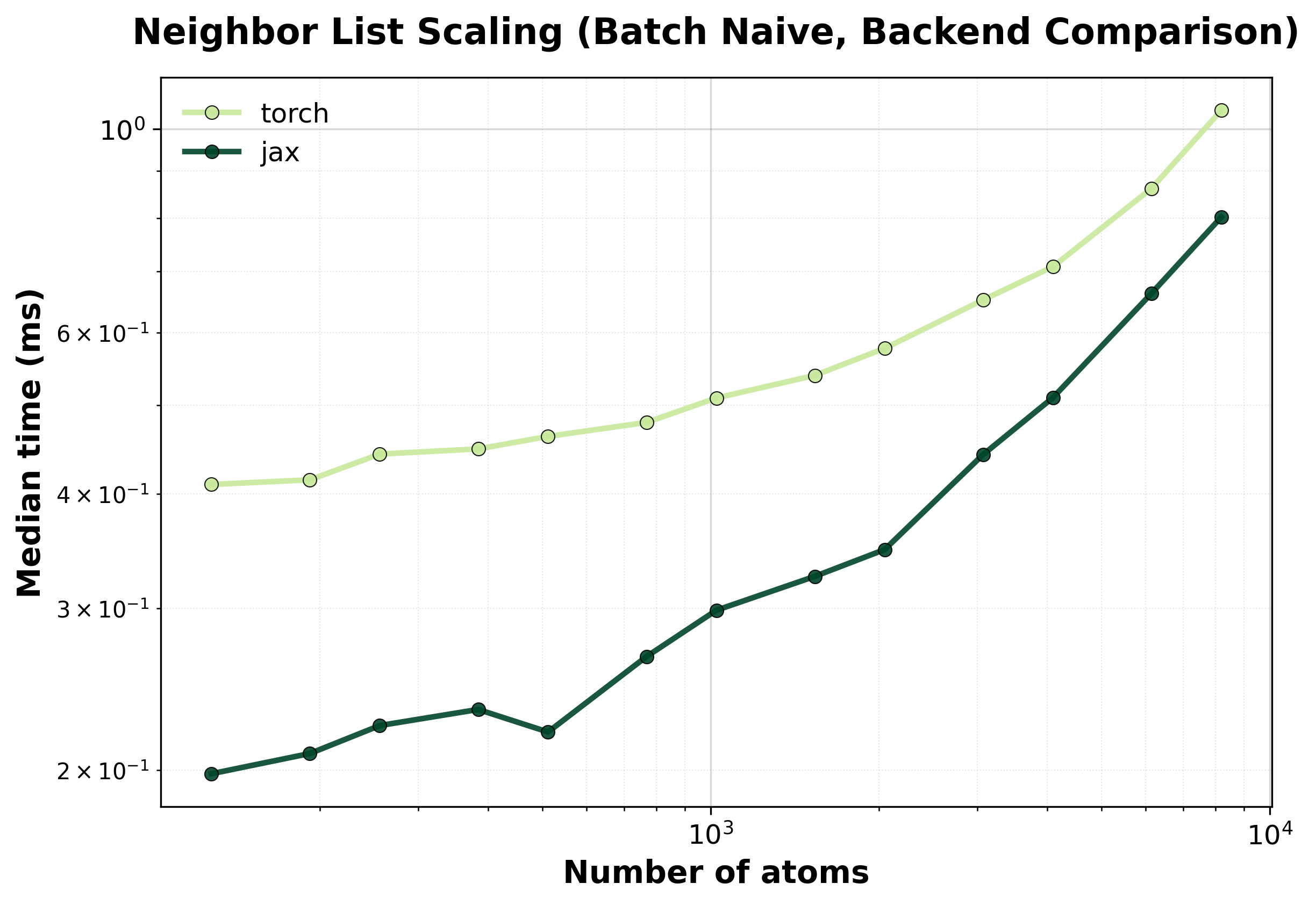

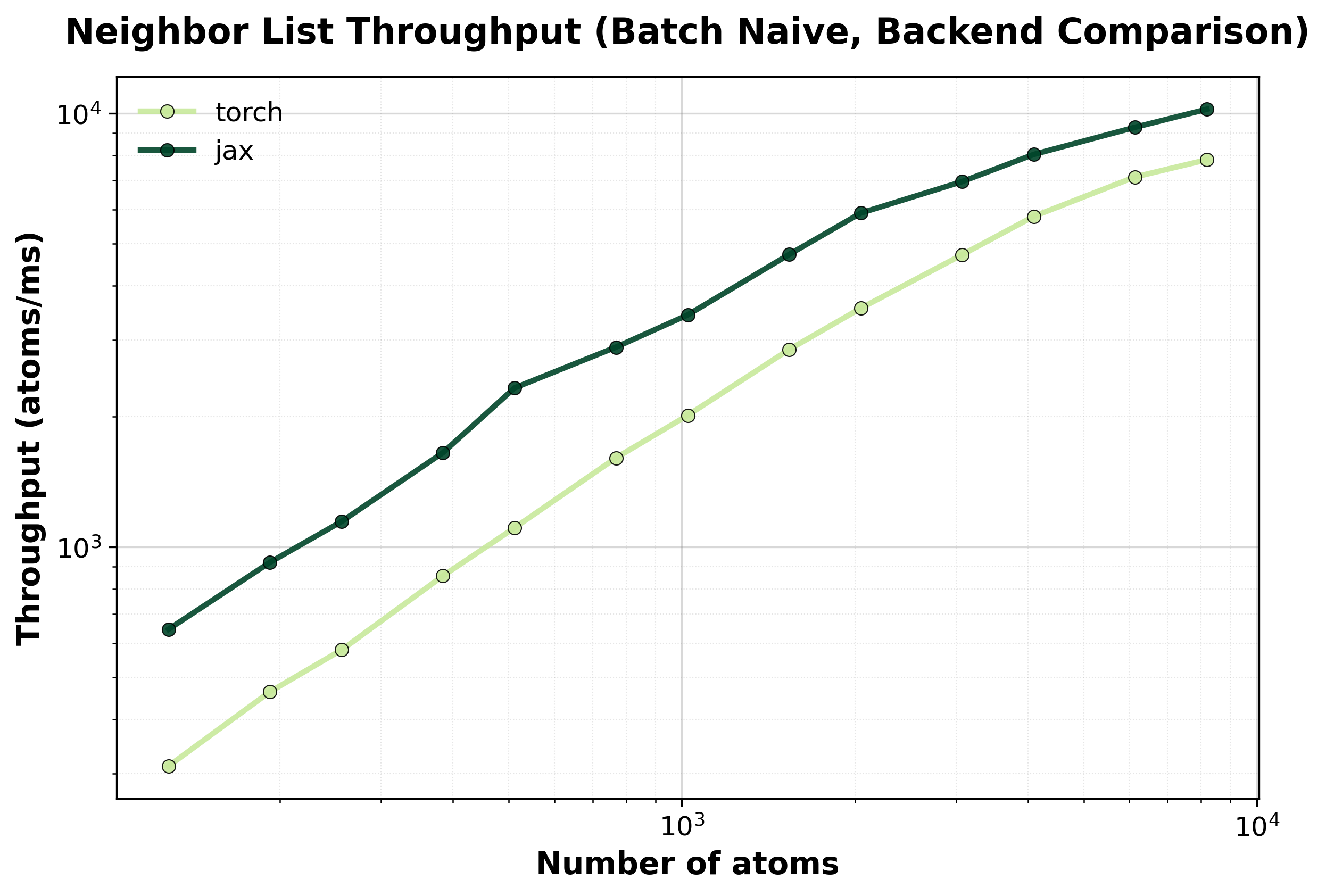

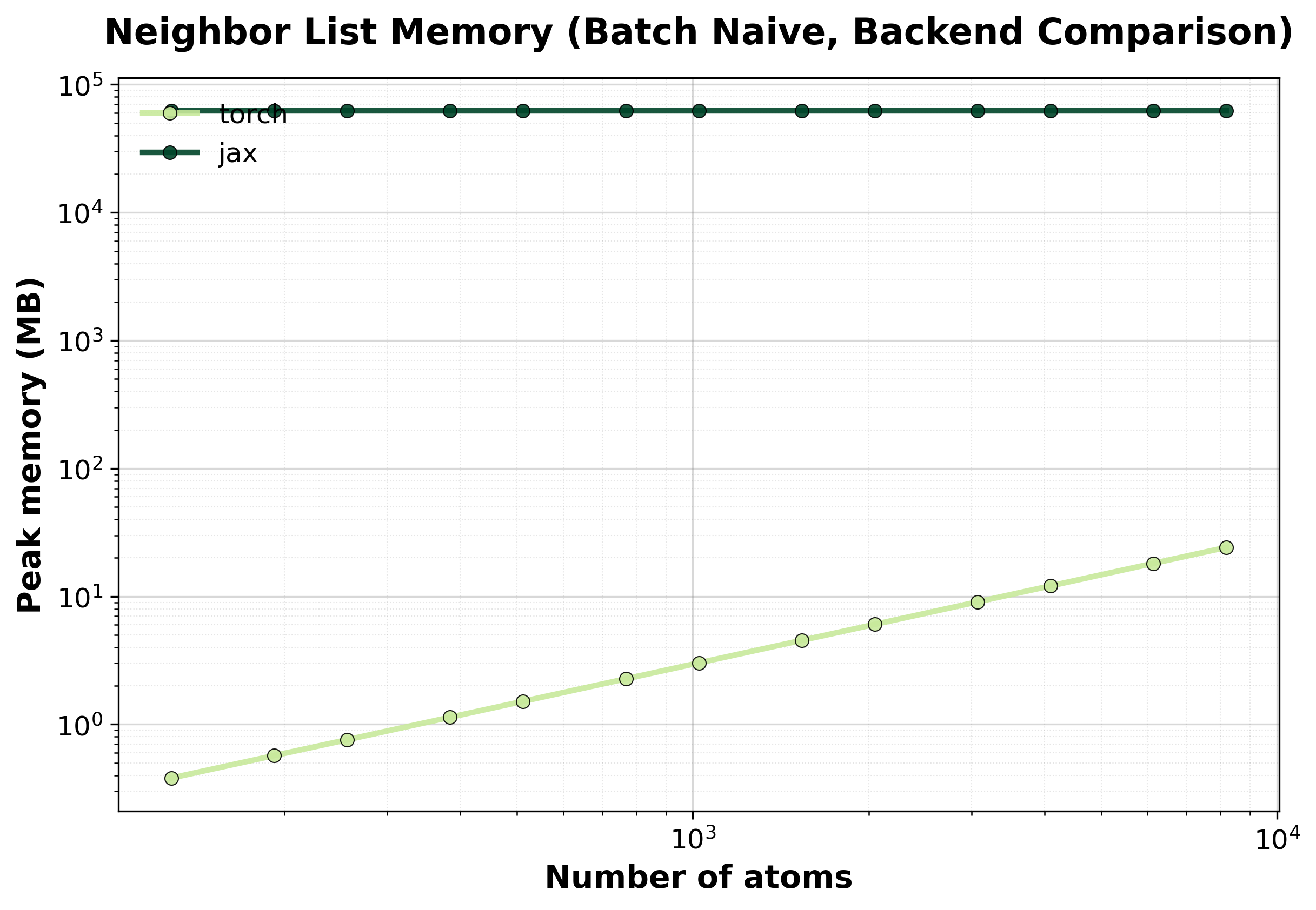

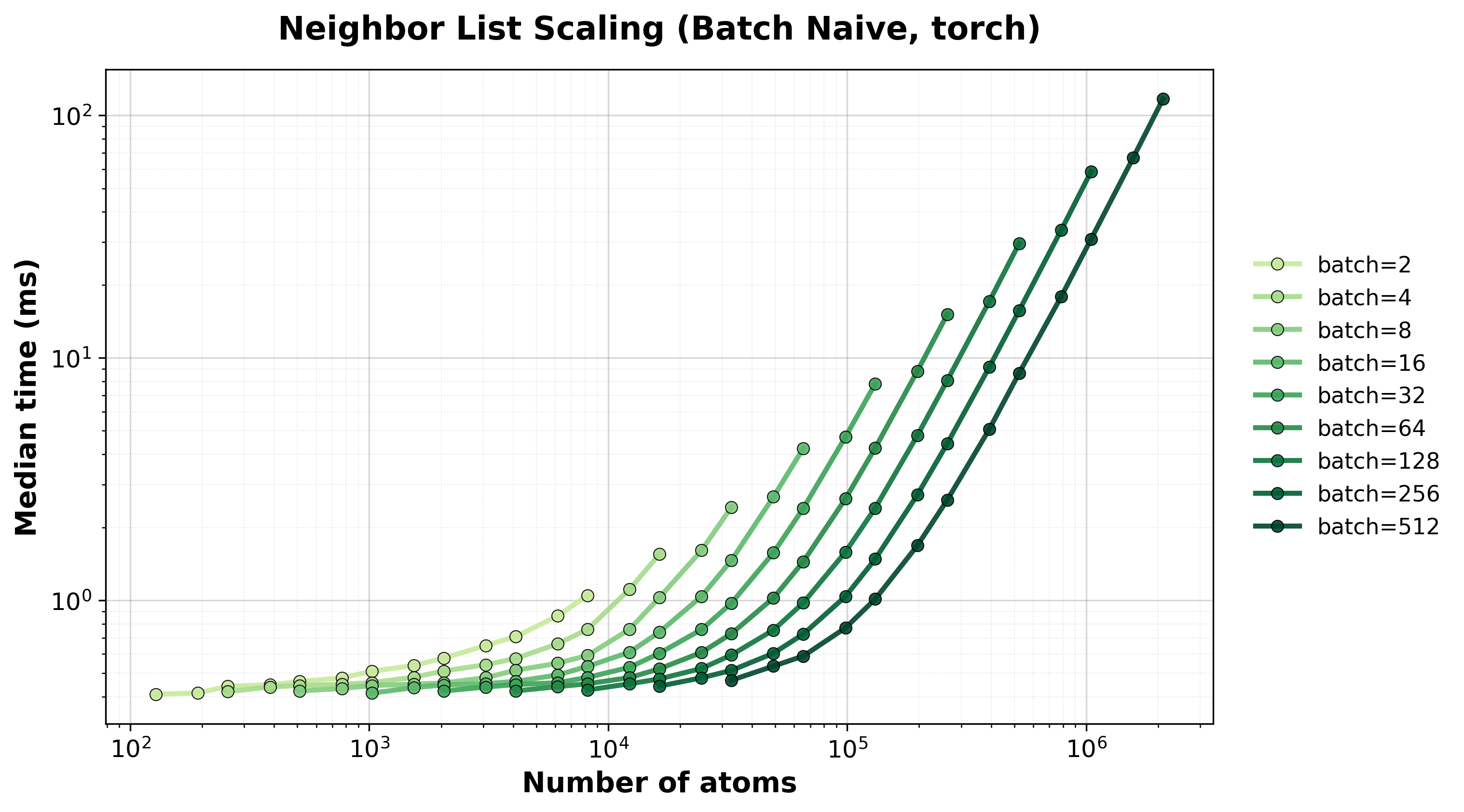

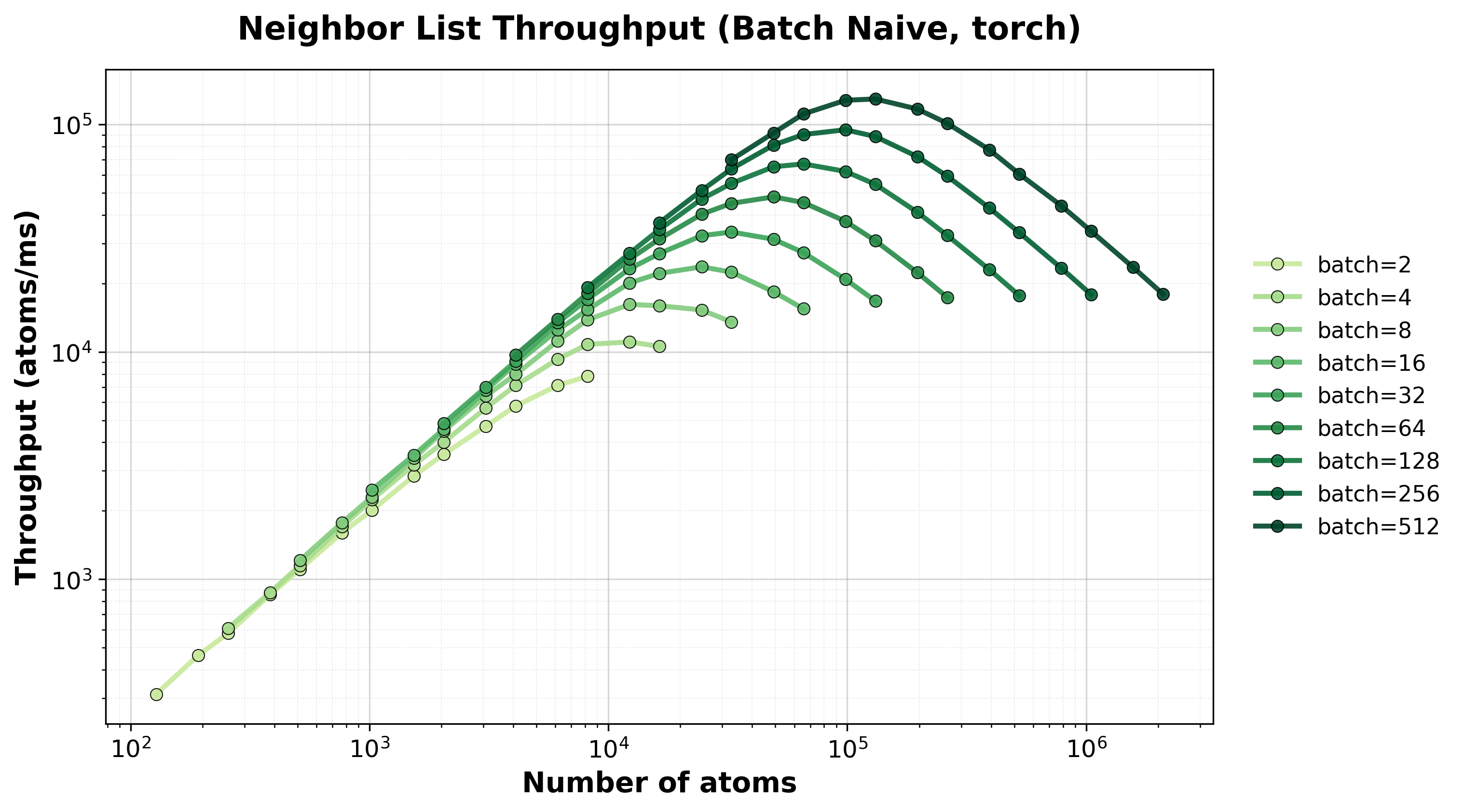

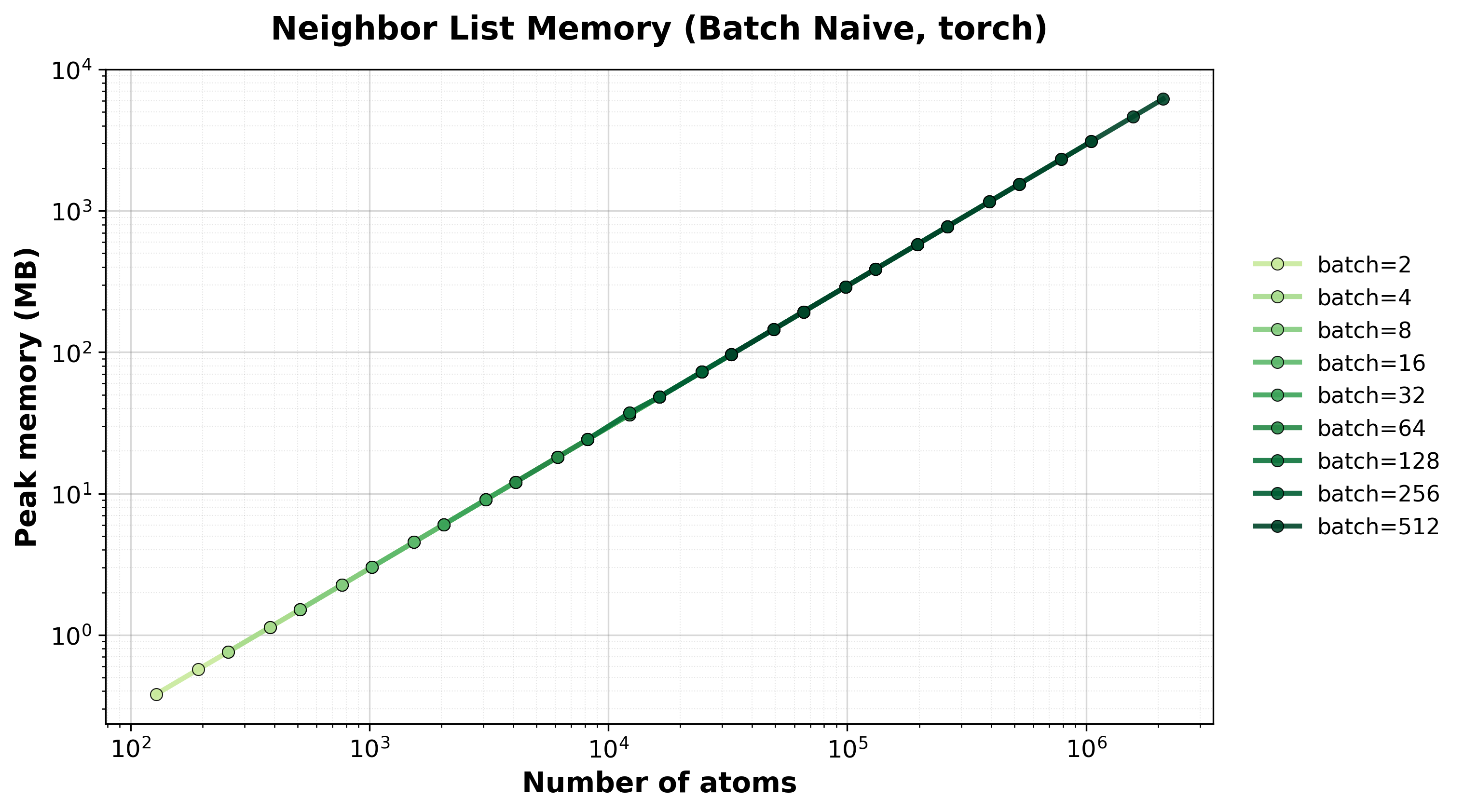

Batch Naive

Batched brute-force algorithm for processing multiple small systems simultaneously. Useful for ML workflows with many small molecules.
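One way to picture the batched variant is to concatenate the atoms of all systems into one array and restrict the pair search to atoms of the same system. The sketch below assumes a hypothetical layout in which systems are stored contiguously and described by a list of per-system atom counts. The actual nvalchemiops data layout is not described on this page.

```python
import math


def batched_naive_neighbor_pairs(positions, atoms_per_system, cutoff):
    """Brute-force pairs (i, j), i < j, restricted to atoms of the same system.

    `positions` holds all systems back to back; `atoms_per_system` gives the
    number of atoms in each one (an assumed layout, for illustration only).
    Indices in the returned pairs refer to the concatenated array.
    """
    pairs = []
    start = 0
    for count in atoms_per_system:
        stop = start + count
        for i in range(start, stop):
            for j in range(i + 1, stop):
                if math.dist(positions[i], positions[j]) <= cutoff:
                    pairs.append((i, j))
        start = stop
    return pairs


# Two small systems that overlap in space but must not see each other.
positions = [
    (0.0, 0.0, 0.0), (1.0, 0.0, 0.0),                   # system 0
    (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0),  # system 1
]
print(batched_naive_neighbor_pairs(positions, [2, 3], cutoff=1.5))
# [(0, 1), (2, 3), (3, 4)]
```

The cost is the sum of the per-system quadratic costs rather than the square of the total atom count, which is why batching suits workloads made of many small molecules.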

Comparison of single-system (non-batched) computations between backends as the system size increases.

Time Scaling

Median execution time comparison between backends.

Throughput

Throughput (atoms/ms) comparison between backends.

Memory Usage

Peak GPU memory consumption comparison between backends.

Scaling of the batched naive algorithm with the nvalchemiops Torch backend, showing how performance changes with batch size.

Time Scaling

Execution time scaling for different batch sizes.

Throughput

Throughput (atoms/ms) for different batch sizes.

Memory Usage

Peak GPU memory consumption for different batch sizes.

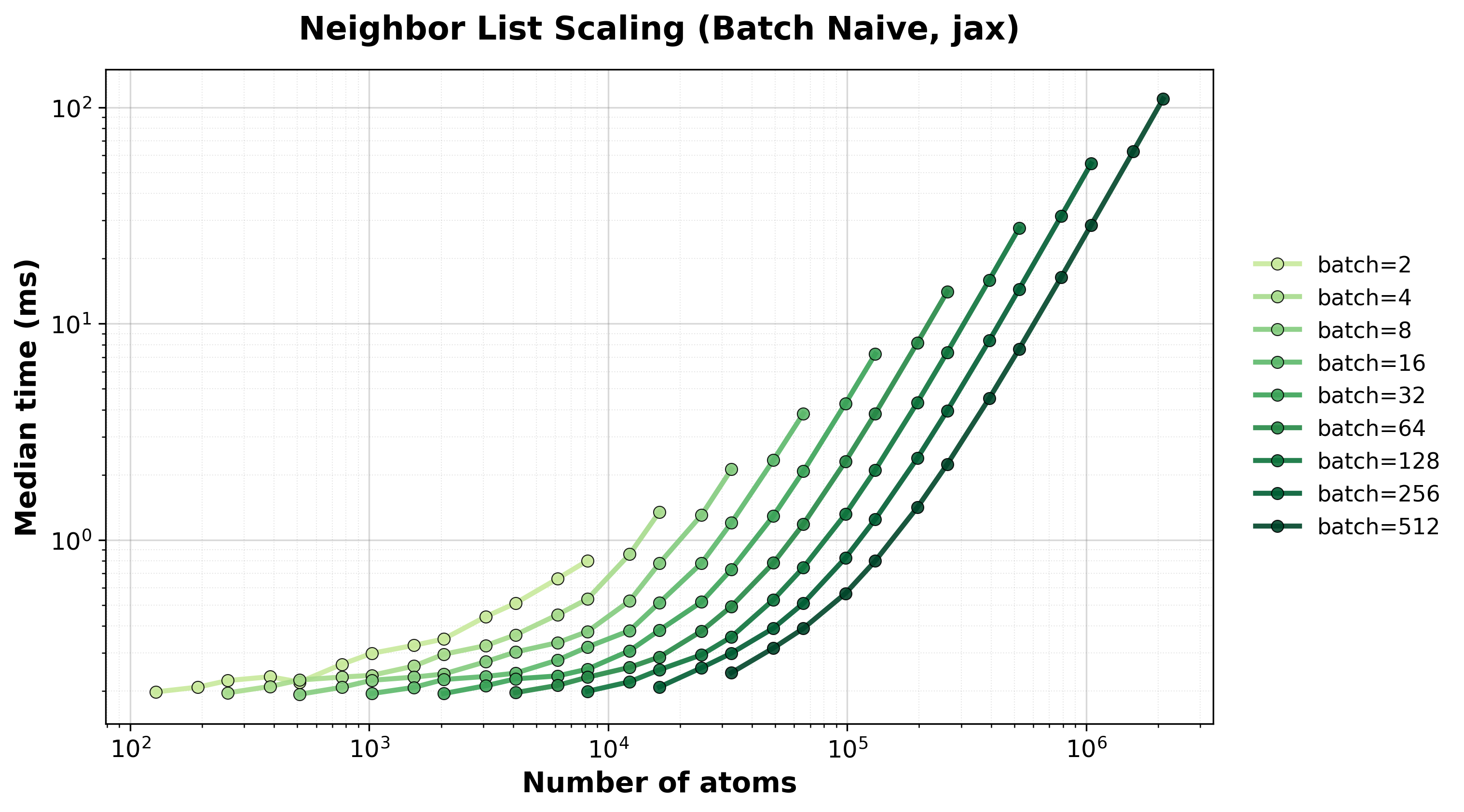

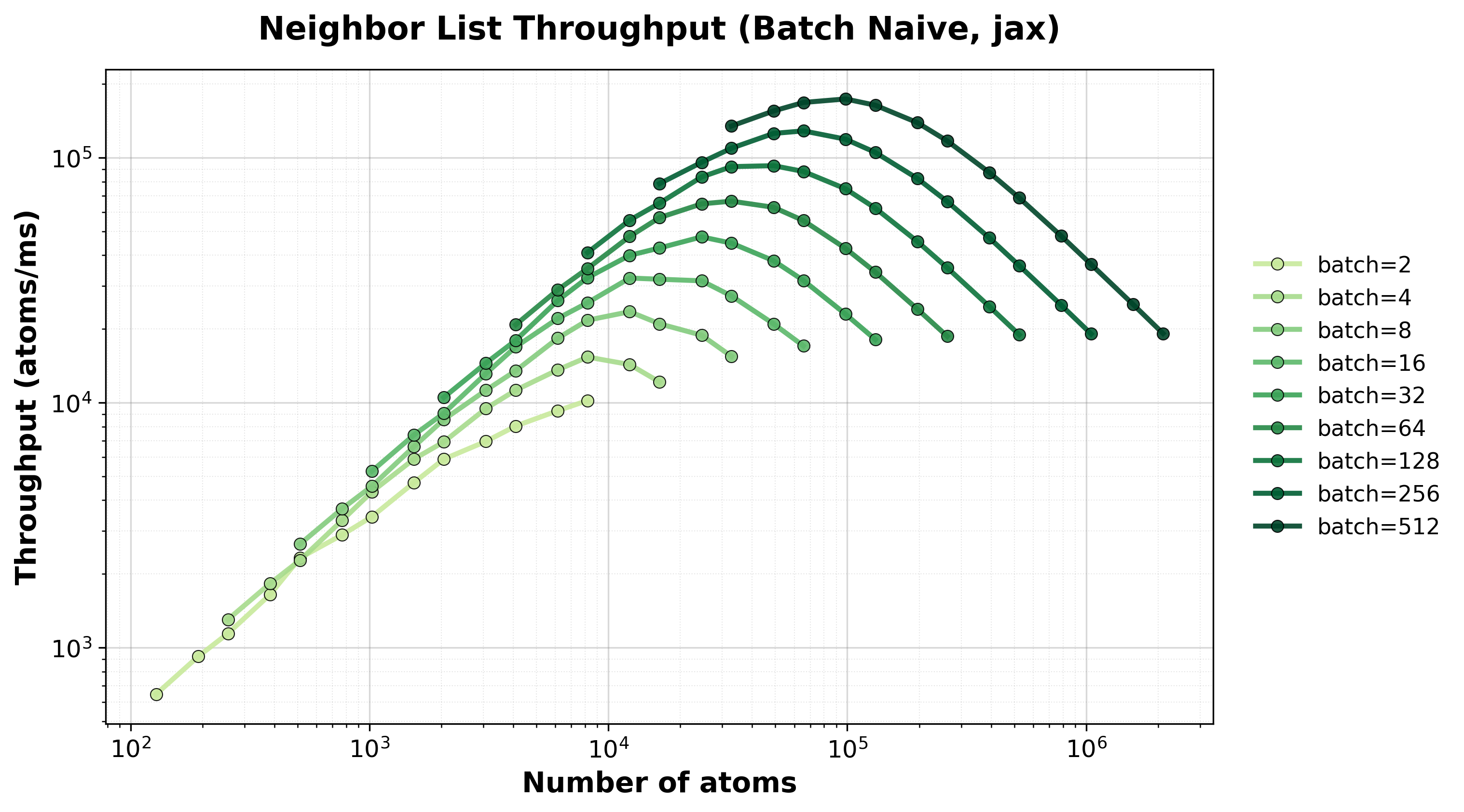

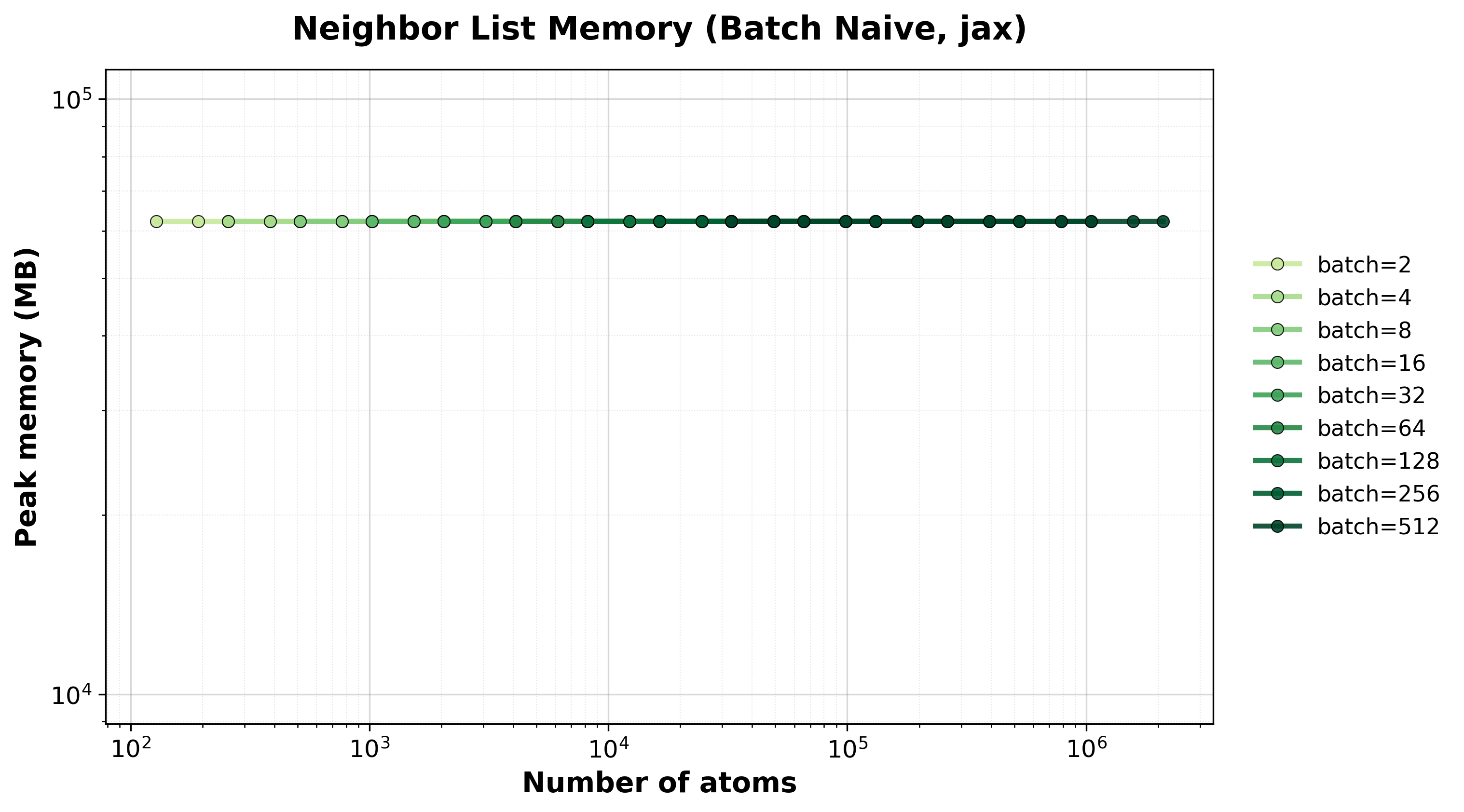

Scaling of the batched naive algorithm with the nvalchemiops JAX backend, showing how performance changes with batch size.

Time Scaling

Execution time scaling for different batch sizes.

Throughput

Throughput (atoms/ms) for different batch sizes.

Memory Usage

Peak GPU memory consumption for different batch sizes.

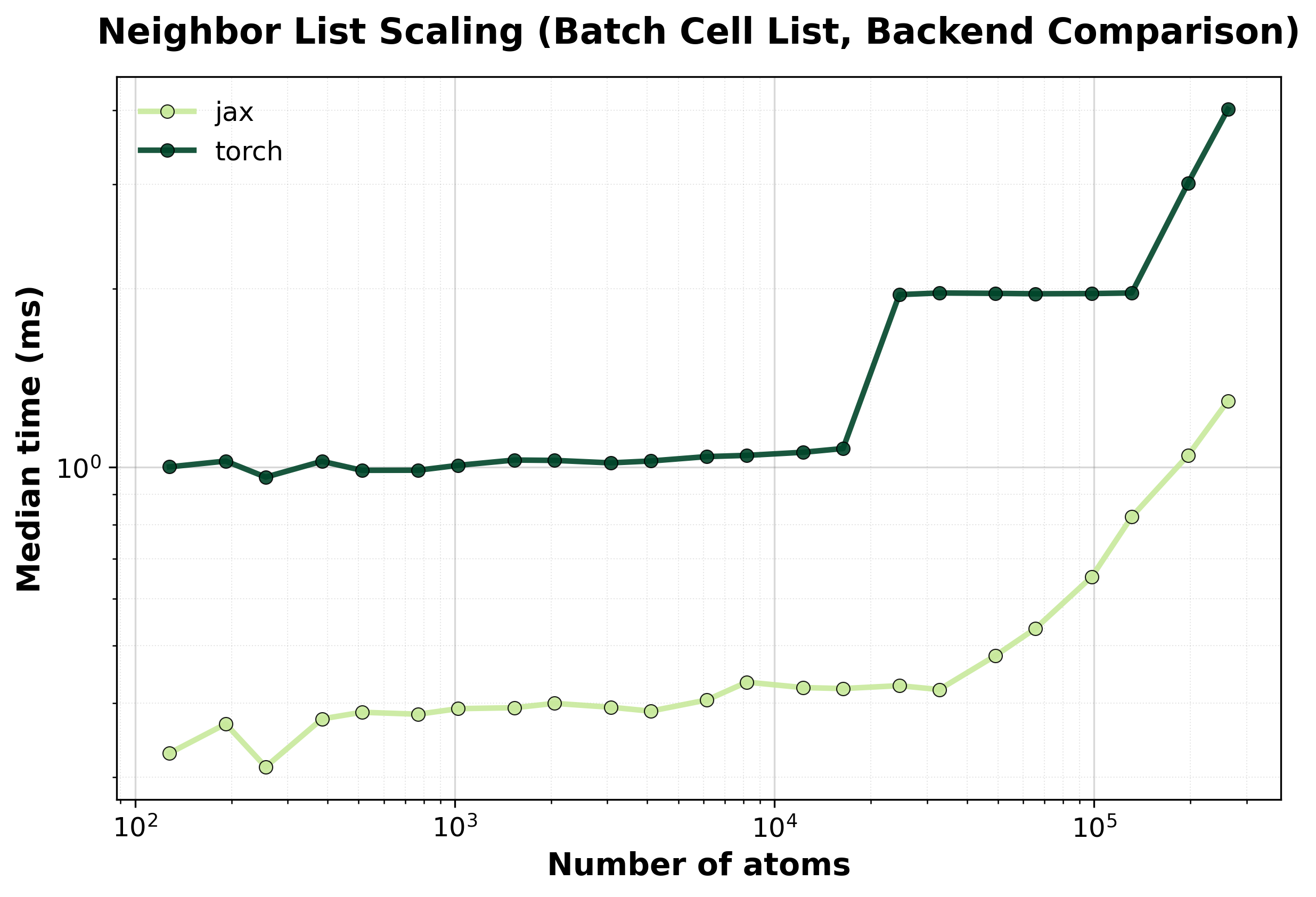

Batch Cell List

Batched spatial hashing algorithm for processing multiple systems simultaneously with \(O(N)\) scaling per system.

Comparison of single-system (non-batched) computations between backends as the system size increases.

Time Scaling

Median execution time comparison between backends.

Throughput

Throughput (atoms/ms) comparison between backends.

Memory Usage

Peak GPU memory consumption comparison between backends.

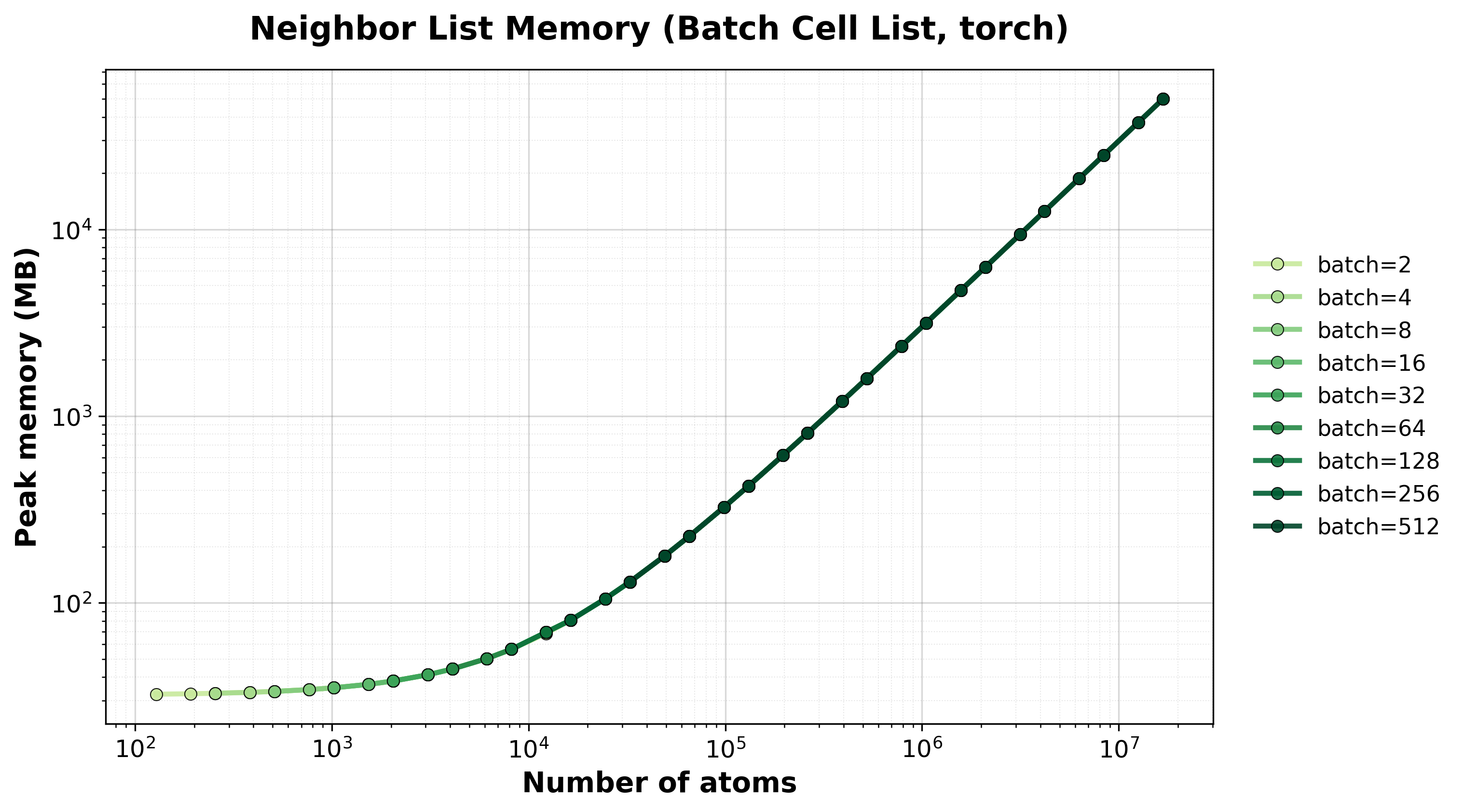

Scaling of the batched cell list algorithm with the nvalchemiops Torch backend, showing how performance changes with batch size.

Time Scaling

Execution time scaling for different batch sizes.

Throughput

Throughput (atoms/ms) for different batch sizes.

Memory Usage

Peak GPU memory consumption for different batch sizes.

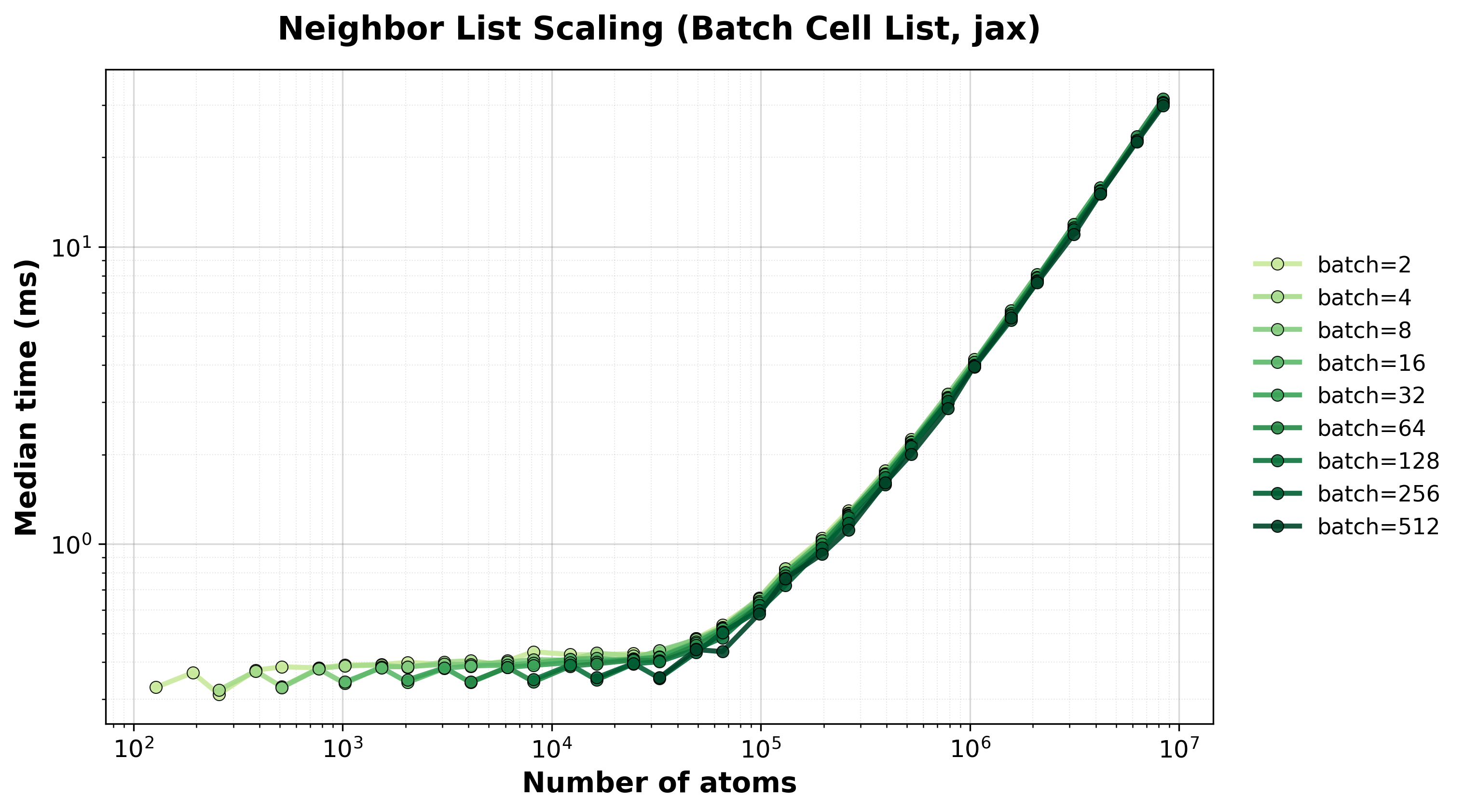

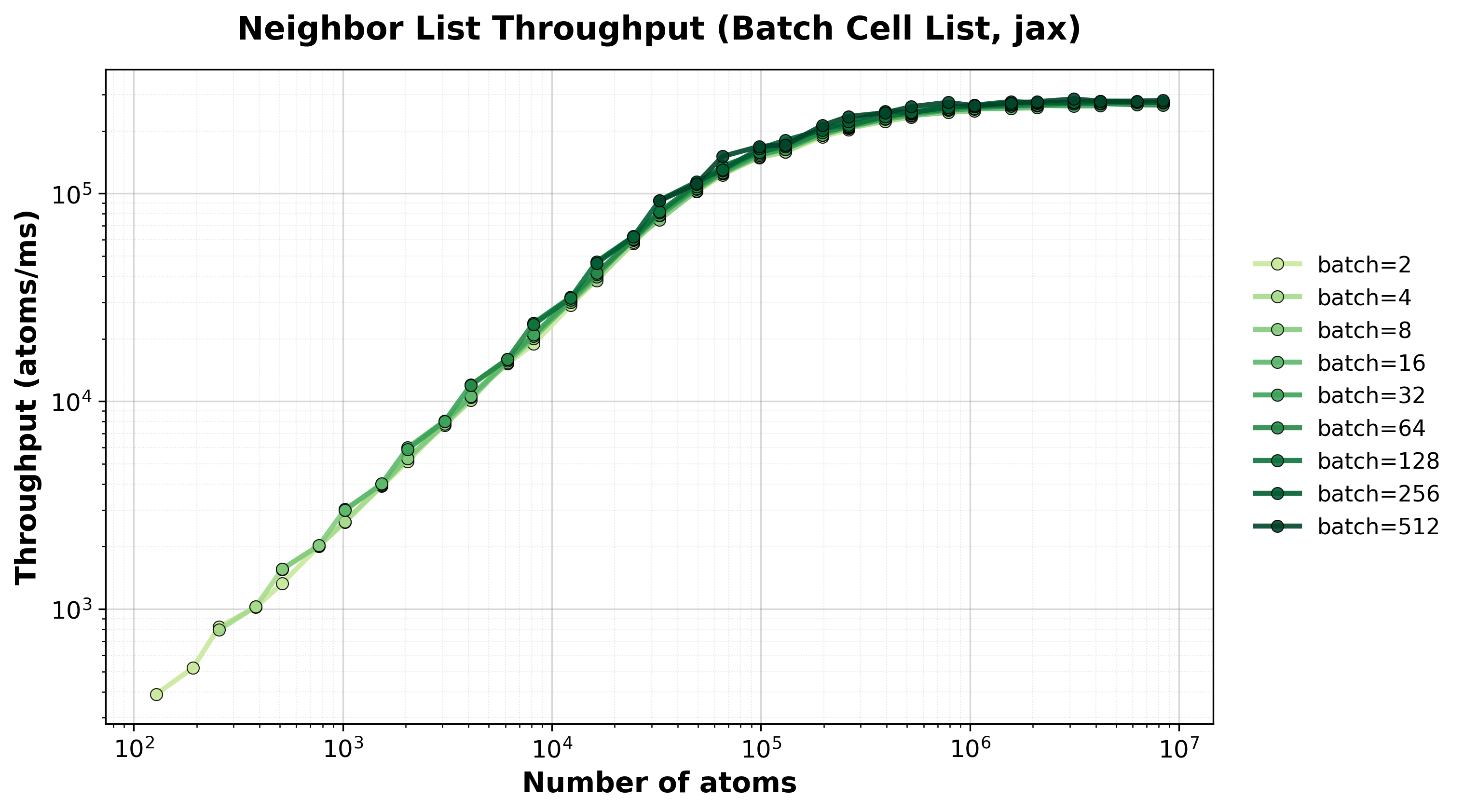

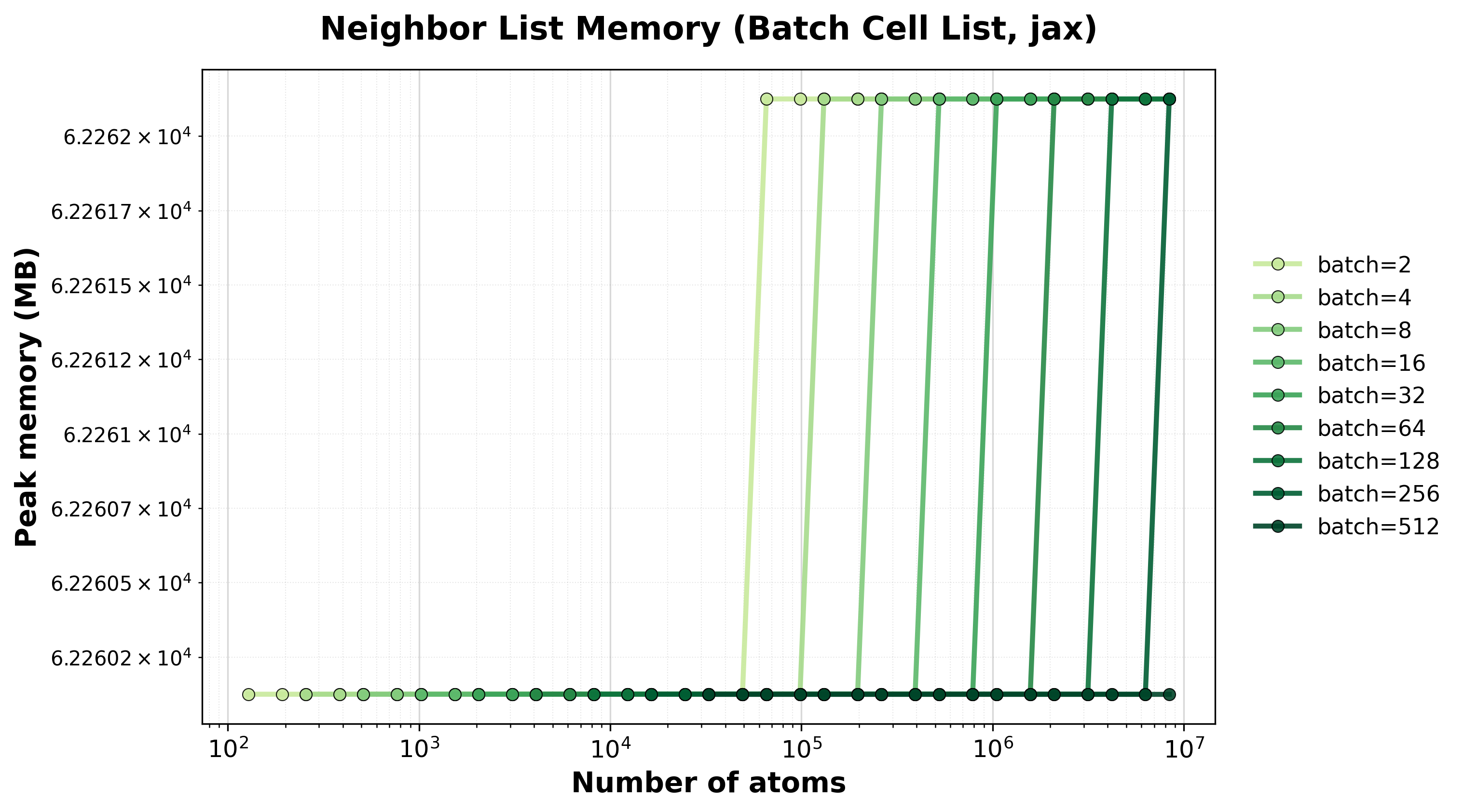

Scaling of the batched cell list algorithm with the nvalchemiops JAX backend, showing how performance changes with batch size.

Time Scaling

Execution time scaling for different batch sizes.

Throughput

Throughput (atoms/ms) for different batch sizes.

Memory Usage

Peak GPU memory consumption for different batch sizes.

Hardware Information

GPU: NVIDIA H100 80GB HBM3

Benchmark Configuration

| Parameter | Value |
|---|---|
| Cutoff | 5.0 Å |
| System Type | FCC crystal lattice |
| Warmup Iterations | 3 |
| Timing Iterations | 10 |
| Dtype | |
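The warmup and timing iteration counts above suggest a measurement loop along the following lines: a few untimed calls, then a fixed number of timed calls summarized by their median. This is a hedged sketch of that pattern, not the code in benchmark_neighborlist.py. The helper name `median_time_ms` is hypothetical, and it times a CPU callable with a wall clock.

```python
import statistics
import time


def median_time_ms(fn, warmup=3, iterations=10):
    """Run `fn` `warmup` times untimed, then return the median of
    `iterations` timed runs, in milliseconds."""
    for _ in range(warmup):
        fn()  # absorbs one-off costs such as compilation or cache fills

    samples_ms = []
    for _ in range(iterations):
        start = time.perf_counter()
        fn()
        samples_ms.append((time.perf_counter() - start) * 1e3)
    return statistics.median(samples_ms)


# Stand-in CPU workload. For GPU kernels, `fn` would also have to wait for
# the device to finish, otherwise only the launch overhead is measured.
elapsed = median_time_ms(lambda: sum(i * i for i in range(10_000)))
print(f"{elapsed:.3f} ms")
```

The median is less sensitive than the mean to occasional slow iterations, which makes it a reasonable summary for a small number of timing runs.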

Interpreting Results

method: Algorithm name.

total_atoms: Total number of atoms in the system.

atoms_per_system: Atoms per system (relevant for batch methods).

total_neighbors: Total number of neighbor pairs found.

batch_size: Number of systems processed simultaneously (1 for non-batch methods).

median_time_ms: Median execution time in milliseconds (lower is better).

peak_memory_mb: Peak GPU memory usage in megabytes.
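Assuming the fields above appear as column headers in the result CSV files, a short script can derive the throughput metric and the average neighbor count per atom. The sketch below reads from an in-memory string so that it is self-contained. The rows, including the method names, are placeholder values invented for illustration and are not benchmark results.

```python
import csv
import io

# Placeholder rows with made-up numbers (NOT real benchmark output).
SAMPLE_CSV = """method,total_atoms,atoms_per_system,total_neighbors,batch_size,median_time_ms,peak_memory_mb
naive,1000,1000,50000,1,2.0,10.0
cell_list,1000,1000,50000,1,0.5,12.0
"""


def load_rows(handle):
    """Parse benchmark rows and add derived per-row metrics."""
    rows = []
    for row in csv.DictReader(handle):
        total_atoms = int(row["total_atoms"])
        median_ms = float(row["median_time_ms"])
        rows.append(
            {
                "method": row["method"],
                "batch_size": int(row["batch_size"]),
                "total_atoms": total_atoms,
                "median_time_ms": median_ms,
                "peak_memory_mb": float(row["peak_memory_mb"]),
                # Throughput as plotted on this page: atoms per millisecond.
                "atoms_per_ms": total_atoms / median_ms,
                "neighbors_per_atom": int(row["total_neighbors"]) / total_atoms,
            }
        )
    return rows


rows = load_rows(io.StringIO(SAMPLE_CSV))
for r in rows:
    print(r["method"], r["atoms_per_ms"], r["neighbors_per_atom"])
```

To use it on real output, pass an opened file in place of the `io.StringIO` object, for example `open(path, newline="")`, and adjust the column names if your files differ.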

Running Your Own Benchmarks

To generate benchmark results for your hardware:

    cd benchmarks/neighborlist
    python benchmark_neighborlist.py \
        --config benchmark_config.yaml \
        --output-dir ../../docs/benchmarks/benchmark_results

Results will be saved as CSV files, and plots will be automatically generated during the next documentation build.