Overview

NCX Infra Controller (NICo) is an API-based microservice that provides site-local, zero-trust bare-metal lifecycle management with DPU-enforced isolation, allowing for deployment of multi-tenant AI infrastructure at scale. NICo enables zero-touch automation and ensures the integrity and separation of workloads at the bare-metal layer.

NICo Operational Principles

NICo has been designed according to the following principles:

- The machine is untrustworthy.

- Operating system requirements are not imposed on the machine.

- After being racked, machines must become ready for use with no human intervention.

- All monitoring of the machine must be done using out-of-band methods.

- The network fabric (i.e. Leaf Switches and routers) stays static even during tenancy changes within NICo.

NICo Responsibilities

NICo is responsible for the following tasks in the data-center environment:

- Maintain hardware inventory of ingested machines.

- Integrate with RedFish APIs to manage usernames and passwords

- Perform hardware testing and burn-in.

- Validate and update firmware.

- Allocate IP addresses (IPv4).

- Control power (power on/off/reset).

- Provide DNS services for managed machines.

- Orchestrate provisioning, wiping, and releasing nodes.

- Ensure trust of the machine when switching tenants.

Responsibilities not Covered

NICo is not responsible for the following tasks:

- Configuration of services and software running on managed machines.

- Cluster assembly (that is, it does not build SLURM or Kubernetes clusters)

- Underlay network management

NICo Components and Services

NICo is a service with multiple components that drive actions based on API calls, which can originate from users or as events triggered by machines (e.g. a DHCP boot or PXE request).

Each service communicates with the NICo API server over gRPC using protocol buffers. The API uses gRPC reflection to provide a machine readable API description so clients can auto-generate code and RPC functions in the client.

The NICo deployment includes a number of services:

- NICo API service: Allows users to query the state of all objects and to request creation, configuration, and deletion of entities.

- DHCP: Provides IPs to all devices on underlay networks, including Host BMCs, DPU BMCs, and DPU OOB addresses. It also provides IPs to Hosts on the overlay network.

- PXE: Delivers images to managed hosts at boot time. Currently, managed hosts are configured to always boot from PXE. If a local bootable device is found, the host will boot it. Hosts can also be configured to always boot from a particular image for stateless configurations.

- Hardware health: Pulls

hardware health and configuration information emitted from a Prometheus

/metricsendpoint on port 9009 and reports that state information back to NICo. - SSH console: Provides a virtual serial

console logging and access over

ssh, allowing console access to remote machines deployed on site. Thessh-consolealso logs the serial console output of each host into the logging system, where it can be queried using tools such as Grafana andlogcli. - DNS: Provides domain name service (DNS) functionality

using two services:

carbide-dns: Handles DNS queries from the site controller and managed nodes.unbound: Provides recursive DNS services to managed machines and instances.

Component and Service Dependencies

In addition to the NICo service components, there are other supporting services that must be set up within the K8s site controller nodes.

Site Management

- The entry point for the managed site is through the Elektra site agent. The site agent maintains a northbound Temporal connection to the cloud control plane for command and control.

- The admin CLI provides a command line interface into NICo.

Kubernetes

Some site controller node services require persistent, durable storage to maintain state for their attendant pods:

- Hashicorp Vault: Used by Kubernetes for certificate signing requests (CSRs), this vault

uses three each (one per K8s control node) of the

data-vaultandaudit-vault10GB PVs to protect and distribute the data in the absence of a shared storage solution. - Postgres: This database is used to store state for any NICo or site controller

components that require it, including the main "forgedb". There are three 10GB

pgdataPVs deployed to protect and distribute the data in the absence of a shared storage solution. Theforgedbdatabase is stored here. - Certificate Management Infrastructure: This is a set of components that manage the certificates for the site controller and managed hosts.

Managed Hosts

The point of having a site controller is to administer a site that has been populated with managed hosts.

Each managed host is a pairing of a single Bluefield (BF) 2/3 DPU and a host server.

During initial deployment, the scout service runs, informing the NICo API of any discovered DPUs. NICo completes the installation of services on the DPU and boots into regular operation mode. Thereafter, the dpu-agent starts as a daemon.

Each DPU runs the dpu-agent which connects via gRPC to the API service in NICo to get configuration

instructions.

Metrics and Logs

NICo collects metrics and logs from the managed hosts and the site controller. This information is in Prometheus format and can be scraped by a Prometheus server.

Hardware Compatibility List

This Hardware Compatibility List (HCL) is provided for reference purposes only. Systems listed here have been unit tested or exercised internally in limited scenarios. Inclusion in this list does not imply qualification, certification, or support, and does not represent a commitment to ongoing compatibility. For specific hardware support inquiries or technical specifications, please contact the original hardware vendor.

Hosts

Last Updated: 2/20/2026

| Host Machine | BMC/Management Firmware Version | BIOS/UEFI Version | Misc. Firmware Version (FPGA, CPLD, LXPM, etc.) |

|---|---|---|---|

| GB200 NVL - Wiwynn | 25.06-2_NV_WW_02 | 1.3.2GA | 1.3.2GA |

| NVSwitch Tray - Wiwynn | 1.3.2GA | 1.3.2GA | 1.3.2GA |

| GB200 Compute Tray (1RU) | 1.3.2GA | 1.3.2GA | 1.3.2GA |

| NVSwitch Tray DGX | 1.3.2GA | 1.3.2GA | 1.3.2GA |

| DGX H100 | 25.06.27 (DGXH100_H200_25.06.4 pkg) | 1.06.07 (DGXH100_H200_25.06.4 pkg) | |

| Lenovo ThinkSystem SR670 V2 | 6.10 | 3.30 | 3.31.01 |

| Lenovo ThinkSystem SR675 V3 | 14.10 | 8.30 | 4.20.03 |

| Lenovo ThinkSystem SR675 V3 OVX* | 14.10 | 8.30 | 4.20.03 |

| Lenovo ThinkSystem SR650 | 10.40 | 4.30 | 2.13 |

| Lenovo ThinkSystem SR650 V3 | 6.92 | 3.70 | 4.21.01 |

| Lenovo ThinkSystem SR650 V2 | 5.70 | 3.60 | 3.31.01 |

| Lenovo ThinkSystem SR650 V2 OVX* | 5.70 | 3.60 | 3.31.01 |

| Lenovo ThinkSystem SR655 V3 | 5.80 | 5.70 | 4.20.03 |

| Lenovo ThinkSystem SR655 V3 OVX* | 5.80 | 5.70 | 4.20.03 |

| Lenovo ThinkSystem SR665 V3 OVX* | 5.80 | 5.70 | 4.20.03 |

| Lenovo SR650 V4 | 1.90 | 1.30 | 5.03.00 |

| Lenovo HS350X V3 | 1.20 | 2.17.0 | |

| Dell PowerEdge XE9680 | iDRAC 7.20.60.50 | 2.7.4 | 1.6.0 |

| Dell PowerEdge R750 | iDRAC 7.20.60.50 | 1.18.1 | 1.1.1 |

| SYS-221H-TNR | 1.03.18 | 2.7 | SAA Ver = 1.3.0-p7 |

| Dell PowerEdge R760 | iDRAC 7.20.60.50 | 2.7.5 | 1.2.6 |

| ARS-121L-DNR | 01.08.02 / 01.03.16 (LCC) | 2.2a / 2.0 (LCC) | SAA Ver = 1.2.0-p6 / SUM = 2.14.0-p6 (LCC) |

| SYS-221H-TN24R | X1.05.10 | 2.7 | SAA Ver = 1.3.0-p5 |

| ARS-221GL-NR | 1.03.16 | 2.0 | |

| HPE ProLiant DL385 Gen10 Plus v2 | 3.15 | 3.80_09-05-2025 | |

| DL380 Gen12 | 1.20.00 | 1.62_02-06-2026 | |

| SSG-121E-NES24R | 01.04.19 | 2.7 | SAA Ver = 1.3.0-p1 |

| SYS-121H-TNR | X1.05.10 | 2.7 | SAA Ver = 1.3.0-p5 |

| SYS-821GE-TNHR | 1.03.18 | 2.7 | SAA Ver = 1.3.0-p7 |

| Dell R760xd2 | iDRAC 7.20.80.50 | 2.9.4 | 1.1.2 |

| Dell R670 | iDRAC 1.20.80.51 | 1.7.5 | |

| Dell R770 | iDRAC 1.20.80.51 | 1.7.5 | |

| SYS-421GE-TNRT | 1.03.19 | 2.6 | SAA Ver = 1.2.0-p8 |

| Dell PowerEdge R640 | iDRAC 7.00.00.182 | 2.24.0 | 1.0.6 |

* OVX may not show up as an option; check the Server Serial Number to confirm.

Hosts -- Under Development

This list outlines platforms that are under development and have not undergone full unit testing.

| Host Machine | BMC/Management Firmware Version | BIOS/UEFI Version | Provisioning Manager Version |

|---|---|---|---|

| Lenovo GB300 Compute Tray | 3.0.0 | 1.0.0GA | 1.0.0GA |

DPUs

| DPU | Firmware / Software Version |

|---|---|

| Bluefield-2 | DOCA 3.2.0 |

| Bluefield-3 | DOCA 3.2.0 |

Release Notes

This document contains release notes for the NCX Infra Controller (NICo) project.

Bare Metal Manager 0.2.0

This release of Bare Metal Manager is open-source software (OSS).

Improvements

- The REST API now supports external identity providers (IdPs) for JWT authentication.

- The new

/carbide/instance/batchREST API endpoint allows for batch instances creation. - Instances can now be rebooted by passing an

instance_idargument, in addition to the existingmachine_idargument. - The State Controller is now split into two independent components: The

PeriodicEnqueuer, which periodically enqueues state handling tasks using theEnqueuer::enqueue_objectAPI for each resource/object managed by NICo, and theStateProcessor, which continuously de-queues the state handling tasks for each object type and executes the state handler on them. - The state handler for objects is now scheduled again whenever the outcome of the state handler is

Transition. This reduces the wait time for many state transitions by up to 30 seconds. - The state handler is now re-scheduled for immediate execution if the DPU reports a different version from the previous check. This should reduce the time for wait states like

WaitingForNetworkConfig. - During the pre-ingestion phase, NICo will now set the time zone to UTC if it detects that time is out of sync. This allows the system to correctly interpret NTP timestamps from the time server.

- The Scout agent can now perform secure erase of NVMe devices asynchronously.

- NVLink interfaces are now marked as Pending when an update request is being sent.

- The update logic for NVLink Logical Partition inventory metadata has been improved.

- The

DpuExtensionServicenow supportsnameas an argument for theorderByparameter. - NICo now supports bulk creation/update of

ExpectedMachineobjects. - The Go version has been updated to v1.25.4.

- The

nv-redfishpackage has been updated to v0.1.3.

Bug Fixes

- The above

nv-redfishpackage update fixes a critical bug with the BMC cache, which caused multiple cache miss errors, preventing the health monitor from re-discovery of monitored entities.

Bare Metal Manager EA

What This Release Enables

- Microservice: Our goal is to make NICo deployable and independent of NGC dependencies, enabling a "Disconnected NICo" deployment model.

- GB200 Support: This release enables GB200 Node Ingestion and NVLink Partitioning, with the ability to provision both single and dual DPUs, ingest the GB200 compute trays, and validate the SKU. After ingestion, partners can create NVLink partitions, select instances, and configure the NVLink settings using the Admin CLI.

- Deployment Flexibility: The release includes both the source code and instructions to compile containers for NICo. Our goal is to make the NICo deployable and independent of NGC dependencies, enabling a "Disconnected NICo" deployment model.

What You Can Test

The following key functionalities should be available for testing via the Admin CLI:

- GB200 Node Ingestion: Partners should be able to:

- Install NICo.

- Provision the DPUs (Dual DPUs are also supported).

- Ingest the expected machines (GB200 compute trays).

- Validate the SKU.

- Assign instance types (Note that this currently requires encoding the rack location for GB200).

- NVLink Partitioning: Once the initial ingestion is complete, partners can do the following:

- Create allocations and instances.

- Create a partition.

- Select an instance.

- Set the NVLink configuration.

- Disconnected NICo: This release allows for operation without any dependency on NGC.

Dependencies

| Category | Required Components | Description |

|---|---|---|

| Software | Vault, postgres, k8s cluster, Certificate Management, Temporal | Partners are required to bring in NICo dependencies |

| Hardware | Supported server and switch functionality(e.g. x86 nodes, specific NIC firmware, compatible BMCs, Switches that support BGP, EVPN, and RFC 5549 (unnumbered IPs)) | The code assumes predictable hardware attributes; unsupported SKUs may require custom configuration. |

| Network Topology | L2/L3 connectivity, DHCP/PXE servers, out-of-band management networks, specific switch side port configurations | All modules (e.g. discovery, provisioning) require pre-configured subnets and routing policies, as well as delegation of IP prefixes, ASN numbers, and EVPN VNI numbers. |

| External Systems | DNS resolvers/recursers, NTP, Authentication (Azure OIDC, Keycloak), Observability Stack | NICo provides clients with DNS resolver and NTP server information in the DHCP response. External authentication source that supports OIDC. NICo sends open-telemetry metrics and logs into an existing visualization/storage system |

Supported Switches:

- Optics Compatibility w/B3220 BF-3

- RFC5549 BGP Unnumbered routed ports

- IPv4/IPv6 Unicast BGP address family

- EVPN BGP address family

- LLDP

- BGP External AS

- DHCP Relay that supports Option 82

FAQs

This document contains frequently asked questions about NCX Infra Controller (NICo).

Does NICo install Cumulus Linux onto ethernet switches?

No, NICo does not install Cumulus Linux onto Ethernet switches.

Does NICo install UFM?

No, NICo does not install UFM, it is a dependency. NICo leverages existing UFM deployments for InfiniBand partition management via the UFM API using pkey.

Does NICo manage Infiniband switches in standalone mode (i.e. without UFM)?

No, NICo does not manage Infiniband switches in standalone mode. It requires UFM for InfiniBand partitioning and fabric management. NICo calls UFM APIs to assign partition keys (P_Keys) for isolation.

Does NICo maintain the database of the tenancy mappings of servers and ports?

NICo stores the owner of each instance in the form of a tenant_organization_id that is passed during instance creation.

Does NICo speak to NetQ to learn about the network?

No, the NICo does not speak to NetQ.

Does NICo install DPU OS?

Yes, NICo installs the DPU OS, including all DPU firmware (BMC, NIC, UEFI). NICo also deploys HBN, a containerized service that packages the same core networking components (FRR, NVUE) that power Cumulus Linux.

Does NICo bring up NVLink?

No, NICo does not bring up NVLink. However, NICo manages NVLink partitions through NMX-M APIs. Plans to manage NVLink switches are being evaluated.

Does NICo support NVLink partitioning?

Yes, NICo supports NVLink partitioning.

How does NICo maintain tenancy enforcement between Ethernet (N/S), Infiniband (E/W), NVLink (GPU-to-GPU) networks?

- Ethernet: VXLAN with EVPN for VPC creation on DPU

- E/W Ethernet (Spectrum-X): CX-based FW called DPA to do VXLan on CX (as part of future release)

- Infiniband: UFM-based partition key (P_Key) assignment

- NVLInk: NMX-M based partition management

DPUs enforce Ethernet isolation in hardware, UFM enforces IB isolation, and NMX-M enforces NVLink isolation--all coordinated by NICo.

When NICo is used to maintain tenancy enforcement for Ethernet (N/S), does it require access to make changes to SN switches running Cumulus or are all changes limited to HBN on the DPU?

Ethernet tenancy enforcement is limited to HBN (Host-Based Networking) on the DPU and does not require NICo to make changes to Spectrum (SN) switches running Cumulus Linux. NICo expects the switch configuration to provide BGP speakers on the Switches that speak IPv4 Unicast and L2/L3 EVPN address families, and “BGP Unnumbered” (RFC 5549)

When NICo is used to maintain tenancy enforcement for Ethernet and hosts are presented to customers as bare metal, is OOB isolation of GPU/CPU host BMC managed as well or only the N/S overlay running on DPU?

NICo configures the host BMC to disable connectivity from within the host to the BMC (e.g. Dell iDrac Lockdown, disabling KCS, etc), and also prevents access from the host (via network) to the BMC of the host. Effectively, the user cannot access the BMC of the bare metal hosts. The BMC console (Serial console) is accessed by a user through a NICo service called SSH console that does Authentication and Authorization that the user accessing the console is the current owner of the machine.

Can NICo be used to manage a portion of a cluster?

NICo requires the N/S and OOB Ethernet DHCP relays pointed to the NICo DHCP service as well as access to UFM and NMX-M for E/W. Additionally, the EVPN topology must be visible to all nodes that are managed by the same cluster. If the DC operator wants to separate EVPN/DHCP into VLANs and VRFs, then you can arbitrarily assign nodes to NICo management or not. NMX-M and UFM are not multi–tenant aware, so there's a possibility of two things configuring NMX-M and UFM from interfering with each other.

Can NICo be utilized for HGX platforms for host life cycle management?

Yes, in addition to DGX as well as OEM/ODM CPU-only, Storage, etc nodes.

Does NICo support installing an OS onto the servers? What OS’s are supported to install on NICo?

Yes, NICo supports OS installation onto servers through PXE & Image-based. Any OS can be installed via iPXE (http://ipxe.org) that iPXE supports. OS management (patching, configuration, image generation) is the user’s responsibility.

What is the way to communicate with NICo? Does it expose an API? Does it have a shell interface?

NICo exposes an API interface & authentication through JWT tokens or IdP integration (keycloak). There is also an admin-facing CLI & debugging/Engineering UI.

Where is NICo run? Is it a container/microservice? Is it a single container or a collection deployed via Helm?

NICo commonly runs on a Kubernetes cluster (3 or 5 control plane nodes recommended), though there is no requirement to do so. NICo runs as a set of microservices for API, DNS, DHCP, Hardware Monitoring, BMC Console, Rack Management, etc. There is currently no helm chart for NICo deployment; it can be deployed with Kubernetes Kustomize manifests.

Should I use NICo as my OS installation tool?

NICo is more than an OS installation tool. It certainly helps with OS provisioning, but it's not the main use case for NICo. Automated Baremetal lifecycle management, network isolation & rack management are its key use cases. This includes hardware burn-in testing, hardware completeness validation, Measured Boot for Firmware integrity and ongoing automated firmware updates, and out-of-band continuous hardware management.

Do I need to change the OOB management TOR to configure a separate VLN for the NICo managed hosts and DPU (DPU OOB, Host OOB), with DHCP relay point to NICo DHCP?

Yes, that's usually how it's done. Each VLAN (sometimes the whole switch is a VLAN) - or SVI port - needs to have it's DHCP relay for the machines and DPUs you wish to manage with NICo pointing to NICo's DHCP server address you setup.

Do I need to change existing infrastructure if separate VLANs are used?

No, there is no need to change existing infrastructure if separate VLANs are used.

With only one RJ45 on BF3, the DPU inband IP addresses allocation is part of DPU loopback allocated by NICo. Does it assume that the same management switch also supports DPU SSH access and that the DPU ssh IP is allocated by NICo and only accessible inside the data center?

The IP addresses issued to the DPU RJ45 port are from the "network segments" (which is different than a DPU loopback) - the API in NICo is to create a Network Segment of type underlay on whatever the underlying network configuration is. NICo issues two IPs to the RJ45 - (1) is the DPU OOB that's used to SSH to the ARM OS and NICo's management traffic, and (2) the DPU's BMC that is used for Redfish and DPU configuration. There's also the host's BMC that needs to be also on a VLAN forwarding to the NICo DHCP relay.

The host overlay interfaces addresses on top of vxlan and DPU is allocated via NICo through the control NIC on NICo, through overlay networking. So I assume no DHCP relay configuration needed on any switches. While is this overlay need to be manually configured on NICo control hosts' NIC?

The DHCP relay is required only on the switches connected to the DPU OOBs/BMCs and Host BMCs. The in-band ToRs just need to be configured for bgp unnumbered as "routed port". The "overlay" networks that NICo will assign IPs from to the host are defined as "network segements" with the "overlay" type, then the overlay network is referenced when creating an instance.

Do I need to seperate the PXE of NICo like this as well to isolate the PXE installation process from site PXE server?

There is a separate PXE server that NICo needs to serve it's own images we ship as part of the software (i.e. DPU software, iPXE, etc). But if the DHCP is configured correctly and there's connectivity from the Host to the NICo PXE service, then it will be fine to live side-by-side.

How does NICo select which bare metal to pick to satisfy the request for an instance? What selection criteria is supported?

For the gRPC API, it doesn't, you pick the machine when calling "AllocateInstance" gRPC. For the REST API, it has a concept of resource allocations, so a tenant would get an allocation of some number of a type of machine and then when creating an instance against that instance type it'd randomly pick one. There's an API we're working on to do bulk allocations which will all get allocated on the same nvlink domain and another project to allocate by labels on the machine so you could choose machines in the same rack, etc.

How is NICo made aware of power management endpoints (BMC IP and credentials) for bare metal?

When you provision a NICo "site" you tell it which BMC subnets are provisioned on the network fabric, and then those subnets should be doing DHCP relaying to the NICo DHCP service. When a BMC requests an IP, NICo allocates one and then looks up in an "expected machine" table for the initial username and password for that BMC (it looks it up by mac address, which NICo cross-references with the DHCP lease). So you dont have to "pre-define" BMCs, but you do need to provide the initial mac address, username and password.

Are there APIs to query and debug DPU state?

DPUs will report health status (like if HBN is configured correctly, BGP peering, if the HBN container is running, that kind of thing) and heartbeat information, which version of the configuration has been applied; and also health checks for BMC-side health from the DPU's BMC for things like thermals and stuff.

This information is also visible in the admin web UI. Furthermore, you can SSH to the DPU and poke around if the issue isn't obvious using these methods.

Architecture

This page discusses the high level architecture of a site running NCX Infra Controller (NICo).

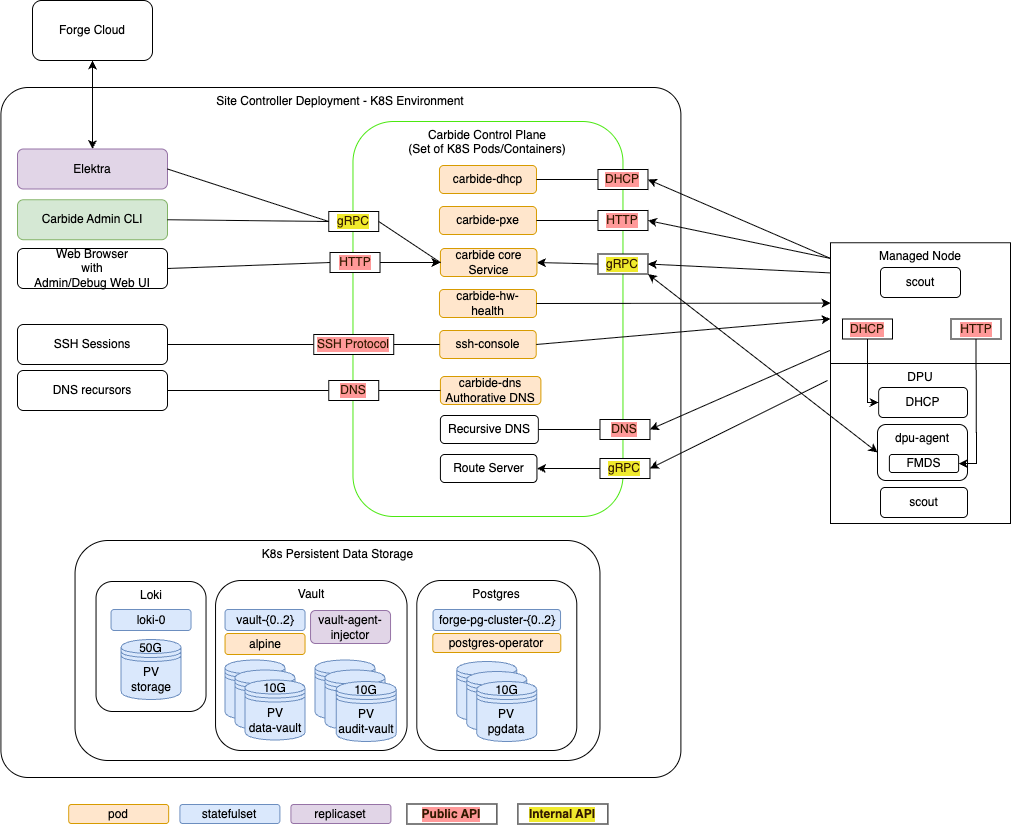

NICo orchestrates the lifecycle of "Managed Hosts" and other resources via set of cooperating control plane services. These control plane services have to be deployed to a Kubernetes cluster with a size of at least 3 nodes (for high availability).

The Kubernetes cluster needs to have variety of services deployed:

- The Carbide control plane services. These services are specific to Carbide, and must be deployed together in order to allow Carbide to manage the lifecyle of hosts.

- Dependency services. Carbide requires "off-the-shelf" dependencies like Postgres, Vault and telemetry services deployed and accessible.

- Optional services. A variety of services in tools within the deployment that interfact with the Carbide deployment, but are not required continuously for the control plane to operate.

The following chapters look at each of these in more detail.

Managed Hosts

A "Managed Host" is a host whose lifecycle is managed by Carbide.

The managed host consists of various internal components that are all part of the same chassis or tray:

- The actual x86 or ARM host, with an arbitrary amount of GPUs

- One or more DPUs (of type Bluefield 2 or Bluefield 3) plugged into the host

- The BMC that is used to manage the host

- The BMC that is used to manage the DPU

Carbide deploys a set of binaries on these hosts during various points of their lifecycle:

Scout

scout is an agent that Carbide runs on the host and DPU of managed hosts for a variety of tasks:

- "Inventory" collection: Scout collects and transmits hardware properties of the host to carbide-core which can not be determined through out-of-band tooling.

- Execution of cleanup tasks whenever the bare metal instance using the host is released by a user

- Execution of machine validation tests

- Periodic Health checks

DPU Agent

dpu-agent is an agent that Carbide runs exclusively on DPUS managed by Carbide as a daemon.

DPU agent performs the following tasks:

- Configuring the DPU as required at any state during the hosts lifecycle. This process is described more in depth in DPU configuration.

- Executing periodic health-checks on the DPU

- Running the Forge metadata service (FMDS), which provides the users on the bare metal instance a HTTP based API to retrieve information about their running instance. Users can e.g. use FMDS to determine their Machine ID or certain Boot/OS information.

- Enabling auto-updates of the dpu-agent itself

- Deploying hotfixes for the DPU OS. These hotfixes reduce the need to perform a full DPU OS reinstallation, and thereby avoid bare metal instances becoming unavailable for their users due to OS updates.

DHCP Server

Carbide runs a custom DHCP server on the DPU, which handles all DHCP requests of the actual host. This means DHCP requests on the hosts primary networking interfaces will never leave the DPU and show up on the underlay network - which provides enhanced security and reliability. The DHCP server is configured by dpu-agent.

Carbide Control plane services

The carbide control plane consists of a number of services which work together to orchestrate the lifecycle of a managed host:

- carbide-core: The Carbide core service is the entrypoint into the control plane. It provides a gRPC API that all other components as well as users (site providers/tenants/site administrators) interact with, as well as implements the lifecycle management of all Carbide managed resources (VPCs, prefixes, Infiniband and NVLink partitions and bare metal instances). The Carbide Core section describes it further in detail.

- carbide-dhcp (DHCP): The DHCP server responds to DHCP requests for all devices on underlay networks. This includes Host BMCs, DPU BMCs and DPU OOB addresses. carbide-dhcp can be thought of as a stateless proxy: It does not acutally perform any IP address management - it just converts DHCP requests into gRPC format and forwards the gRPC based DHCP requests to carbide core.

- carbide-pxe (iPXE): The PXE server provides boot artifacts like iPXE scripts, iPXE user-data and OS images to managed hosts at boot time over HTTP. It determines which OS data to provide for a specific host by requesting the respective data from carbide core - therefore the PXE server is also stateless. Currently, managed hosts are configured to always boot from PXE. If a local bootable device is found, the host will boot it. Hosts can also be configured to always boot from a particular image for stateless configurations.

- carbide-hw-health (Hardware health): This service scrapes all host and DPU BMCs known by Carbide for system health information. It extracts measurements like fan speeds, temperaturs and leak indicators. These measurements are emitted as prometheus metrics on a

/metricsendpoint on port 9009. In addition to that, the service calls the carbide-core APIRecordHardwareHealthReportto set health alerts based on issues identified within the metrics. These alerts are merged within carbide-core into the aggregated-host-health - which is emitted in overall health metrics and used to decide whether hosts are usable as bare metal instances for tenants. - ssh-console: The SSH console provides bare metal-tenants and site-administrators virtual serial console access to hosts managed by Carbide. The ssh-console service also sends the output of each hosts serial console to the logging system (Loki), from where it can be queried using Grafana and logcli. In order to provide this functionality, the ssh-console service continuously connects to all host BMCs. The ssh-console service only forwards logs to users ("bare metal tenants") if they connect to the service and get authenticated.

- carbide-dns (DNS): Domain name service (DNS) functionality

is handled by two services. The

carbide-dnsservice handles DNS queries from the site controller and managed nodes and is authoritative for delegated zones.

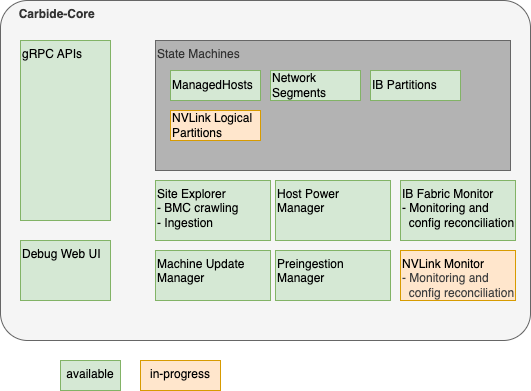

Carbide Core

Carbide core is the binary which provides the most essential services within the Carbide control plane. It provides a gRPC API that all other components as well as users (site providers/tenants/site administrators) interact with, as well as implements the lifecycle management of all Carbide managed resources (VPCs, prefixes, Infiniband and NVLink partitions and bare metal instances).

Carbide core can be considered as a "collection of independent components that are deployed within the same binary". These components are shown the following diagram, and are described further below:

Carbide core is the only component within carbide which interacts with the postgres database. This simplifies the rollout of database migrations throughout the product lifecycle.

Carbide Core Components

gRPC API handlers

The API handlers accept gRPC requests from Carbide users and internal system components. They provide users the ability to inspect the current state of the system, and modify the desired state of various components (e.g. create or reconfigure bare metal instances).

API handlers are all implemented within the trait/interface rpc::forge::forge_server::Forge. Various implementations delegate to the handlers subdirectory. For resources managed by Carbide, API handlers do not directly change the actual state of the resources (e.g. the provisioning state of a host). Instead of it, they only change the required state (e.g. "provisioning required", "termination required", etc). The state changes will be performed by state machines (details below). The carbide-core gRPC API supports

gRPC reflection to provide a machine readable API

description so clients can auto-generate code and RPC functions in the client.

Debug Web UI

Carbide core provides a debug UI under the /admin endpoint. The debug UI allows to inspect the state of all resources managed by Carbide via a variety of HTML pages. It e.g. allows to list details about all managed hosts and DPUs, or about the internal state of other components that are described within the Carbide Core section.

The Debug UI also provides access to various admin level tools. E.g. it

- allows to change the power state of hosts, reset the BMC, and change boot orders

- inspect the redfish tree of any BMC managed by Carbide

- allows admins to perform changes to a BMC (via HTTP POST) in a peer-reviewed and auditable fashion

- inspect UFM responses

State Machines

Carbide implements State Machines for all resources managed by Carbide. The state machines are implemented as idempotent state handling functions calls, which are scheduled by the system. State handling for various resource types is implemented indepently, e.g. the lifecycle of hosts is managed by different tasks and different code than the lifecycle of InfiniBand partitions.

Carbide implements state machines for

- Managed Hosts (Hosts + DPUs)

- Network Segments

- InfiniBand Partitions

- NVLink Logical Partitions

Details about the Carbide state handling implementation can be found here.

Site Explorer

Site Explorer is a process within Carbide Core that continuously monitors the state of all BMCs that are detected within the underlay network. The process acts as a "crawler". It continuously tries to perform redfish requests against all IPs on the underlay network that were provided by Carbide Core and records information that Carbide is required to manage the hosts in a follow-up. The information collected by Carbide is

- Serial Numbers

- Certain inventory data, e.g. the amount, type and serial numbers of DPUs

- Power State

- Configuration data, e.g. boot order, lockdown mode

- Firmware versions

Carbide users can inspect the data that site explorer discovers using the FindExploredEndpoints APIs as well as using the Carbide Debug Web UI.

Site Explorer requires an "Expected Machines" manifest to be deployed. Expected Machines describes the set of Machines that is expected to be managed by the Carbide instance - it encodes BMC MAC addresses, hardware default passwords and other details of these Machines. The manifest can be updated using a set of APIs, e.g. ReplaceAllExpectedMachines.

Beyond the basic BMC data collection, Carbide also performs the following tasks:

- It matches hosts with associated DPUs based on the redfish reports of both components - e.g. both the host an DPU need to reference the same DPU serial number.

- It kickstarts the ingestion process of the host once the host is in an "ingestable" state (all components are found and have up to date firmware versions).

Site Explorer emits metris with the prefix forge_endpoint_ and forge_site_explorer_.

Preingestion Manager

Preingestion Manager is a component which updates the firmware of hosts that are below the minimum required firmware version that is required to be ingestable. Usually firmware updates to hosts are deplyoed within the main machine lifecycle, as managed by the ManagedHost state machine.

In some rare cases - e.g. with very old host or DPU BMCs - the host ingestion process can't be started yet - e.g. because the BMC does not provide the necessary information to map the host to DPUs. In this case the firmware needs to be updated before ingestion, and preingestion manager performs this task.

Machine Update Manager

Machine Update Manager is a scheduler for Host and DPU firmware updates. It selects Machines with outdated software versions for automated updates. Machine update manager looks at various criteria to determine whether a Machine should get updated:

- The current Machine state - e.g. whether its occupied by a tenant. Right now only Machines within the

Readystate are selected for automated software updates - Whether the machine is healthy (no health alerts recorded on the machine)

- How many machines are already updating, and the overall amount of healthy hosts in the machine. Machine Update Manager will never update all Machines at once, and won't schedule additional updates in case the temporary loss of Machines would move the site under the Machine health SLA.

Machine Update Manager does not perform the actual updates - it only performs scheduling/selection. The updates are instead applied within the ManagedHost state machine. This approach is chosen in order to assure that only a single component (managedhost state machine) is managing a hosts lifecycle at any point in time.

Machine Update Manager is an optional component and can be disabled.

Host Power Manager

Host Power Manager is a component which orchestrates power actions against BMCs.

IB (InfiniBand) Fabric Monitor

InfiniBand fabric monitor is a periodic process within Carbide that performs all interactions with the InfiniBand fabric using UFM APIs.

In each run, IBFabricMonitor performs the following task:

- It checks the health of the fabric manager (UFM) by performing API calls

- It checks whether all security configurations for multitenancy are applied on UFM and emits alerts in case of inappropriate settings

- It fetches the actually applied InfiniBand partitioning information for each InfiniBand port on each host managed by Carbide and stores it in Carbide. The data can be inspected in the

Machine::ib_statusfield in the gRPC API. - If calls UFM APIs to bind ports (guids) to partitions (pkeys) according to the configuration of each host. This happens continuosly based on comparing the expected InfiniBand configuration of a host (whether it is used by a tenant or not, and how the tenant configured the InfiniBand interfaces) with the actually applied configuration (determined in the last step).

InfiniBand Fabric Monitor is an optional component. It only needs to be enabled in the case Carbide managed InfiniBand is required.

IB Fabric Monitor emits metrics with prefix forge_ib_monitor_.

NVLink Monitor

In development. The NVLink monitor will have similar responsibilities as IBFabricMonitor, but is used for monitoring and configuring NVLink. It will therefore interact with NMX APIs.

Dependency services

In addition to the Carbide API server components there are other supporting services run within the K8s site controller nodes.

K8s Persistent Storage Objects

Some site controller node services require persistent, durable storage to maintain state for their attendant pods. There are three different K8s statefulsets that run on the controller nodes:

- Loki - The loki/loki-0 pod instatites a single 50GB persistent volume and is used to store logs for the site controller components.

- Hashicorp Vault - Used by Kubernetes for certificate signing requests (CSRs). Vault

uses three each (one per K8s control node) of the

data-vaultandaudit-vault10GB PVs to protect and distribute the data in the absence of a shared storage solution. - Postgres - Used to store state for any Carbide or site controller components that

require it including the main "forgedb". There are three 10GB

pgdataPVs deployed to protect and distribute the data in the absence of a shared storage solution. Theforgedbdatabase is stored here.

Optional services

The point of having a site controller is to administer a site that has been populated with tenant managed hosts. Each managed host is a pairing of a Bluefield (BF) 2/3 DPUs and a host server (only two DPUs have been tested). During initial deployment scout runs and informs carbide-api of any discovered DPUs. Carbide completes the installation of services on the DPU and boots into regular operation mode. Thereafter the forge-dpu-agent starts as a daemon.

Each DPU runs the forge-dpu-agent which connects via gRPC to the API service in Carbide to get configuration instructions.

The forge-dpu-agent also runs the Forge metadata service (FMDS), which provides the users on the bare metal instance a HTTP based API to retrieve information about their running instance. Users can e.g. use FMDS to determine their Machine ID or certain Boot/OS information.

Redfish Workflow

NICo uses DMTF Redfish to discover, provision, and monitor bare-metal hosts and their DPUs through BMC (Baseboard Management Controller) interfaces. This document traces the end-to-end workflow from initial DHCP discovery through ongoing monitoring.

For the overall NICo architecture and component responsibilities, see Overview and components. The Site Explorer component described there is the primary consumer of Redfish APIs.

Workflow Summary

DHCP Request (BMC)

→ NICo DHCP (Kea hook)

→ Carbide Core (gRPC discover_dhcp)

→ Site Explorer probes Redfish endpoint

→ Authenticates, collects inventory

→ Pairs DPUs to hosts via serial number matching

→ Provisioning:

1. Set DPU boot to HTTP IPv4 UEFI

2. Power cycle DPU via Redfish

3. DPU PXE boots carbide.efi

4. BIOS config (SR-IOV, etc.)

5. Set host boot order (DPU first)

6. Power cycle host via Redfish

→ Ongoing monitoring:

- Firmware inventory (periodic)

- Sensor collection (60s interval)

- Prometheus metric export

1. DHCP Discovery

When a BMC on the underlay network sends a DHCP request, the NICo DHCP server (a Kea hook plugin) captures it and forwards the discovery information to Carbide Core.

The Kea hook is implemented as a Rust library with C FFI bindings. When a DHCP packet arrives, the hook:

- Extracts the MAC address, vendor class string, relay address, circuit ID, and remote ID from the DHCP packet

- Builds a

Discoverystruct with these fields - Sends a gRPC

discover_dhcp()request to Carbide Core with the MAC and vendor string - Receives back a

Machineresponse containing the network configuration (IP address, gateway, etc.) to return to the BMC

The vendor class string is parsed to identify the BMC type and capabilities. DHCP entries are tracked in the database by MAC address and associated with machine interfaces.

Key files:

crates/dhcp/src/discovery.rs—Discoverystruct and FFI entry points (discovery_fetch_machine)crates/dhcp/src/machine.rs—Machine::try_fetch()sends gRPC discovery requestcrates/dhcp/src/vendor_class.rs— Vendor class parsing and BMC type identificationcrates/api-model/src/dhcp_entry.rs—DhcpEntrydatabase model

2. Redfish Endpoint Probing and Inventory

Once NICo knows about a BMC IP from DHCP, the Site Explorer component continuously probes and inventories it via Redfish.

Probing

Site Explorer first sends an anonymous (unauthenticated) GET to /redfish/v1 (the Redfish service root) to detect the BMC vendor. The RedfishVendor enum identifies the vendor from the service root response, which determines vendor-specific behavior for subsequent operations.

Authentication

After vendor detection, Site Explorer creates an authenticated Redfish session using one of three methods:

- Anonymous — Used for initial probing only

- Direct — Username/password from the Expected Machines manifest (factory defaults)

- Key — Credential key lookup by BMC MAC address (after credential rotation)

Inventory Collection

With an authenticated session, Site Explorer queries a comprehensive set of Redfish resources and produces an EndpointExplorationReport containing:

| Data Collected | Redfish Source | Purpose |

|---|---|---|

| System serial numbers | GET /redfish/v1/Systems/{id} | Machine identification |

| Chassis serial numbers | GET /redfish/v1/Chassis/{id} | Fallback identification |

| Network adapters + serials | GET /redfish/v1/Chassis/{id}/NetworkAdapters | DPU-host pairing |

| PCIe devices + serials | GET /redfish/v1/Systems/{id} (PCIeDevices) | DPU-host pairing |

| Manager info | GET /redfish/v1/Managers/{id} | BMC firmware version |

| Ethernet interfaces | GET /redfish/v1/Managers/{id}/EthernetInterfaces | BMC network info |

| Firmware versions | GET /redfish/v1/UpdateService/FirmwareInventory | Version tracking |

| Boot configuration | GET /redfish/v1/Systems/{id}/BootOptions | Boot order state |

| Power state | GET /redfish/v1/Systems/{id} (PowerState) | Current state |

Serial numbers are trimmed of whitespace. If system.serial_number is missing, the chassis serial number is used as a fallback.

Key files:

crates/api/src/site_explorer/redfish.rs—RedfishClient:probe_redfish_endpoint(),create_redfish_client(), inventory queriescrates/api/src/site_explorer/bmc_endpoint_explorer.rs—BmcEndpointExplorerorchestrates credential lookup and explorationcrates/api-model/src/bmc_info.rs—BmcInfomodel (IP, port, MAC, firmware version)

3. DPU-Host Pairing

Once Site Explorer has explored both host BMCs and DPU BMCs, it matches them into host-DPU pairs using serial number correlation. This is the core logic that answers: "which DPU belongs to which host?"

Matching Algorithm

The algorithm has three strategies, tried in order:

Step 1 — Build DPU serial number map:

For each explored DPU endpoint, extract system.serial_number and create a map: DPU serial → explored endpoint.

Step 2 — Primary match via PCIe devices:

For each host, iterate through system.pcie_devices. For each device where is_bluefield() returns true (BF2, BF3, or BF3 Super NIC), look up pcie_device.serial_number in the DPU serial map. A match means this DPU is physically installed in this host.

Step 3 — Fallback match via chassis network adapters:

If no BlueField PCIe devices were found (Step 2 count = 0), iterate through chassis.network_adapters instead. For each adapter where is_bluefield_model(part_number) is true, look up network_adapter.serial_number in the DPU serial map.

Step 4 — Final fallback via expected machines manifest:

If the explored matches are incomplete, check expected_machine.fallback_dpu_serial_numbers for manually specified DPU-to-host associations.

Validation

Before accepting a pairing, NICo validates:

- DPU mode: The DPU must be in DPU mode, not NIC mode. BlueFields in NIC mode are excluded from pairing.

- DPU model configuration:

check_and_configure_dpu_mode()verifies the DPU is correctly configured for its model. Hosts with misconfigured DPUs are not ingested. - Completeness: The number of explored DPUs must match the number of BlueField devices the host reports. Incomplete pairings are deferred.

Ingestion

Once all DPUs are matched and validated, the host enters an "ingestable" state and Site Explorer kickstarts the ingestion process via the ManagedHost state machine.

Key file:

crates/api/src/site_explorer/mod.rs—identify_managed_hosts()with the complete pairing algorithm

4. DPU Provisioning

After pairing, the DPU must be provisioned with NICo software. This is orchestrated via Temporal workflows (in carbide-rest) with Redfish power control (in ncx-infra-controller-core).

Boot Configuration

The DPU is configured to boot from HTTP IPv4 UEFI, which directs it to the NICo PXE server. The PXE server serves different artifacts based on architecture:

- ARM (BlueField DPUs):

carbide.efiwith cloud-init user-data containingmachine_idandserver_uri - x86 (Hosts):

scout.efiwith machine discovery parameters (cli_cmd=auto-detect)

Power Cycle

The DPU is power-cycled via Redfish to trigger the network boot:

POST /redfish/v1/Systems/{system_id}/Actions/ComputerSystem.Reset

Body: {"ResetType": "GracefulRestart"}

The power control operation supports multiple reset types: On, ForceOff, GracefulShutdown, GracefulRestart, ForceRestart, ACPowercycle, PowerCycle.

Installation

After PXE boot, the DPU:

- Fetches

carbide.efifrom the NICo PXE server over HTTP - Receives cloud-init configuration with its

machine_idand NICo API endpoint - Installs and starts the DPU agent (

dpu-agent), which connects back to Carbide Core via gRPC

Key files:

crates/api/src/ipxe.rs— iPXE instruction generation per architecturepxe/ipxe/local/embed.ipxe— iPXE boot script templatecarbide-rest/workflow/pkg/workflow/instance/reboot.go—RebootInstanceTemporal workflowcarbide-rest/site-workflow/pkg/grpc/client/instance_powercycle.go— Power cycle gRPC call to site agent

5. Host Configuration and Boot

With the DPU provisioned, NICo configures the host BIOS and boot order via Redfish.

BIOS Attribute Setting

NICo sets BIOS attributes required for bare-metal infrastructure operation. This includes SR-IOV enablement and other platform-specific settings. BIOS operations use the libredfish Redfish trait:

bios()— Read current BIOS attributesset_bios()— Set BIOS attribute valuesmachine_setup()— Apply infrastructure-specific BIOS configurationis_bios_setup()/machine_setup_status()— Check configuration state

These translate to Redfish calls:

GET /redfish/v1/Systems/{id}/Bios — Read attributes

PATCH /redfish/v1/Systems/{id}/Bios/Settings — Write attributes (pending next reboot)

Boot Order Configuration

The host boot order is set so the DPU's network interface is the primary boot device:

#![allow(unused)] fn main() { set_boot_order_dpu_first(bmc_ip, credentials, boot_interface_mac) }

This configures the UEFI boot order to prioritize the DPU's PF MAC address, ensuring the host boots through the DPU's network path.

Host Reboot

After BIOS and boot order changes, the host is power-cycled via Redfish to apply the configuration:

POST /redfish/v1/Systems/{system_id}/Actions/ComputerSystem.Reset

Body: {"ResetType": "GracefulRestart"}

Power cycles are rate-limited to avoid excessive reboots (checked via time_since_redfish_powercycle against config.reset_rate_limit).

Key files:

crates/api/src/site_explorer/redfish.rs—set_boot_order_dpu_first(),redfish_powercycle()crates/api/src/site_explorer/bmc_endpoint_explorer.rs— Orchestrates boot order with credential lookup

6. Ongoing Monitoring

Once hosts are provisioned, the carbide-hw-health service continuously monitors both host BMCs and DPU BMCs via Redfish. The endpoint discovery calls find_machine_ids with include_dpus: true, so every BMC known to NICo (host and DPU) gets its own set of collectors:

- Health monitor — sensor collection and health alert reporting

- Firmware collector — firmware inventory polling

- Logs collector — BMC event log collection

Each collector runs independently per BMC endpoint, meaning a host with two DPUs will have three sets of collectors (one for the host BMC, one for each DPU BMC).

Firmware Inventory

The FirmwareCollector periodically queries each BMC's firmware inventory using nv-redfish:

#![allow(unused)] fn main() { let service_root = ServiceRoot::new(bmc.clone()).await?; let update_service = service_root.update_service().await?; let firmware_inventories = update_service.firmware_inventories().await?; }

This translates to:

GET /redfish/v1

GET /redfish/v1/UpdateService

GET /redfish/v1/UpdateService/FirmwareInventory

GET /redfish/v1/UpdateService/FirmwareInventory/{id} (for each item)

Each firmware item's name and version is exported as a Prometheus gauge metric with labels:

serial_number— Machine chassis serialmachine_id— NICo machine UUIDbmc_mac— BMC MAC addressfirmware_name— Component name (e.g., "BMC_Firmware", "DPU_NIC")version— Firmware version string

Sensor Collection

Sensors (temperature, fan speed, power consumption, current draw) are collected at configurable intervals:

| Config Parameter | Default | Description |

|---|---|---|

sensor_fetch_interval | 60 seconds | How often sensors are polled |

sensor_fetch_concurrency | 10 | Maximum concurrent BMC sensor queries |

include_sensor_thresholds | true | Whether to include threshold values |

Sensor data is read from:

GET /redfish/v1/Chassis/{id}/Sensors

GET /redfish/v1/Chassis/{id}/Sensors/{sensor_id}

Sensor types include: Temperature (Cel), Rotational/Fan (RPM), Power (W), and Current (A).

All sensor data is exported as Prometheus metrics on the /metrics endpoint (port 9009) and fed into Carbide Core via RecordHardwareHealthReport for health aggregation.

Key files:

crates/health/src/firmware_collector.rs—FirmwareCollectorusing nv-redfishcrates/health/src/discovery.rs— Creates and manages collectors per endpointcrates/health/src/config.rs— Polling intervals and concurrency configuration

Redfish Libraries

NICo uses two Redfish client libraries concurrently. nv-redfish is replacing libredfish over time.

| Library | Version | Language | Used For | Location in Code |

|---|---|---|---|---|

| libredfish | 0.39.3 | Rust | Site Explorer: discovery, boot config, power control, BIOS, account management | crates/api/src/site_explorer/ |

| nv-redfish | 0.1.4 | Rust | Health monitoring: firmware inventory collection | crates/health/src/ |

libredfish provides a Redfish trait with vendor-specific implementations (Dell, HPE, Lenovo, Supermicro, NVIDIA DPU/GB200/GH200/Viking). It handles the full breadth of BMC operations.

nv-redfish uses a code-generation approach: CSDL (Redfish schema XML) is compiled into strongly-typed Rust at build time. It is feature-gated so only needed Redfish services are compiled in. Currently enabled features in NICo: std-redfish, update-service, resource-status.

Both libraries are declared in the workspace Cargo.toml.

Redfish Endpoints Reference

For the complete list of Redfish endpoints and their required response fields, see Redfish Endpoints Reference.

Redfish Endpoints Reference

This page documents all Redfish endpoints used by NCX Infra Controller (NICo), organized by resource group. Each section includes endpoint tables, required response fields with their importance to NICo, and vendor-specific notes.

Field importance levels:

- Critical — NICo cannot function correctly without this field. Pairing, identification, or core workflows fail.

- Required — Expected by NICo and used in normal operation. Missing values cause degraded behavior.

- Recommended — Used when available, with graceful fallback if absent.

- Optional — Informational or used only in specific configurations.

For the manually-maintained tracker with full vendor coverage and response payload examples, see the DSX OEM Redfish APIs spreadsheet.

Service Root

Code: get_service_root() in libredfish; probe_redfish_endpoint() in site_explorer/redfish.rs

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1 | GET | Service root, vendor detection |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

Vendor | Required | Vendor detection — determines all vendor-specific behavior |

Systems | Required | Link to systems collection |

Managers | Required | Link to managers collection |

Chassis | Required | Link to chassis collection |

UpdateService | Required | Link to firmware update service |

Systems

Code: get_systems(), get_system() in libredfish; exploration in site_explorer/redfish.rs

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Systems | GET | List computer systems |

/redfish/v1/Systems/{id} | GET | System info, serial number, power state |

/redfish/v1/Systems/{id} | PATCH | Boot source override (boot_once/boot_first) |

/redfish/v1/Systems/{id}/Actions/ComputerSystem.Reset | POST | Power control (On/ForceOff/GracefulRestart/ForceRestart/ACPowercycle/PowerCycle) |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

SerialNumber | Critical | Machine ID generation via DMI hash. Pairing fails without it. |

Id | Required | DPU detection (checks for "bluefield" substring) |

PowerState | Required | Health reporting, preingestion state validation. Values: On, Off, PoweringOn, PoweringOff, Paused, Reset |

Boot.BootOrder | Required | Boot order reporting and verification |

Boot.BootOptions | Required | Link to boot options for interface detection |

PCIeDevices | Required | Array of links — primary DPU-host pairing path |

EthernetInterfaces | Required | Link to system NICs for DPU pairing |

Model | Recommended | DPU model detection (BF2 vs BF3). Falls back gracefully. |

Manufacturer | Recommended | Machine ID generation. Has DEFAULT_DMI_SYSTEM_MANUFACTURER fallback. |

SKU | Optional | Validation against expected machines manifest |

BiosVersion | Optional | BIOS version tracking |

TrustedModules | Optional | TPM status reporting |

Sample response (GET /redfish/v1/Systems/{id}):

{

"Id": "System.Embedded.1",

"SerialNumber": "J1234XY",

"PowerState": "On",

"Manufacturer": "Dell Inc.",

"Model": "PowerEdge R750",

"Boot": {

"BootOrder": ["NIC.Slot.3-1", "HardDisk.Direct.0-0:AHCI"],

"BootOptions": { "@odata.id": "/redfish/v1/Systems/System.Embedded.1/BootOptions" }

},

"PCIeDevices": [

{ "@odata.id": "/redfish/v1/Systems/System.Embedded.1/PCIeDevices/236-0" }

],

"EthernetInterfaces": { "@odata.id": "/redfish/v1/Systems/System.Embedded.1/EthernetInterfaces" }

}

Vendor-specific notes: Dell/Supermicro/HPE have system info overrides. NVIDIA DPU uses Oem.Nvidia for mode set/rshim. NVIDIA GBx00 uses Oem.Nvidia for machine setup.

System Ethernet Interfaces

Code: get_system_ethernet_interfaces(), get_system_ethernet_interface() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Systems/{id}/EthernetInterfaces | GET | List system network interfaces |

/redfish/v1/Systems/{id}/EthernetInterfaces/{id} | GET | Interface details (MAC, UEFI path) |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

MACAddress (or MacAddress) | Critical | DPU-host pairing, interface identification. Accepts both field name variants. |

UefiDevicePath | Required | Primary interface detection via PCI path ordering (parsed to format "2.1.0.0.0") |

Id | Required | Interface identification |

InterfaceEnabled | Optional | Error handling — disabled interfaces may have invalid MAC values |

Chassis

Code: get_chassis_all(), get_chassis(), get_chassis_assembly() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Chassis | GET | List chassis |

/redfish/v1/Chassis/{id} | GET | Chassis info, serial number |

/redfish/v1/Chassis/{id}/Assembly | GET | Assembly info (GB200 serial extraction) |

/redfish/v1/Chassis/{id}/Actions/Chassis.Reset | POST | Chassis power control (AC power cycle) |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

Id | Critical | System classification: "Card1"=DPU, "powershelf"=power shelf, "mgx_nvswitch_0"=NVSwitch, "Chassis_0"=GB200 |

SerialNumber | Critical | Fallback for system serial (DPU uses Chassis/Card1 serial). Power shelf/switch IDs. Whitespace trimmed. |

PartNumber | Required | BlueField DPU identification via part number matching (900-9d3b6, SN37B36732, etc.) |

NetworkAdapters | Required | Link to network adapters collection for DPU identification |

Model | Recommended | Model identification. GB200: Assembly checked for "GB200 NVL" model. |

Manufacturer | Recommended | Power shelf vendor identification. Has fallback defaults. |

Oem.Nvidia.chassis_physical_slot_number | Optional | Physical slot in multi-node systems |

Oem.Nvidia.compute_tray_index | Optional | Tray index in modular systems |

Oem.Nvidia.topology_id | Optional | System topology identifier |

Sample response (GET /redfish/v1/Chassis/{id}):

{

"Id": "Card1",

"SerialNumber": "MBF2M516A-CECA_Ax_SN123456",

"PartNumber": "900-9D3B6-00CV-AA0",

"Model": "BlueField-2 DPU 25GbE",

"Manufacturer": "NVIDIA",

"NetworkAdapters": { "@odata.id": "/redfish/v1/Chassis/Card1/NetworkAdapters" }

}

Network Adapters

Code: get_chassis_network_adapters(), get_chassis_network_adapter() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Chassis/{id}/NetworkAdapters | GET | List network adapters |

/redfish/v1/Chassis/{id}/NetworkAdapters/{id} | GET | Adapter details (serial, part number) |

/redfish/v1/Chassis/{id}/NetworkAdapters/{id}/NetworkDeviceFunctions | GET | Network device functions (NVIDIA DPU) |

/redfish/v1/Chassis/{id}/NetworkAdapters/{id}/Ports | GET | Network adapter ports |

/redfish/v1/Chassis/{id}/NetworkAdapters/{id}/Ports/{id} | GET | Port details |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

SerialNumber | Critical | DPU-host pairing fallback path. Must be visible to Host BMC. Whitespace trimmed. |

PartNumber | Critical | BlueField/SuperNIC identification via is_bluefield_model() |

Id | Required | Adapter tracking |

Sample response (GET /redfish/v1/Chassis/{id}/NetworkAdapters/{id}):

{

"Id": "ConnectX6_1",

"SerialNumber": "MT2243X01234",

"PartNumber": "MCX653106A-HDAT_Ax",

"Controllers": [

{

"FirmwarePackageVersion": "24.37.1014",

"Links": { "PCIeDevices": [{ "@odata.id": "/redfish/v1/Systems/System.Embedded.1/PCIeDevices/236-0" }] }

}

]

}

PCIe Devices

Code: pcie_devices() in libredfish; site_explorer exploration

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Chassis/{id}/PCIeDevices | GET | PCIe device list (Supermicro uses chassis path) |

/redfish/v1/Chassis/{id}/PCIeDevices/{id} | GET | PCIe device details |

/redfish/v1/Systems/{id} (PCIeDevices array) | GET | PCIe device links embedded in system response |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

SerialNumber | Critical | Primary DPU-host pairing — matched against DPU system serial numbers |

PartNumber | Critical | BlueField identification via is_bluefield_model() (BF2, BF3, BF3 SuperNIC) |

Id | Required | Device tracking |

Vendor-specific note: Supermicro uses Chassis/{id}/PCIeDevices; others embed PCIeDevices links in Systems/{id} response.

Managers

Code: get_managers(), get_manager() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Managers | GET | List BMC managers |

/redfish/v1/Managers/{id} | GET | BMC info, firmware version |

/redfish/v1/Managers/{id}/Actions/Manager.Reset | POST | BMC reset |

/redfish/v1/Managers/{id}/Actions/Manager.ResetToDefaults | POST | BMC factory reset |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

Id | Required | Manager identification. Viking detection: id == "BMC". Sets default manager ID for subsequent calls. |

FirmwareVersion | Required | BMC firmware version tracking |

UUID | Recommended | Manager unique identification |

EthernetInterfaces | Required | Link to BMC network interfaces |

LogServices | Required | Link to log services for event collection |

Vendor-specific notes: HPE has lockdown status override. Dell uses Managers/{id}/Attributes for lockdown/remote access. Supermicro uses Oem/Supermicro/SysLockdown.

Manager Ethernet Interfaces

Code: get_manager_ethernet_interfaces(), get_manager_ethernet_interface() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Managers/{id}/EthernetInterfaces | GET | List BMC interfaces |

/redfish/v1/Managers/{id}/EthernetInterfaces/{id} | GET | BMC MAC, IP configuration |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

MACAddress | Critical | BMC identification and credential storage/lookup |

Sample response (GET /redfish/v1/Managers/{id}/EthernetInterfaces/{id}):

{

"Id": "1",

"MACAddress": "B8:3F:D2:90:95:82",

"IPv4Addresses": [{ "Address": "10.0.1.100" }]

}

Boot Options

Code: get_boot_options(), get_boot_option() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Systems/{id}/BootOptions | GET | List boot options |

/redfish/v1/Systems/{id}/BootOptions/{id} | GET | Boot option details |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

DisplayName | Required | OOB interface detection (checks for "OOB" string) |

UefiDevicePath | Required | MAC extraction via regex MAC\((?<mac>[[:alnum:]]+)\, — e.g. extracts B83FD2909582 to B8:3F:D2:90:95:82 |

BootOptionEnabled | Optional | Boot option state |

BootOptionReference | Required | Boot option ordering |

Sample response (GET /redfish/v1/Systems/{id}/BootOptions/{id}):

{

"Id": "NIC.Slot.3-1",

"DisplayName": "PXE OOB NIC Slot 3 Port 1",

"UefiDevicePath": "PciRoot(0x2)/Pci(0x1,0x0)/Pci(0x0,0x0)/MAC(B83FD2909582,0x1)",

"BootOptionEnabled": true,

"BootOptionReference": "NIC.Slot.3-1"

}

BIOS

Code: bios(), set_bios(), pending(), clear_pending(), reset_bios(), change_bios_password() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Systems/{id}/Bios | GET | Read BIOS attributes |

/redfish/v1/Systems/{id}/Bios/Settings | GET | Read pending BIOS changes |

/redfish/v1/Systems/{id}/Bios/Settings | PATCH | Write BIOS attributes (pending next reboot) |

/redfish/v1/Systems/{id}/Bios/Actions/Bios.ResetBios | POST | BIOS factory reset |

/redfish/v1/Systems/{id}/Bios/Actions/Bios.ChangePassword | POST | UEFI password management |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

Attributes | Required | BIOS attribute read/write (SR-IOV enablement, machine setup) |

Vendor-specific paths: HPE uses /Bios/settings (lowercase). Lenovo uses /Bios/Pending. Viking uses /Bios/SD. Dell/NVIDIA DPU/GBx00/Supermicro have attribute-specific overrides.

Secure Boot

Code: get_secure_boot(), enable_secure_boot(), disable_secure_boot(), get_secure_boot_certificates(), add_secure_boot_certificate() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Systems/{id}/SecureBoot | GET | Read secure boot status |

/redfish/v1/Systems/{id}/SecureBoot | PATCH | Enable/disable secure boot |

/redfish/v1/Systems/{id}/SecureBoot/SecureBootDatabases/{db}/Certificates | GET | List secure boot certs |

/redfish/v1/Systems/{id}/SecureBoot/SecureBootDatabases/{db}/Certificates | POST | Add secure boot cert |

/redfish/v1/Systems/{id}/SecureBoot/SecureBootDatabases/{db}/Certificates/{id} | GET | Cert details |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

SecureBootEnable | Required | Secure boot enabled status |

SecureBootCurrentBoot | Required | Current boot secure boot state |

SecureBootMode | Optional | Secure boot mode reporting |

Account Service

Code: get_accounts(), change_password_by_id(), create_user(), delete_user(), set_machine_password_policy() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/AccountService | PATCH | Password policy/lockout settings |

/redfish/v1/AccountService/Accounts | GET | List user accounts |

/redfish/v1/AccountService/Accounts | POST | Create user account |

/redfish/v1/AccountService/Accounts/{id} | GET | Account details |

/redfish/v1/AccountService/Accounts/{id} | PATCH | Password/username change |

/redfish/v1/AccountService/Accounts/{id} | DELETE | Delete user account |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

UserName | Required | Account management |

Password | Required | Credential rotation |

RoleId | Required | Admin role verification |

Id | Required | Account identification. Vendor-specific: Lenovo="1", AMI/Viking="2", NVIDIA=current user. |

Firmware Inventory

Code: get_software_inventories(), get_firmware() in libredfish; FirmwareCollector in health crate via nv-redfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/UpdateService | GET | Update service info |

/redfish/v1/UpdateService/FirmwareInventory | GET | List firmware components |

/redfish/v1/UpdateService/FirmwareInventory/{id} | GET | Component version details |

/redfish/v1/UpdateService/Actions/UpdateService.SimpleUpdate | POST | URL-based firmware update |

/redfish/v1/UpdateService/MultipartUpload | POST | Binary firmware upload (Dell) |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

Id | Required | Component ID — matched against firmware config regex. Vendor-specific IDs: NVIDIA DPU=DPU_NIC/DPU_UEFI, Supermicro=CPLD_Backplane_1/CPLD_Motherboard, GBx00=EROT_BIOS_0/HGX_FW_BMC_0/HostBMC_0 |

Version | Required | Firmware version — used for upgrade decisions. DPU versions: trim, lowercase, remove "bf-" prefix. |

Name | Required | Component name — exported as Prometheus metric label firmware_name |

ReleaseDate | Optional | Informational |

Sample response (GET /redfish/v1/UpdateService/FirmwareInventory/{id}):

{

"Id": "BMC_Firmware",

"Name": "BMC Firmware",

"Version": "7.00.00.171",

"ReleaseDate": "2024-06-15T00:00:00Z",

"Updateable": true

}

Sensors and Thermal (Health Monitoring)

Code: monitor.rs in health crate; get_thermal_metrics(), get_power_metrics() in libredfish

All endpoints below are polled at the configured sensor_fetch_interval (default 60 seconds).

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Chassis/{id}/Sensors | GET | Environmental sensors |

/redfish/v1/Chassis/{id}/Thermal | GET | Temperature/fan readings |

/redfish/v1/Chassis/{id}/Power | GET | Power consumption/PSU |

/redfish/v1/Chassis/{id}/PowerSupplies | GET | Power supply collection |

/redfish/v1/Chassis/{id}/PowerSupplies/{id}/Sensors | GET | PSU sensor metrics |

/redfish/v1/Systems/{id}/Processors/{id}/EnvironmentSensors | GET | CPU temperature |

/redfish/v1/Systems/{id}/Memory/{id}/EnvironmentSensors | GET | Memory temperature |

/redfish/v1/Systems/{id}/Storage/{id}/Drives/{id}/EnvironmentSensors | GET | Drive temperature |

/redfish/v1/Chassis/{id}/Drives | GET | Drive info (GBx00) |

/redfish/v1/Chassis/{id}/ThermalSubsystem/ThermalMetrics | GET | Thermal metrics (GBx00) |

/redfish/v1/Chassis/{id}/ThermalSubsystem/LeakDetection/LeakDetectors | GET | Leak detection (GBx00) |

/redfish/v1/Chassis/{id}/EnvironmentMetrics | GET | Chassis power (GBx00/DPS) |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

Reading / ReadingCelsius | Required | Sensor value for Prometheus metrics |

ReadingUnits / ReadingType | Required | Sensor classification: Cel, RPM, W, A |

Name | Required | Sensor identification in Prometheus labels |

Status.Health | Required | Health state: Ok, Warning, Critical |

Thresholds.UpperCritical | Optional | Alert thresholds (configurable via include_sensor_thresholds) |

Thresholds.LowerCritical | Optional | Alert thresholds |

ReadingRangeMax / ReadingRangeMin | Optional | Valid reading range |

Log Services

Code: logs_collector.rs in health crate; get_bmc_event_log(), get_system_event_log() in libredfish

Log collection runs at 5-minute intervals and uses incremental fetching: ?$filter=Id gt '{last_id}'

Discovery endpoints (all vendors)

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Managers/{id}/LogServices | GET | Discover manager log services |

/redfish/v1/Chassis/{id}/LogServices | GET | Chassis log services |

/redfish/v1/Systems/{id}/LogServices | GET | System log services |

BMC event log entries (vendor-specific)

| Endpoint | Method | Vendor |

|---|---|---|

/redfish/v1/Managers/{id}/LogServices/Sel/Entries | GET | Dell |

/redfish/v1/Managers/{id}/LogServices/IEL/Entries | GET | HPE |

/redfish/v1/Managers/{id}/LogServices/SEL/Entries | GET | Viking |

/redfish/v1/Systems/{id}/LogServices/AuditLog/Entries | GET | Lenovo |

System event log entries (vendor-specific)

| Endpoint | Method | Vendor |

|---|---|---|

/redfish/v1/Systems/{id}/LogServices/EventLog/Entries | GET | NVIDIA DPU |

/redfish/v1/Systems/{id}/LogServices/SEL/Entries | GET | NVIDIA DPU/GBx00 |

/redfish/v1/Systems/{id}/LogServices/IML/Entries | GET | HPE |

Key Response Fields

| Field | Importance | NICo Usage |

|---|---|---|

Id | Required | Entry identifier for incremental collection |

Created | Required | Timestamp |

Severity | Required | Critical/Warning/Ok — maps to OTEL severity |

Message | Required | Log message text |

MessageArgs | Optional | Message format arguments |

Task Service

Code: get_tasks(), get_task() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/TaskService/Tasks | GET | List async operation tasks |

/redfish/v1/TaskService/Tasks/{id} | GET | Task status (firmware updates, lockdown, etc.) |

Dell also uses Managers/{id}/Jobs/{id} (converted to Task internally).

Component Integrity

Code: get_component_integrities(), get_component_ca_certificate(), trigger_evidence_collection(), get_evidence() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/ComponentIntegrity | GET | SPDM attestation components |

{component}/Certificates/CertChain | GET | Component CA certificate |

{component}/Actions/ComponentIntegrity.SPDMGetSignedMeasurements | POST | Trigger evidence collection |

Manager Network Protocol

Code: get_manager_network_protocol() in libredfish

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Managers/{id}/NetworkProtocol | GET | BMC network services config |

/redfish/v1/Managers/{id}/NetworkProtocol | PATCH | Enable/disable IPMI access |

Storage

Code: get_drives_metrics() in libredfish; discover_drive_entities() in health monitor

| Endpoint | Method | Purpose |

|---|---|---|

/redfish/v1/Systems/{id}/Storage | GET | List storage controllers |

/redfish/v1/Systems/{id}/Storage/{id} | GET | Storage controller details |

/redfish/v1/Systems/{id}/Storage/{id}/Drives/{id} | GET | Drive details |

/redfish/v1/Systems/{id}/Storage/{id}/Volumes | POST | Create RAID volume (Dell) |

NVIDIA OEM Extensions

Code: Various methods in libredfish nvidia_dpu.rs, nvidia_gh200.rs, nvidia_gb200.rs, nvidia_gbswitch.rs

| Endpoint | Method | Vendor | Purpose |

|---|---|---|---|

Systems/{id}/Oem/Nvidia | GET | NVIDIA DPU | Base MAC, rshim status, NIC mode |

Systems/{id}/Oem/Nvidia/Actions/HostRshim.Set | POST | NVIDIA DPU | Set rshim (BF3) |

Systems/{id}/Oem/Nvidia/Actions/Mode.Set | POST | NVIDIA DPU | Set NIC/DPU mode |

Managers/Bluefield_BMC/Oem/Nvidia | PATCH | NVIDIA DPU | Enable rshim |

Chassis/BMC_0/Actions/Oem/NvidiaChassis.AuxPowerReset | POST | NVIDIA GBx00 | AC power cycle |

Chassis/HGX_Chassis_0 | GET | NVIDIA GBx00 | HGX chassis info |

Systems/HGX_Baseboard_0/Processors | GET | NVIDIA GBx00 | GPU enumeration (DPS) |

Systems/HGX_Baseboard_0/Processors/{id}/Oem/Nvidia/WorkloadPowerProfile | GET/POST | NVIDIA GBx00 | WPPS config (DPS) |

CI/CD Pipeline Endpoints

These endpoints are used by the CI/CD tooling (cicd/redfish_cli.py, cicd/install_wrapper.py) and are not part of core NICo.

| Endpoint | Method | Purpose |

|---|---|---|

{System}/VirtualMedia or {Manager}/VirtualMedia | GET | Virtual media devices |

{VirtualMedia}/Actions/VirtualMedia.InsertMedia | POST | Mount ISO image |

{VirtualMedia}/Actions/VirtualMedia.EjectMedia | POST | Eject media |

Systems/{id} | PATCH | Boot source override (CD once) |

{Manager}/HostInterfaces/{id} | PATCH | Enable/disable OS-to-BMC NIC |

SessionService/Sessions | POST | Create auth session |

Reliable State Handling

NCX Infra Controller (NICo) provides reliable state handling for a variety of resources via a mechanism called the state controller.

"Reliable state handling" refers to the ability of resources to traverse through lifecycle states even in the case of intermittent errors (e.g. a Host BMC or a dependent service is temporarily unavailable) via automated periodic retries. It also means that state handling is deterministic and free of race conditions.

These are the resources managed by the state controller:

- Managed Host Lifecycle

- IB Partition Lifecycle

- Network Segment Lifecycle

- Machine Lifecycle

The functionality of the state controller is described as follows:

- NICo defines some generic interfaces for resources that have states that need to be handled: the StateHandler interface and the IO interface. The handler implementation specifies how to transition between states, while IO defines how to load resources from the database and store them back there.

- The handler function is executed periodically (typically every 30s) and is implemented in an idempotent fashion, so, even if something fails intermittently, it will be automatically retried at the next iteration.

- The state handler is the only entity that directly changes the lifecycle state of a resource. And the only way to transition to a new state is by the handler function returning the new state as result. Other components like API handlers can only queue intents/requests (e.g. "Use this host as an instance", "Report a network status change", "Report a health status change"), preventing many race conditions.

- For hosts/machines, the implementation is basically a single, large switch/case ("if this state, then wait for this signal, and go to the next"). Modelling states as Rust enums is immensely useful here. The compiler raises errors if a particular state or substate is not handled. The top level host lifecycle state is defined here, and it is very large. The states also all serialize into JSON values, which can be observed in the state history with admin tools for each resource.

- State diagrams are provided on the Managed Host State Diagrams page.