Structured Data

Example Features

This example deploys a developer RAG pipeline for chat Q&A and serves inferencing from an NVIDIA API Catalog endpoint instead of NVIDIA Triton Inference Server, a local Llama 2 model, or local GPUs.

Developers get free credits for 10K requests to any of the available models.

The key difference from the Using the NVIDIA API Catalog example is that this example demonstrates how to use RAG with structured CSV data.

This example uses models from the NVIDIA API Catalog. This approach does not require embedding models or vector database solutions. Instead, the example uses PandasAI to manage the workflow.

For ingestion, the query server loads the structured data from a CSV file into a Pandas dataframe. The query server can ingest multiple CSV files, provided the files have identical columns. Ingestion of CSV files with differing columns is not supported and results in an exception.

The core functionality uses a PandasAI agent to extract information from the dataframe. This agent combines the query with the structure of the dataframe into an LLM prompt. The LLM then generates Python code to extract the required information from the dataframe. Subsequently, this generated code is executed on the dataframe and yields an output dataframe.

To demonstrate the example, sample CSV files are available.

These are part of the structured data example Helm chart and represent a subset of the Microsoft Azure Predictive Maintenance from Kaggle.

The CSV data retrieval prompt is specifically tuned for three CSV files from this dataset: PdM_machines.csv, PdM_errors.csv, and PdM_failures.csv.

The CSV files to use are specified in the rag-app-structured-data-chatbot.yaml Docker Compose file by updating the environment variable CSV_NAME.

The default value is PdM_machines, but can be changed to PdM_errors or PdM_failures.

Customization of the CSV data retrieval prompt is not supported.

Model |

Embedding |

Framework |

Description |

Multi-GPU |

TRT-LLM |

Model Location |

Triton |

Vector Database |

|---|---|---|---|---|---|---|---|---|

ai-mixtral-8x7b-instruct for response generation ai-mixtral-8x7b-instruct for PandasAI |

Not Applicable |

PandasAI |

QA chatbot |

NO |

NO |

API Catalog |

NO |

Not Applicable |

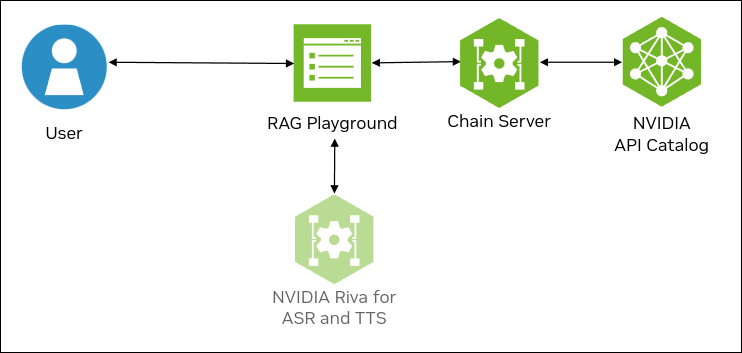

The following figure shows the sample topology:

The sample chat bot web application communicates with the chain server. The chain server sends inference requests to an NVIDIA API Catalog endpoint.

Optionally, you can deploy NVIDIA Riva. Riva can use automatic speech recognition to transcribe your questions and use text-to-speech to speak the answers aloud.

Prerequisites

Clone the Generative AI examples Git repository using Git LFS:

$ sudo apt -y install git-lfs $ git clone git@github.com:NVIDIA/GenerativeAIExamples.git $ cd GenerativeAIExamples/ $ git lfs pull

Install Docker Engine and Docker Compose. Refer to the instructions for Ubuntu.

Optional: Enable NVIDIA Riva automatic speech recognition (ASR) and text to speech (TTS).

To launch a Riva server locally, refer to the Riva Quick Start Guide.

In the provided

config.shscript, setservice_enabled_asr=trueandservice_enabled_tts=true, and select the desired ASR and TTS languages by adding the appropriate language codes toasr_language_codeandtts_language_code.After the server is running, assign its IP address (or hostname) and port (50051 by default) to

RIVA_API_URIindeploy/compose/compose.env.

Alternatively, you can use a hosted Riva API endpoint. You might need to obtain an API key and/or Function ID for access.

In

deploy/compose/compose.env, make the following assignments as necessary:export RIVA_API_URI="<riva-api-address/hostname>:<port>" export RIVA_API_KEY="<riva-api-key>" export RIVA_FUNCTION_ID="<riva-function-id>"

Get an API Key for the Mixtral 8x7B Instruct API Endpoint

Perform the following steps if you do not already have an API key. You can use different model API endpoints with the same API key.

Navigate to https://build.nvidia.com/explore/discover.

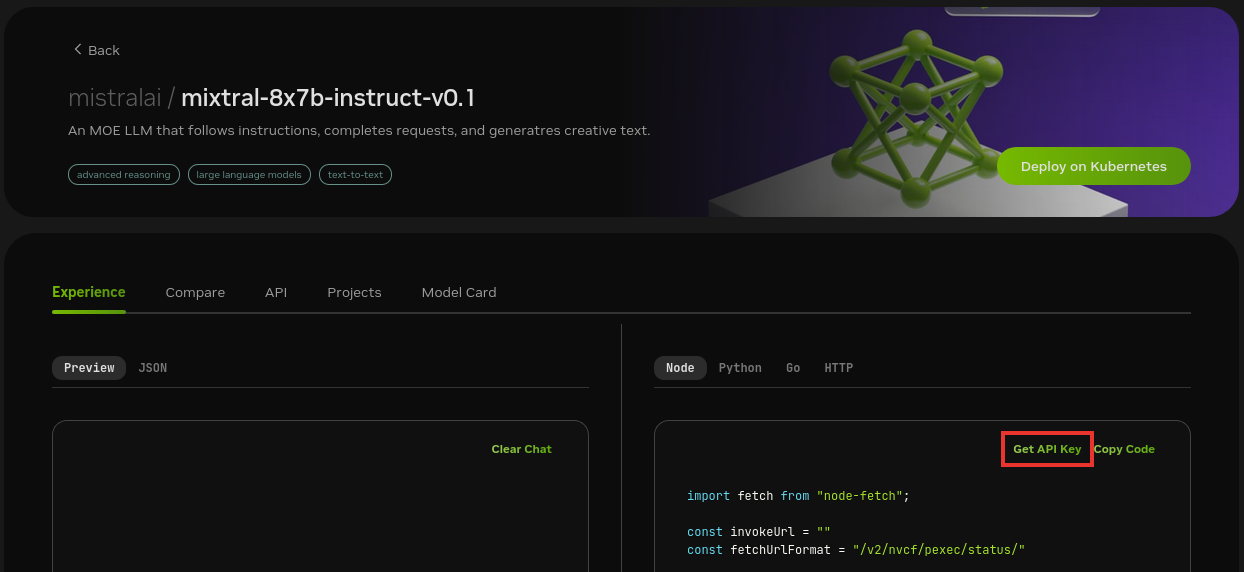

Find the Mixtral 8x7B Instruct card and click the card.

Click Get API Key.

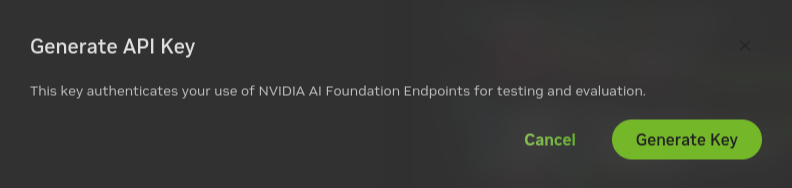

Click Generate Key.

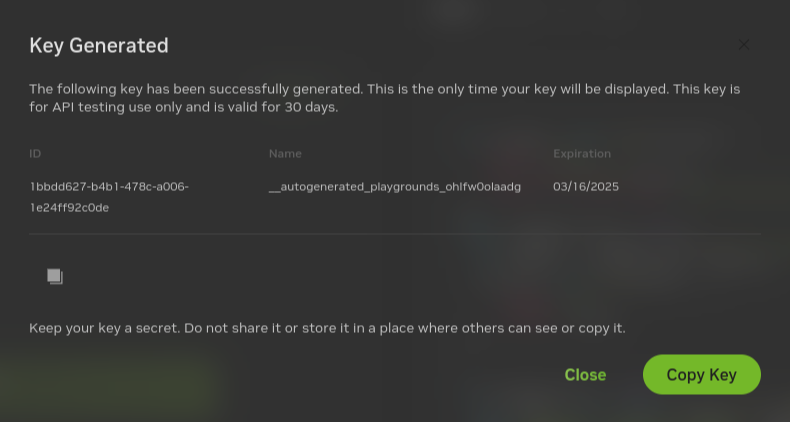

Click Copy Key and then save the API key. The key begins with the letters nvapi-.

Build and Start the Containers

In the Generative AI examples repository, export this variable in terminal.

Add the API key for the model endpoint:

export NVIDIA_API_KEY="nvapi-..."

From the root of the repository, build the containers:

$ docker compose --env-file deploy/compose/compose.env -f deploy/compose/rag-app-structured-data-chatbot.yaml build

Start the containers:

$ docker compose --env-file deploy/compose/compose.env -f deploy/compose/rag-app-structured-data-chatbot.yaml up -d

Example Output

✔ Network nvidia-rag Created ✔ Container chain-server Started ✔ Container rag-playground Started

Confirm the containers are running:

$ docker ps --format "table {{.ID}}\t{{.Names}}\t{{.Status}}"

Example Output

CONTAINER ID NAMES STATUS 39a8524829da rag-playground Up 2 minutes bfbd0193dbd2 chain-server Up 2 minutes

Next Steps

Access the web interface for the chat server. Refer to Using the Sample Chat Web Application for information about using the web interface.

Upload a CSV from the

RetrievalAugmentedGeneration/examples/csv_ragdirectory to the knowledge base.Enable the Use knowledge base checkbox when you submit a question.

Stop the containers by running

docker compose -f deploy/compose/rag-app-structured-data-chatbot.yaml down.